Let’s explain why the normal distribution is so important.

(This is a section in the notes here.)

Continue reading “The Law of Large Numbers and Central Limit Theorem”

We consider distributions that have a continuous range of values. Discrete probability distributions were defined by a probability mass function. Analogously, continuous probability distributions are defined by a probability density function.
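As a rough illustration of the analogy, the sketch below checks that a probability mass function sums to one while a probability density function integrates to one. The fair die, the exponential density with rate 1, and the helper names are all assumptions chosen for the example, and the integral is only approximated with a midpoint rule on a truncated range.

```python
import math

def pmf_fair_die(k):
    """Probability mass function of a fair six-sided die (illustrative choice)."""
    return 1 / 6 if k in range(1, 7) else 0.0

def pdf_exponential(x, rate=1.0):
    """Probability density function of an exponential distribution (illustrative choice)."""
    return rate * math.exp(-rate * x) if x >= 0 else 0.0

def integrate(f, a, b, steps=100_000):
    """Approximate the integral of f over [a, b] with the midpoint rule."""
    width = (b - a) / steps
    return sum(f(a + (i + 0.5) * width) for i in range(steps)) * width

# Discrete case: probabilities of individual values sum to 1.
total_mass = sum(pmf_fair_die(k) for k in range(1, 7))

# Continuous case: the density integrates to 1 (the range is truncated at 50).
total_density = integrate(pdf_exponential, 0.0, 50.0)

# Probabilities of intervals are areas under the density.
prob_interval = integrate(pdf_exponential, 0.0, 1.0)
```

Here `prob_interval` approximates the probability that the exponential variable lands in the interval from 0 to 1, which is the kind of quantity a density is used to compute.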

(This is a section in the notes here.)

There are some probability distributions that occur frequently. This is because they either have a particularly natural or simple construction, or they arise as the limit of some simpler distribution. Here we cover

(This is a section in the notes here.)

Often we are interested in the magnitude of an outcome as well as its probability. For example, in a gambling game the amount you win or lose is as important as the probability of each outcome.
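To make this concrete, here is a minimal sketch of weighting each amount by its probability. The game is hypothetical: you roll a fair die, win 4 if it shows a six, and lose 1 otherwise.

```python
from fractions import Fraction

# Hypothetical game: amount won (negative means lost) mapped to its probability.
payoffs = {
    4: Fraction(1, 6),   # roll a six
    -1: Fraction(5, 6),  # roll anything else
}

# Sanity check: the probabilities should sum to 1.
total_probability = sum(payoffs.values())

# Weight each amount by its probability and add up.
expected_winnings = sum(amount * prob for amount, prob in payoffs.items())
```

Under these assumed payoffs the weighted average is negative, so the game loses money on average even though a single win pays more than a single loss costs.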

(This is a section in the notes here.)

Conditional probabilities are probabilities where we have assumed that another event has occurred.
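A small sketch of this idea, assuming two fair dice and two events picked for illustration: conditioning on an event restricts attention to the outcomes where it occurred and then counts within that smaller set.

```python
from fractions import Fraction
from itertools import product

# Sample space for two fair dice: all ordered pairs, each equally likely.
outcomes = list(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event (a set of outcomes) under equally likely outcomes."""
    return Fraction(len(event), len(outcomes))

# Illustrative events: the sum is at least 10, and the first die shows a six.
sum_at_least_10 = {o for o in outcomes if sum(o) >= 10}
first_is_six = {o for o in outcomes if o[0] == 6}

# P(A | B) = P(A and B) / P(B)
p_unconditional = prob(sum_at_least_10)
p_conditional = prob(sum_at_least_10 & first_is_six) / prob(first_is_six)
```

In this example, knowing that the first die is a six raises the probability of a sum of at least 10 from `p_unconditional` to `p_conditional`.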

(This is a section in the notes here.)

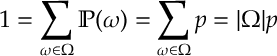

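Counting in Probability. If each outcome is equally likely, i.e. $\mathbb{P}(\omega) = p$ for all $\omega \in \Omega$, then since $\sum_{\omega \in \Omega} \mathbb{P}(\omega) = p\,|\Omega| = 1$ (where $|\Omega|$ is the number of outcomes in the set $\Omega$) it must be that $p = 1/|\Omega|$.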
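When all outcomes are equally likely, the probability of an event is the number of outcomes in it divided by the total number of outcomes. The sketch below counts outcomes directly for an assumed example of three fair coin tosses; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import product

# All sequences of three coin tosses, assumed equally likely.
sample_space = list(product("HT", repeat=3))

# Event: exactly two heads.
exactly_two_heads = [w for w in sample_space if w.count("H") == 2]

# Probability of a single outcome, and of the event, by counting.
p_single_outcome = Fraction(1, len(sample_space))
p_event = Fraction(len(exactly_two_heads), len(sample_space))
```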

(This is a section in the notes here.)

We want to calculate probabilities for different events. Events are sets of outcomes, and we recall that there are various ways of combining sets. The current section is a bit abstract but will become more useful for concrete calculations later.
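As a hedged sketch of how set operations translate into probability calculations, the example below uses Python sets for events on a single fair die roll. The events "even" and "greater than three" are assumptions made for illustration.

```python
from fractions import Fraction

# Sample space for one fair die roll.
omega = {1, 2, 3, 4, 5, 6}

def prob(event):
    """Probability of an event when every outcome in omega is equally likely."""
    return Fraction(len(event), len(omega))

# Two illustrative events.
even = {2, 4, 6}
greater_than_three = {4, 5, 6}

# Standard ways of combining sets.
either = even | greater_than_three   # union: at least one event occurs
both = even & greater_than_three     # intersection: both events occur
not_even = omega - even              # complement: the event does not occur

# Inclusion-exclusion links the probabilities of the combined events.
lhs = prob(either)
rhs = prob(even) + prob(greater_than_three) - prob(both)
```

Both `lhs` and `rhs` give the probability of the union, which shows why subtracting the intersection avoids counting shared outcomes twice.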

(This is a section in the notes here.)

I throw a coin times. I got

heads.

This is the appendix in the notes here.

In loose terms, the mixing time is the amount of time to wait before you can expect a Markov chain to be close to its stationary distribution. We give an upper bound for this.
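For intuition only, the sketch below computes a mixing time by brute force for a small chain rather than bounding it. The two-state transition matrix, the use of total variation distance, and the threshold of 1/4 are all assumptions chosen for the example (the threshold is a common convention), and the function names are hypothetical.

```python
def step(dist, P):
    """Advance a distribution (row vector) by one step of the chain with matrix P."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]

def tv_distance(p, q):
    """Total variation distance between two distributions on the same finite set."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))

def mixing_time(P, stationary, eps=0.25, max_steps=10_000):
    """Smallest t such that, from every starting state, the distribution at
    time t is within eps of the stationary distribution in total variation."""
    n = len(P)
    # One point-mass starting distribution per state.
    dists = [[1.0 if j == i else 0.0 for j in range(n)] for i in range(n)]
    for t in range(max_steps + 1):
        if max(tv_distance(d, stationary) for d in dists) <= eps:
            return t
        dists = [step(d, P) for d in dists]
    raise ValueError("chain did not mix within max_steps")

# Illustrative two-state chain and its stationary distribution.
P = [[0.9, 0.1],
     [0.2, 0.8]]
stationary = [2 / 3, 1 / 3]

t_mix = mixing_time(P, stationary)
```

Direct iteration like this is only practical for small state spaces, which is one reason analytical upper bounds are useful.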