In loose terms, the mixing time is the amount of time to wait before you can expect a Markov chain to be close to its stationary distribution. We give an upper bound for this.

We consider the following setup:

- We consider a discrete time Markov chain on finite state space

and transition matrix

.

- We assume that the Markov chain is irreducible and aperiodic and has stationary distribution,

.

- We will, at the end, assume that our Markov chain is reversible,1 that is for all

it satifies detailed balance

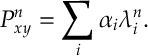

Here is a rough description of the main idea. We could diagonalize and get its eigenvalues

, then the entries of

look like

where could be perhaps be polynomial in

. In this expression the stationary distribution

is the coefficient of the eigenvalue of value

. All other terms go to zero. The one that goes slowest is the largest eigenvalue not equal to

. This determines the rate at which the Markov chain reaches stationarity. We now make this more formal.

We want to compare the marginal distribution of the Markov chain at a fixed time to the stationary distribution.

- For two probability distribution

and

the total variation distance is

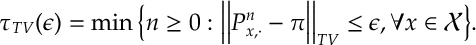

- For each

, the mixing time with respect to the total variation norm is

We prove some results about a mixing times with respect to a norm which is much more taylored to our chain; we then go back to total variation.

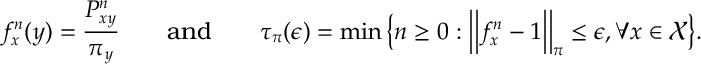

- For

and

, we define the norm

and inner product

by

- Note that the the distance

is convex. So in the above definition, once we have reached the mixing time from each initial state

, the chain is mixed from all initial distributions.

For the following proposition, we define

The mixing time is intended to give the first time that the relative mass between

and

equate within an

error. The proof is boarders on being a direct consequence of our definitions and assumptions.

Prop 1.

Proof. Observe that

So, in short, . Thus, iteratively applying this and the definitions of

we have that

The final inequality is a straightforward bound on

The final inequality is a straightforward bound on . Thus

has occured before for any

satisfying

rearranging for and using the bound

gives the above result.

The above bound gives the main flavour, we expect convergence to stationarity to be exponentially fast. Still, there is work left: our distance metric depends on our stationary distribution and we need to guarentee that . We will show that

is an eigenvalue of

, in particular, the magnitude of the largest eigenvalue (excluding the trival unit eigenvalue that all Markov chains must have).

We use the following lemma (which we don’t currently prove). The result for the better part is a statement of Spectral Theorem, which states an analogous result for symmetric matrices.2 As a consequence, the lemma below involves making the observation that for a reversible chain is a symmetric matrix.

Lemma 1. For the transition matrix of a reversible Markov chain, eigenvalues of are all real valued each with magnitude less than one (with one attaining this value) and their eigenvectors form an orthogonal basis (with repect to ).

However, we use it to prove the following lemma

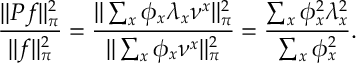

Lemma 2.

Proof. The definition of , , is defined over the supremum of

with

. We write

with repect to the orthogonal basis of eigenvectors

, we have

, Since

, the componentent of

corresponding to the largest eigenvalue must be zero, but all others may be chosen freely. Now, since each

is an eigenvector with value

for

, we have

Clearly this is maximized by chosing all off the weight of on the eigenvalue of largest magnitude (excluding the unit one which we know must have weight be zero).

The above lemma extracts a definition for that can now in prinicple calculated, e.g. by a diagonalizing

. This improves Proposition [Mix:Prop] but the metric used to define out mixing time is still depends on the stationary distribution

. We can work with the Total Varaition norm thanks to the following lemma

Basically norm is less than the

norm (by Jensen’s for instance), so for

we have

Putting together the last two lemmas and Proposition [Mix:Prop], we have the following result.

Theorem. where

where and

.

Literature

The arguments here are based on reading the two sources:

Montenegro, Ravi, and Prasad Tetali. “Mathematical aspects of mixing times in Markov chains.” Foundations and Trends® in Theoretical Computer Science 1.3 (2006): 237-354.

Levin, David A., and Yuval Peres. Markov chains and mixing times. Vol. 107. American Mathematical Soc., 2017.