We consider what is perhaps the simplest and most tractable queueing model: a single-server queue with Poisson arrivals and exponential service times. This is called the M/M/1 queue.

Continue reading “A single-server queue (M/M/1)”Poisson Arrivals See Time Averages (PASTA)

We are often interested in the state an arriving customer observes in a queue. If arrivals are Poisson, then the average arrival sees the system in its equilibrium state.

This may seem counterintuitive. When a customer arrives, we might expect the system to be slightly emptier in anticipation of the arrival. However, this is not the case for Poisson processes: they are uniformly distributed over time, and arrivals after time are independent of what happened before

.

Kelly’s Lemma

Kelly’s Lemma provides a way of using the time reversal of a Markov chain to calculate its stationary distribution. In theory, it is just a rewrite of the stationary distribution and time-reversal results for continuous-time chains. However, in application, it is different; you can take a guess at the time reversal of a chain $Q’$, and if you are right, i.e., if it checks out in Kelly’s Lemma, you get the stationary distribution as a consequence.

Continue reading “Kelly’s Lemma”Stationary Distributions (Continuous Time)

We quickly recap the results known for discrete time that also hold in continuous time. (The slight advantage from a modeling perspective is that in continuous time, transitions occur distinctly, which gives more chances for reversibility to hold)

Continue reading “Stationary Distributions (Continuous Time)”Continuous-time Markov chains

We want to model queueing systems in continuous time. Continuous-time Markov chains are a natural generalization of discrete-time Markov chains. We focus on developing intuition for continuous-time chains as the natural continuous-time extension of discrete chains, similar to our discussion of Poisson processes.

Continue reading “Continuous-time Markov chains”Poisson process

The Poisson process is a fundamental object in probability. Just as we have a uniform distribution for a single point in an interval, we would like a notion of a “uniform” distribution for a countable set of points in . This motivates the Poisson process. We develop this intuition and then give a definition.

Stationary Distributions — Recap

We have characterized recurrence in terms of return times. However, if a Markov chain is positive recurrent, what proportion of time does it spend in each state?

Continue reading “Stationary Distributions — Recap”Markov Chains Recurrence

We quickly recap the recurrence properties of Markov chains. The aim is to give a short review not a full rigorous treatment.

Continue reading “Markov Chains Recurrence”A discrete-time single-server queue

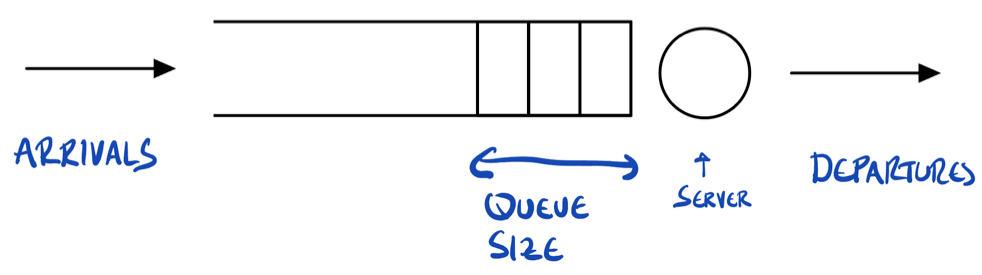

Many queues can be modeled as discrete-time Markov chains. Here, the state is the length of the queue, and fluctuations in arrivals and service give the random evolution from one time period to the next. Here is a diagram of a queue.

Figure: arrivals occur from the left and are stored in a buffer. Jobs or customers are represented as rectangles. A server is represented by a circle, which processes the jobs, and they then depart on the right-hand side.

Continue reading “A discrete-time single-server queue”Markov chains and matrices — A Quick Summary

Probabilities for a Markov chain can be expressed in terms of the probability vector and the transition matrix

.

For example, for and a function

,

So essentially, multiplication to the left gives probabilities and multiplication to the right gives expectations.

Notation explanation. If the above is unclear, some notation may need explaining. For , we multiply

copies of the matrix

together.1 Interpreting

as a row vector,

is a row vector, and

is the

-th component of that row vector.

Similarly, we can think of the function as a column vector

. Then

is a column vector under matrix multiplication, and

denotes its

-th component.

Throughout these notes, and

.

1 We use for transpose, not

.