(This is a section in the notes here.)

Conditional probabilities are probabilities where we have assumed that another event has occurred.

An example: Two aces. Suppose we have a deck of cards and we consider the probability of getting an ace. If we take one card out, then the probability of an ace is $\frac{4}{52}$. (There are $4$ aces in a pack of $52$ cards.) Now, given that the first card was an ace, we take a second card out of the pack. Notice that the probability of the second card being an ace has changed: there are now $3$ aces and $51$ cards in the pack, so the probability is $\frac{3}{51}$. (Also observe that this probability is different from the one we would get if we had assumed that the first card was not an ace, in which case the probability would be $\frac{4}{51}$.) Thus our condition on the first card has affected the probability for the second card. This is an example of conditional probability, which we now develop more generally.

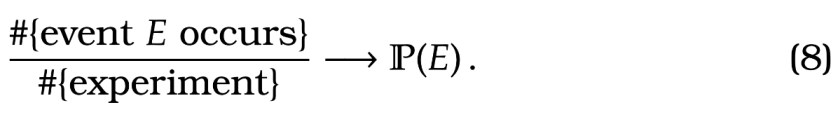

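Motivating a definition of conditional probability. Recall that we thought of probability as the proportion of experiments in which an event occurs:

$$\mathbb{P}(A) \approx \frac{\#\{\text{experiments where } A \text{ occurs}\}}{\#\{\text{experiments}\}}. \tag{8}$$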

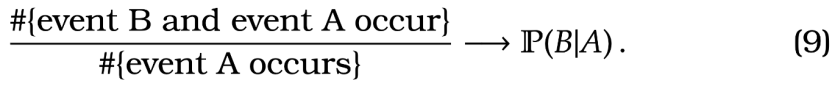

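Similarly, we think of conditional probability as the proportion of the time that an event occurs knowing that another event has occurred. In this case, an analogous statement would be that

$$\mathbb{P}(A \mid B) \approx \frac{\#\{\text{experiments where } A \text{ and } B \text{ occur}\}}{\#\{\text{experiments where } B \text{ occurs}\}}, \tag{9}$$

where here we use $\mathbb{P}(A \mid B)$ to denote the probability of event $A$ conditional on event $B$.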

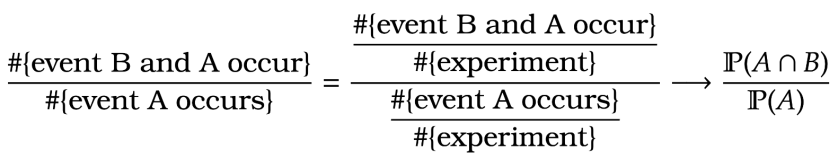

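Observe that we can express (9) in terms of (8). In particular,

$$\mathbb{P}(A \mid B) \approx \frac{\#\{A \text{ and } B \text{ occur}\} / \#\{\text{experiments}\}}{\#\{B \text{ occurs}\} / \#\{\text{experiments}\}} \approx \frac{\mathbb{P}(A \cap B)}{\mathbb{P}(B)}.$$

Here we divide the numerator and denominator of the fraction in (9) by the number of experiments and then apply (8) to both expressions.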
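The frequency argument above can be checked numerically. The following is a minimal sketch, assuming a fair six-sided die and two illustrative events that do not appear in the notes: $A$ = "the roll is even" and $B$ = "the roll is at least 4". It compares the proportion of $B$-experiments in which $A$ also occurs with the ratio of the two overall proportions.

```python
import random

def estimate_conditional(num_experiments=100_000, seed=0):
    """Estimate P(A | B) two ways from simulated die rolls.

    A = roll is even, B = roll is at least 4 (illustrative choices).
    """
    rng = random.Random(seed)
    count_b = 0        # experiments where B occurs
    count_a_and_b = 0  # experiments where both A and B occur
    for _ in range(num_experiments):
        roll = rng.randint(1, 6)
        if roll >= 4:
            count_b += 1
            if roll % 2 == 0:
                count_a_and_b += 1
    # Direct proportion: among experiments where B occurred, how often did A occur?
    direct = count_a_and_b / count_b
    # Ratio of overall proportions: an estimate of P(A and B) / P(B).
    ratio = (count_a_and_b / num_experiments) / (count_b / num_experiments)
    return direct, ratio

direct, ratio = estimate_conditional()
print(direct, ratio)  # both should be close to the exact value 2/3
```

The two estimates agree because dividing numerator and denominator by the number of experiments does not change the fraction.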

This motivates the following

Definition of conditional probability. Given the above we have:

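Definition [Conditional Probability] For events $A$ and $B$, the conditional probability of $A$ given $B$ is

$$\mathbb{P}(A \mid B) = \frac{\mathbb{P}(A \cap B)}{\mathbb{P}(B)}$$

when $\mathbb{P}(B) > 0$. By convention, if $\mathbb{P}(B) = 0$ then we define $\mathbb{P}(A \mid B) = 0$.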
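For a finite sample space with equally likely outcomes, the definition can be computed directly by counting. The sketch below is illustrative only: the helper names `prob` and `cond_prob` are hypothetical, the die example is not from the notes, and returning 0 when the conditioning event has probability zero is assumed as the convention.

```python
from fractions import Fraction

def prob(event, sample_space):
    """P(event) when every outcome in sample_space is equally likely."""
    return Fraction(len(event & sample_space), len(sample_space))

def cond_prob(a, b, sample_space):
    """P(a | b) = P(a and b) / P(b), taken to be 0 when P(b) = 0 (assumed convention)."""
    p_b = prob(b, sample_space)
    if p_b == 0:
        return Fraction(0)
    return prob(a & b, sample_space) / p_b

# Hypothetical example: one roll of a fair die.
omega = frozenset(range(1, 7))
six = frozenset({6})
even = frozenset({2, 4, 6})
print(cond_prob(six, even, omega))  # 1/3
```

Using `Fraction` keeps the arithmetic exact, so results can be compared with hand calculations.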

A couple of examples.

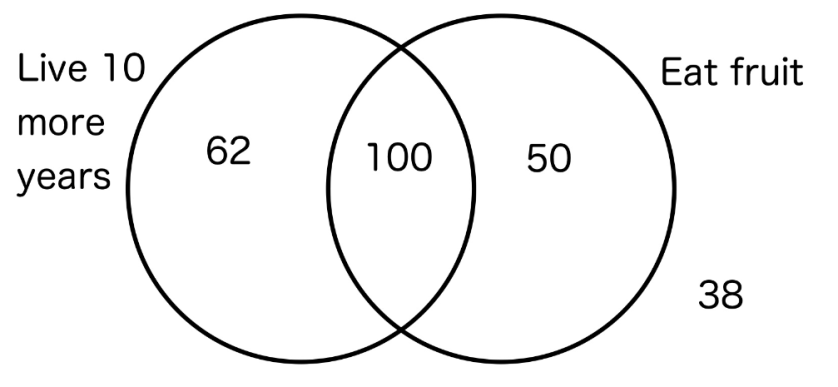

Example. The results of a survey of 250 different 75-year-olds are given in the following Venn diagram.

For a randomly selected participant in the survey:

1) Calculate the probability of living 10 more years given that they eat fruit.

2) Calculate the probability of living 10 more years given that they don’t eat fruit.

Answer. 1)

2)  So you should eat fruit…


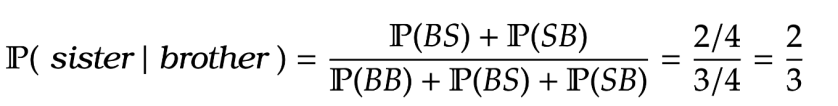

Example. I have two siblings. Given that I have a brother, what is the probability that I also have a sister?

Answer. Note that our sample space is $\Omega = \{BB, BS, SB, SS\}$. E.g. here $BS$ means the eldest sibling is a brother and the youngest is a sister. Each outcome has equal probability of $\frac{1}{4}$. So

$$\mathbb{P}(\text{sister} \mid \text{brother}) = \frac{\mathbb{P}(\{BS, SB\})}{\mathbb{P}(\{BB, BS, SB\})} = \frac{2/4}{3/4} = \frac{2}{3}.$$

For some, this is an example of conditional probability being a bit counter-intuitive, as one’s gut reaction is that the answer is a half. Notice that if I specified that my eldest sibling was a brother then the answer would indeed be a half. This is just one small example of the subtle art of manipulating conditional probabilities.
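The two answers in this example can be checked by enumerating the four equally likely outcomes. This is a small sketch; the encoding of outcomes as pairs of the letters `"B"` and `"S"` is an illustrative choice.

```python
from fractions import Fraction
from itertools import product

# Each outcome is (eldest, youngest); "B" = brother, "S" = sister.
outcomes = list(product("BS", repeat=2))

# Condition on "I have a brother" (at least one B).
has_brother = [o for o in outcomes if "B" in o]
brother_and_sister = [o for o in has_brother if "S" in o]
p_sister_given_brother = Fraction(len(brother_and_sister), len(has_brother))

# Condition instead on "my eldest sibling is a brother".
eldest_is_brother = [o for o in outcomes if o[0] == "B"]
sister_too = [o for o in eldest_is_brother if "S" in o]
p_sister_given_eldest_brother = Fraction(len(sister_too), len(eldest_is_brother))

print(p_sister_given_brother)         # 2/3
print(p_sister_given_eldest_brother)  # 1/2
```

The difference comes entirely from the conditioning event: "at least one brother" keeps three outcomes, while "eldest is a brother" keeps only two.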

Independence

We are interested in the setting where knowing that an event has occurred does not affect the probability of another event. E.g. if I roll a dice twice, in principle the outcome of the first roll should not influence the second roll. If event $A$ does not influence event $B$ then it should be that

$$\mathbb{P}(B \mid A) = \mathbb{P}(B).$$

I.e. conditioning on $A$ having happened does not change the probability of $B$ occurring. Since $\mathbb{P}(B \mid A) = \mathbb{P}(A \cap B) / \mathbb{P}(A)$, we can slightly more symmetrically express the above equality as

$$\mathbb{P}(A \cap B) = \mathbb{P}(A)\,\mathbb{P}(B).$$

This is what we call independence of two events.

Definition [Independence] We say that events $A$ and $B$ are independent if

$$\mathbb{P}(A \cap B) = \mathbb{P}(A)\,\mathbb{P}(B).$$

So if knowing that $A$ has happened does not affect the probability of $B$ then we multiply the probabilities together.
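The product condition can be tested exactly on a small sample space. The sketch below assumes two rolls of a fair die, as in the motivating remark; the helper names and the particular events are hypothetical choices for illustration.

```python
from fractions import Fraction
from itertools import product

omega = list(product(range(1, 7), repeat=2))  # (first roll, second roll)

def prob(predicate):
    """Probability of the event described by predicate, all outcomes equally likely."""
    return Fraction(sum(1 for w in omega if predicate(w)), len(omega))

def is_independent(pred_a, pred_b):
    """Check P(A and B) == P(A) * P(B) exactly."""
    p_both = prob(lambda w: pred_a(w) and pred_b(w))
    return p_both == prob(pred_a) * prob(pred_b)

first_is_six = lambda w: w[0] == 6
second_is_six = lambda w: w[1] == 6
sum_is_twelve = lambda w: w[0] + w[1] == 12

print(is_independent(first_is_six, second_is_six))  # True
print(is_independent(first_is_six, sum_is_twelve))  # False
```

The second check fails because a total of twelve forces the first roll to be a six, so the two events clearly influence one another.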

Warning! The following is sometimes confused by students. We say that two events are “mutually exclusive” if $A \cap B = \emptyset$. In that case we know from Lemma [lem:op1] that we add the probabilities together. This is not the same as independence, where we multiply the probabilities together.

As discussed, independence says that knowing an outcome is in $A$ does not affect the probability of $B$. However, if events $A$ and $B$ are mutually exclusive, then knowing the outcome is in $A$ affects the probability of $B$. Specifically, if we know an outcome is in $A$ then it definitely is not in $B$.
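A quick numerical contrast between the two notions, as a sketch with a single roll of a fair die and two hypothetical disjoint events (these specific sets are not from the notes):

```python
from fractions import Fraction

omega = range(1, 7)  # one roll of a fair die
a = {1, 2}           # hypothetical event A
b = {5, 6}           # hypothetical event B, disjoint from A

def p(event):
    """Probability of an event when all six outcomes are equally likely."""
    return Fraction(len(event), len(omega))

# In Python, `a | b` is set union and `a & b` is set intersection.
print(p(a | b) == p(a) + p(b))  # True: mutually exclusive events add
print(p(a & b) == p(a) * p(b))  # False: they are not independent
print(p(a & b) / p(a))          # 0, i.e. P(B | A) = 0 even though P(B) = 1/3
```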

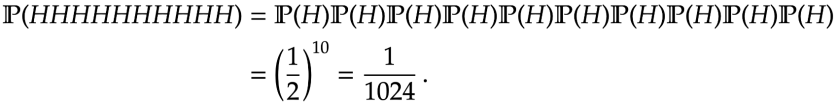

Example. What is the probability of getting $10$ heads in a row from an unbiased coin?

Answer. Since the tosses are independent, we multiply the probabilities together:

$$\mathbb{P}(10 \text{ heads in a row}) = \left(\tfrac{1}{2}\right)^{10} = \frac{1}{1024}.$$

This is actually a “magic trick”. Notice there are $1440$ minutes in a day. So there is enough time to have a reasonable chance of getting $10$ heads in a row over the course of a day. There are TV magicians who have performed this as a trick (by cutting out the roughly $1000$ previous camera takes).
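To put a rough number on "a reasonable chance", here is a minimal sketch. It assumes a run of 10 heads and, purely for illustration, one independent attempt per minute (1440 attempts in a day); neither the attempt rate nor the function names come from the notes.

```python
from fractions import Fraction

def prob_all_heads(n):
    """Probability that n independent fair tosses are all heads."""
    return Fraction(1, 2) ** n

def prob_success_within(attempts, n):
    """Chance that at least one of `attempts` independent tries gives n heads in a row."""
    p = float(prob_all_heads(n))
    return 1 - (1 - p) ** attempts

print(prob_all_heads(10))             # 1/1024
print(prob_success_within(1440, 10))  # roughly 0.75
```

The second function again relies on independence: the chance that every attempt fails is the product of the individual failure probabilities.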

Rules for Conditional Probabilities

Here are a few useful formulas for conditional probabilities. (As with operations on sets, the proofs are not entirely necessary to know for exams.)

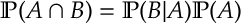

Lemma 1.

$$\mathbb{P}(A \cap B) = \mathbb{P}(A \mid B)\,\mathbb{P}(B).$$

Proof. Follows immediately from the definition of $\mathbb{P}(A \mid B)$.

This is useful as it can be easier to find $\mathbb{P}(A \mid B)$. E.g. as with the earlier two aces example, we can easily find the probability of drawing an ace from a deck given the previous card was an ace, and from that calculate the probability of two aces.

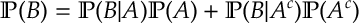

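Lemma 2.

$$\mathbb{P}(A) = \mathbb{P}(A \mid B)\,\mathbb{P}(B) + \mathbb{P}(A \mid B^c)\,\mathbb{P}(B^c).$$

Proof. Since $A = (A \cap B) \cup (A \cap B^c)$ is a union of two mutually exclusive events, adding their probabilities gives

$$\mathbb{P}(A) = \mathbb{P}(A \cap B) + \mathbb{P}(A \cap B^c),$$

and then applying Lemma 1 to each term gives

$$\mathbb{P}(A) = \mathbb{P}(A \mid B)\,\mathbb{P}(B) + \mathbb{P}(A \mid B^c)\,\mathbb{P}(B^c),$$

as required.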

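Note that the above result can be applied to any number of sets $B_1, \dots, B_n$. So long as $\bigcup_{i=1}^{n} B_i = \Omega$ and $B_i \cap B_j = \emptyset$ for $i \neq j$, it holds that

$$\mathbb{P}(A) = \sum_{i=1}^{n} \mathbb{P}(A \mid B_i)\,\mathbb{P}(B_i).$$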

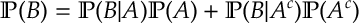

Lemma 3 [Bayes’ Rule]

$$\mathbb{P}(A \mid B) = \frac{\mathbb{P}(B \mid A)\,\mathbb{P}(A)}{\mathbb{P}(B)}.$$

The result is sometimes called Bayes’ Theorem, as well.

Bayes’ Rule reverses the order of the conditional probability. There is a whole branch of statistics devoted to this, which we will very briefly touch upon shortly.
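Lemma 2 (over a partition) and Bayes’ Rule combine naturally in code. The following is a sketch under stated assumptions: the function names are hypothetical, and the two-urn numbers are invented purely for illustration and do not appear in the notes.

```python
from fractions import Fraction

def total_probability(likelihoods, priors):
    """P(A) = sum_i P(A | B_i) P(B_i) for a partition B_1, ..., B_n."""
    assert sum(priors) == 1, "the B_i must partition the sample space"
    return sum(l * q for l, q in zip(likelihoods, priors))

def bayes(likelihoods, priors):
    """Return the list of P(B_i | A) = P(A | B_i) P(B_i) / P(A)."""
    p_a = total_probability(likelihoods, priors)
    return [l * q / p_a for l, q in zip(likelihoods, priors)]

# Hypothetical numbers: two urns chosen with probability 1/2 each;
# urn 1 holds 3 red balls out of 4, urn 2 holds 1 red ball out of 4.
likelihoods = [Fraction(3, 4), Fraction(1, 4)]  # P(red | urn i)
priors = [Fraction(1, 2), Fraction(1, 2)]       # P(urn i)
print(total_probability(likelihoods, priors))   # 1/2
print(bayes(likelihoods, priors))               # [Fraction(3, 4), Fraction(1, 4)]
```

Here the known quantities are the probabilities of red given each urn, and Bayes’ Rule reverses them into the probability of each urn given that a red ball was drawn.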

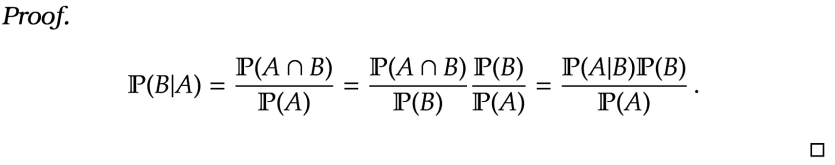

Example [Two aces] Taking out two cards from a well-shuffled deck. What is the probability that both cards are aces? What is the probability that the 2nd card is an ace?

Answer. We know that . Also we know that

, because after one ace is dealt then there are

aces and

cards. Thus using Lemma 1

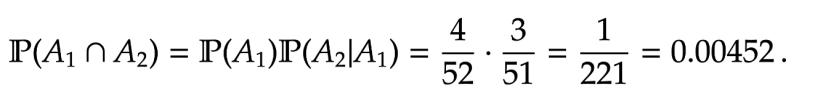

For the 2nd part, we can apply Lemma 7. Here

For the 2nd part, we can apply Lemma 7. Here

This should come as no surprise, as the probability that the 2nd card is an ace should be the same as the probability that the 1st card is an ace. (Imagine taking the first card out of the pack and putting it to the back, and then taking the 2nd card out and looking at it.)
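Both answers can be confirmed by brute-force enumeration of every ordered pair of cards. This is a sketch; representing the deck by ranks only (aces versus everything else) is a simplification chosen for illustration.

```python
from fractions import Fraction
from itertools import permutations

# Model the deck by ranks only: 4 aces ("A") and 48 other cards ("x").
deck = ["A"] * 4 + ["x"] * 48

# All ordered draws of two distinct cards (by position), each equally likely.
draws = list(permutations(range(len(deck)), 2))

both_aces = sum(1 for i, j in draws if deck[i] == "A" and deck[j] == "A")
second_ace = sum(1 for i, j in draws if deck[j] == "A")

print(Fraction(both_aces, len(draws)))   # 1/221
print(Fraction(second_ace, len(draws)))  # 1/13
```

The value $\frac{1}{13}$ equals $\frac{4}{52}$, matching the symmetry argument about the first and second cards.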

Example [Frequentist vs Bayesian Statistics] I have a biased coin. It is biased so that the probability of heads, $p$, is either

or

, but you don’t know which. So you throw the coin three times and get three heads. From this data determine if

or

.

Answer. This is clearly not a well-defined question, because we cannot determine with certainty the value of $p$. Given its subjective nature (there are no right answers), we give two approaches: a frequentist approach, which is a more classical statistical approach, and a Bayesian statistical approach.

The Frequentist Answer. For each of the two choices of $p$, the likelihood of three heads is

$$\mathbb{P}(\text{3 heads} \mid p) = p^3.$$

Since $p^3$ increases with $p$, the parameter that gives the highest probability is the larger of the two candidate values. So our answer for this problem is the estimator $\hat{p}$ equal to that larger value.

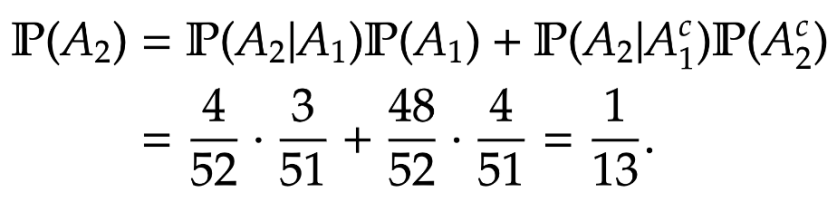

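The Bayesian Answer. Since we don’t know, prior to throwing the coin, which of the two possibilities holds, we could give each possibility equal likelihood, that is, probability $\frac{1}{2}$ for each of the two candidate values of $p$.

This is called the prior distribution. After throwing the coin and getting three heads, we want to update our estimate to find $\mathbb{P}(p \mid \text{3 heads})$ for each candidate value.

This is called the posterior distribution. We can use Bayes’ Rule to calculate the posterior:

$$\mathbb{P}(p \mid \text{3 heads}) = \frac{\mathbb{P}(\text{3 heads} \mid p)\,\mathbb{P}(p)}{\mathbb{P}(\text{3 heads})}, \tag{10}$$

where $\mathbb{P}(p)$ denotes the prior probability of a candidate value $p$.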

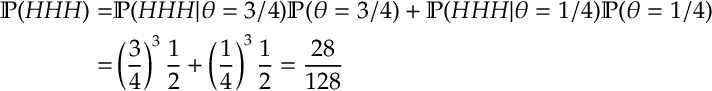

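To calculate $\mathbb{P}(\text{3 heads})$ we can apply Lemma 2, where the sums below run over the two candidate values of $p$:

$$\mathbb{P}(\text{3 heads}) = \sum_{p} \mathbb{P}(\text{3 heads} \mid p)\,\mathbb{P}(p) = \sum_{p} \tfrac{1}{2}\,p^3,$$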

which then gives with (10) that


and thus . Thus in the Bayesian approach we say that we think

with probability

.

Under reasonable assumptions and with enough data, both the Bayesian and frequentist approaches will converge on the correct parameter. The choice of the prior in the Bayesian approach is quite subjective. When the range of parameters gets large (or continuous), we need to solve an optimization problem in the frequentist approach, while in the Bayesian approach we need to sum over a large number of terms to find the normalizing constant, which was the term $\mathbb{P}(\text{3 heads})$ in the above example.
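Both approaches can be sketched in a few lines. The candidate values $\frac{1}{4}$ and $\frac{3}{4}$ below are hypothetical placeholders chosen for illustration (they are not taken from the example above), the prior is the equal-weight prior, and the function names are likewise assumptions.

```python
from fractions import Fraction

def likelihood(p, heads=3):
    """Probability of `heads` heads in a row when P(heads) = p."""
    return p ** heads

def mle(candidates, heads=3):
    """Frequentist answer: the candidate with the highest likelihood."""
    return max(candidates, key=lambda p: likelihood(p, heads))

def posterior(candidates, prior, heads=3):
    """Bayesian answer: posterior probability of each candidate via Bayes' Rule."""
    # Normalizing constant P(data), from the law of total probability (Lemma 2).
    evidence = sum(likelihood(p, heads) * prior[p] for p in candidates)
    return {p: likelihood(p, heads) * prior[p] / evidence for p in candidates}

# Hypothetical candidate values, with an equal-weight prior.
candidates = [Fraction(1, 4), Fraction(3, 4)]
prior = {p: Fraction(1, 2) for p in candidates}

print(mle(candidates))                               # 3/4
print(posterior(candidates, prior)[Fraction(3, 4)])  # 27/28
```

The `max` call is the (here trivial) optimization problem of the frequentist approach, and `evidence` is the normalizing constant that becomes expensive to compute when there are many candidate values.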