(This is a section in the notes here.)

I throw a coin $100$ times. I got

heads.

Question. How many heads should I expect?

Answer. 50 heads should be expected.

Another experiment, I throw a dice.

Question. If I throw the dice forever, then what proportion of the throws are a .

Answer. The probability of this outcome is $\frac{1}{6}$.

A slightly tougher one: I stop and start a stopwatch and look at the last digit.

Question. What is the probability that this digit is even?

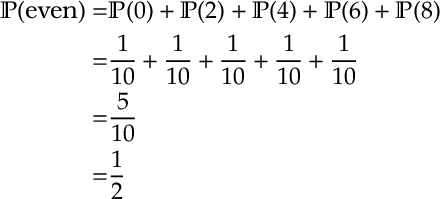

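Answer. It's $\frac{1}{2}$, because half the digits are even. More precisely, there are $5$ even digits, each having probability $\frac{1}{10}$ (as there are $10$ digits). So

$$\mathbb{P}(\text{even}) = 5 \times \frac{1}{10} = \frac{1}{2}.$$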

Discussion

Above we introduced various pieces of terminology:

experiment, outcome, probability, expectation…

We will define these more precisely soon.

In the dice question, we see that a probability can be thought of as an idealized proportion, obtained when we repeat an experiment infinitely many times. Since it is a proportion, notice that a probability always lies between 0 and 1.

In both the dice and stopwatch questions, notice that counting was useful to us. E.g. in the stopwatch question, we counted the number of outcomes of interest (the even digits) and the total number of outcomes (the $10$ possible digits). The probability of an even number was the ratio of these, $\frac{5}{10} = \frac{1}{2}$. In general, counting is an important starting point in probability.¹
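The counting argument for the stopwatch question can be sketched in a few lines of Python. This is only an illustration, and it assumes that each of the ten last digits is equally likely; the variable names are made up for this sketch.

```python
from fractions import Fraction

# The 10 possible last digits (assumed equally likely).
digits = range(10)

# Outcomes of interest: the even digits.
even_digits = [d for d in digits if d % 2 == 0]

# Probability = (number of outcomes of interest) / (total number of outcomes).
p_even = Fraction(len(even_digits), len(digits))
print(p_even)  # 1/2
```

Using `Fraction` keeps the ratio exact, so the result is the same $\frac{1}{2}$ obtained by hand.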

Notice that in the stopwatch question, the stopwatch is deterministic. However, our interaction with the stopwatch introduces randomness. Analogously, the particles of air in a room can be argued to move deterministically, but small perturbations of the system move it towards a state where the particles are uniformly random in the room.

When we discuss randomness colloquially, we often think of it as something that cannot be known. However, it is important to note that, when studying probability (and randomness), our uncertainty is quantifiable. Thus we can reason about randomness mathematically. The point of this course is to introduce initial concepts and principles in probability.

Beyond this course, it is worth noting that probability has many applications in statistics, finance and gambling, game theory, algorithm design, operational logistics, physics, machine learning…

Probability Terminology and Definitions

In probability, we consider an experiment. E.g. we throw two dice and add the total.

An outcome is the result of the experiment. E.g. if the first dice shows $a$ and the 2nd shows $b$ then the outcome is $a + b$.

The sample space is the set of possible outcomes, e.g. $\Omega = \{2, 3, \dots, 12\}$.

An event is a subset of outcomes from the sample space. E.g. ,

,

We can define an event by explicitly listing the outcomes, e.g. , or by implicitly stating the outcomes, e.g. we can also write

.)
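As a rough illustration of the two ways of writing down an event, here is a small Python sketch for the experiment where we throw two dice and add the total. The particular event used ("the total is even") is a hypothetical choice made for this sketch, not necessarily the example intended in the notes.

```python
# Sample space for "throw two dice and add the total".
sample_space = set(range(2, 13))

# An event defined by explicitly listing its outcomes (illustrative choice).
explicit_event = {2, 4, 6, 8, 10, 12}

# The same event defined implicitly, by a condition on the outcomes.
implicit_event = {total for total in sample_space if total % 2 == 0}

# Both descriptions give the same subset of the sample space.
print(explicit_event == implicit_event)   # True
print(explicit_event <= sample_space)     # True: an event is a subset
```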

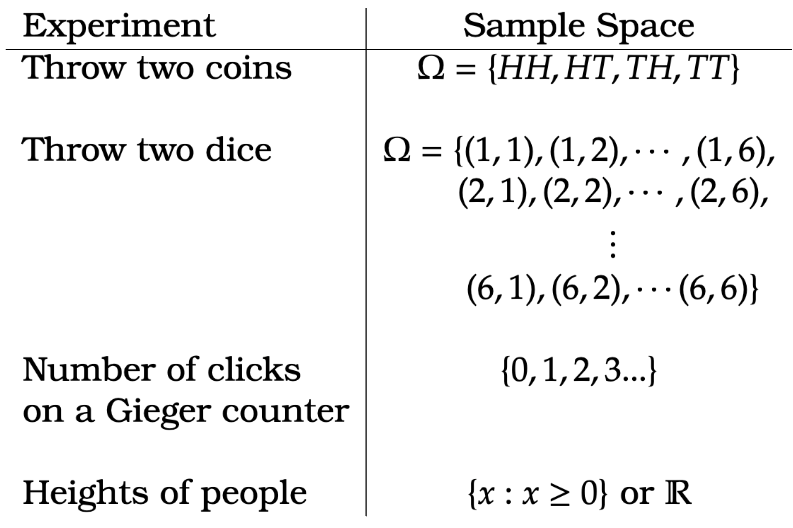

For a given set of events, there may be more than one way to define the sample space of an experiment. E.g. if we want to know the sum of two dice, we could instead take the sample space to be the set of pairs of outcomes for the first and second dice throw. (See table below.)

Examples

From the above table, note that sample spaces can be finite, (countably) infinite, or a continuum.
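To see how the two sample spaces for the sum of two dice relate, the following sketch lists the ordered pairs for the first and second throw and counts how many pairs give each total. It assumes two fair six-sided dice, so that all pairs are equally likely; the names `pairs` and `sum_probs` are just labels for this sketch.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Sample space of ordered pairs (first throw, second throw): 36 outcomes.
pairs = list(product(range(1, 7), repeat=2))

# Count how many pairs give each total.
counts = Counter(a + b for a, b in pairs)

# Probability of each total, assuming all 36 pairs are equally likely.
sum_probs = {total: Fraction(n, len(pairs)) for total, n in sorted(counts.items())}

for total, prob in sum_probs.items():
    print(total, prob)
```

The pairs are equally likely but the totals are not, which is one reason the larger sample space can be the more convenient one for counting.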

Definition of Discrete Probability.

For finite or countably infinite sample spaces, we can define probabilities as follows.

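Definition [Probability – Discrete] For a sample space $\Omega$, probabilities are numbers $\mathbb{P}(\omega)$ for each $\omega \in \Omega$ such that

- (Positive) For $\omega \in \Omega$, $\mathbb{P}(\omega) \geq 0$

- (Sums to one) $\sum_{\omega \in \Omega} \mathbb{P}(\omega) = 1$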

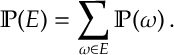

For events, $E \subseteq \Omega$, we get the probability of the event by summing

$$\mathbb{P}(E) = \sum_{\omega \in E} \mathbb{P}(\omega).$$

The above is a good definition of probability for finite (or countably infinite) sample spaces. When we consider probabilities for continuous sample spaces, the definition needs to be modified.
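The discrete definition can be checked mechanically for a finite sample space. The sketch below stores the probability of each outcome in a dictionary, tests the two conditions (positive, sums to one), and sums over an event. The function names and the fair-dice example are assumptions made for this illustration.

```python
from fractions import Fraction

def is_discrete_probability(probs):
    """Check the two conditions: every number is >= 0 and they sum to one."""
    return all(p >= 0 for p in probs.values()) and sum(probs.values()) == 1

def event_probability(probs, event):
    """Probability of an event: sum the probabilities of its outcomes."""
    return sum(probs[outcome] for outcome in event)

# Hypothetical example: one fair six-sided dice.
dice = {face: Fraction(1, 6) for face in range(1, 7)}

print(is_discrete_probability(dice))         # True
print(event_probability(dice, {2, 4, 6}))    # 1/2
```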

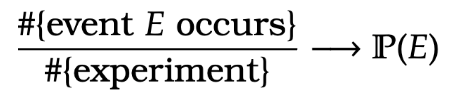

An informal definition. The above definition gives us a working mathematical definition for probability. That said, it is worth noting that intuitively we consider probabilities to represent the long-run proportion of time an event (or outcome) has occurred in an experiment. So informally, if we repeat a number of experiments, which we denote by #{experiment}, and for those we count the number of times an event occurs, #{event occurs}, and if we let the number of experiments get large, that is $\#\{\text{experiment}\} \to \infty$, then it should hold that

$$\frac{\#\{\text{event occurs}\}}{\#\{\text{experiment}\}} \to \mathbb{P}(\text{event}).$$

Later, when we are a bit more precise about what we mean by “repeat a number of experiments”, the above statement will more formally be called the Law of Large Numbers.
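The long-run proportion idea can be explored with a small simulation. This is only a sketch: it assumes a fair six-sided dice simulated with Python's pseudo-random generator, and the event "the throw is a 6" and the function name `long_run_proportion` are illustrative choices.

```python
import random

def long_run_proportion(num_experiments, seed=0):
    """Proportion of simulated fair dice throws that show a 6."""
    rng = random.Random(seed)  # fixed seed so that runs are repeatable
    event_occurs = sum(1 for _ in range(num_experiments) if rng.randint(1, 6) == 6)
    return event_occurs / num_experiments

# The proportion should settle near 1/6 as the number of experiments grows.
for n in (100, 10_000, 100_000):
    print(n, long_run_proportion(n))
```

For small numbers of experiments the proportion can be some way from $\frac{1}{6}$; it is only as the number of experiments gets large that it should settle down.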

Examples.

Example 1. For the experiment where we throw two coins, calculate

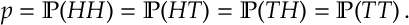

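Answer 1. For the sample space $\Omega = \{HH, HT, TH, TT\}$, each outcome is equally likely, i.e.

$$\mathbb{P}(HH) = \mathbb{P}(HT) = \mathbb{P}(TH) = \mathbb{P}(TT) = p.$$

Also probabilities sum to one so

$$\mathbb{P}(HH) + \mathbb{P}(HT) + \mathbb{P}(TH) + \mathbb{P}(TT) = 1.$$

This implies $4p = 1$ and so $p = \frac{1}{4}$.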

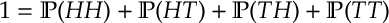

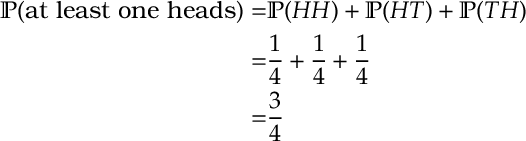

From this point there are two ways to solve the question:

- Since

, we can directly sum over the outcomes in the event

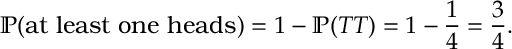

- Since probabilities sum to one

Thus

Thus
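The two approaches, summing directly over the outcomes in an event or using the fact that probabilities sum to one, can be compared in a short sketch. The event used here ("at least one head") is an assumption chosen for illustration; it may not be the event asked for in the example above.

```python
from fractions import Fraction
from itertools import product

# Sample space for two coin throws: HH, HT, TH, TT, all equally likely.
sample_space = ["".join(throws) for throws in product("HT", repeat=2)]
prob = {outcome: Fraction(1, len(sample_space)) for outcome in sample_space}

# Assumed event for illustration: at least one head.
event = {outcome for outcome in sample_space if "H" in outcome}

# Way 1: sum directly over the outcomes in the event.
direct = sum(prob[outcome] for outcome in event)

# Way 2: probabilities sum to one, so subtract the outcomes not in the event.
via_complement = 1 - sum(prob[o] for o in sample_space if o not in event)

print(direct, via_complement)  # 3/4 3/4
```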

Example 2. A bag contains three green balls and a red ball. Two balls are taken out at random. What is the probability that both are green?

Answer 2. Here are three ways to answer this question:

1. We can explicitly list by taking the balls out one at a time and count. Here we label the three green balls and the red ball

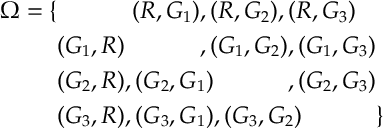

. The probability space is

There are 12 equally likely outcomes and 6 outcomes with both green so

There are 12 equally likely outcomes and 6 outcomes with both green so  (Notice we had to label the three balls

(Notice we had to label the three balls because if we did not, then

. So we could not count up events with equal probability.)

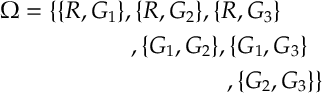

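2. We can imagine we take out the balls simultaneously. Again we label the three green balls and the red ball $g_1, g_2, g_3, r$. Recall we use curly brackets for sets, where the order does not matter, and we use round brackets when the order matters. (E.g. $\{g_1, g_2\} = \{g_2, g_1\}$ but $(g_1, g_2) \neq (g_2, g_1)$.) The sample space in this case is

$$\Omega = \{\{g_1,g_2\}, \{g_1,g_3\}, \{g_2,g_3\}, \{g_1,r\}, \{g_2,r\}, \{g_3,r\}\}.$$

There are 6 equally likely outcomes and 3 outcomes with both green so

$$\mathbb{P}(\text{both green}) = \frac{3}{6} = \frac{1}{2}.$$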

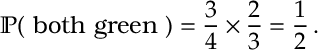

3. We can reason as follows. The probability the first ball removed is green is $\frac{3}{4}$, as three out of four balls are green. Given the first ball is green, the probability the 2nd ball is green is $\frac{2}{3}$, as now two out of three balls are green. So out of the three quarters of the time where the first ball is green, two thirds of the time the 2nd ball is green. Two thirds of three quarters is a half. So

$$\mathbb{P}(\text{both green}) = \frac{3}{4} \times \frac{2}{3} = \frac{1}{2}.$$

(This third argument might feel a little vague at first. We go into this in more detail when we discuss conditional probability, a bit later.)
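The three ways of answering Example 2 can be checked against each other by enumeration. This is a sketch, with the labels `g1`, `g2`, `g3`, `r` and the helper `both_green` chosen for illustration.

```python
from fractions import Fraction
from itertools import combinations, permutations

balls = ["g1", "g2", "g3", "r"]  # three labelled green balls and one red ball

def both_green(draw):
    """True if every ball in the draw is one of the green balls."""
    return all(ball.startswith("g") for ball in draw)

# Way 1: balls taken out one at a time, so the order matters (12 outcomes).
ordered = list(permutations(balls, 2))
p_ordered = Fraction(sum(both_green(d) for d in ordered), len(ordered))

# Way 2: balls taken out simultaneously, so the order does not matter (6 outcomes).
unordered = list(combinations(balls, 2))
p_unordered = Fraction(sum(both_green(d) for d in unordered), len(unordered))

# Way 3: first ball green (3 out of 4), then second ball green (2 out of 3).
p_sequential = Fraction(3, 4) * Fraction(2, 3)

print(p_ordered, p_unordered, p_sequential)  # 1/2 1/2 1/2
```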


1. Although we initially spend a fair bit of time on counting, it must be added that there is much more to probability than counting.