Some probability distributions occur frequently, either because they have a particularly natural or simple construction or because they arise as the limit of some simpler distribution. Here we cover

- Bernoulli random variables

- Binomial distribution

- Geometric distribution

- Poisson distribution.

(This is a section in the notes here.)

Of course there are many other important distributions. As with counting, it is easy when first learning about probability to think of different probability distributions as being a main destination for probability. However, it is perhaps better to think of the probability distributions that we cover now as simple building blocks that can later be used to construct more expressive probabilistic and statistical models.

Again we focus on probability distributions that take discrete values, though shortly we will begin to discuss their continuous counterparts.

Notation. There are many different distributions, which often have different parameters. For instance, shortly we will define the Binomial distribution, which has two parameters $n$ and $p$ and which we denote by $\text{Bin}(n,p)$. If a random variable $X$ has a specific distribution then we use “$\sim$” to denote this. E.g. if $X$ is a Binomial random variable with parameters $n$ and $p$ then we write

$$X \sim \text{Bin}(n,p) \,.$$

Bernoulli Distribution.

We start with the simplest discrete probability distribution.

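Definition [Bernoulli Distribution] A random variable that is either zero or one is a Bernoulli random variable. That is, we write $X \sim \text{Bern}(p)$ if

$$\mathbb P(X = 1) = p \qquad \text{and} \qquad \mathbb P(X = 0) = 1 - p \,.$$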

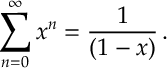

It is a straightforward calculation to show that, for $X \sim \text{Bern}(p)$,

$$\mathbb E [ X] = p , \qquad \text{and} \qquad \mathbb V(X) = p(1-p) \,.$$
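For completeness, here is a sketch of that calculation. It assumes a Bernoulli random variable $X$ that takes the value $1$ with probability $p$ and $0$ with probability $1-p$, and it uses the standard identity $\mathbb V(X) = \mathbb E[X^2] - \mathbb E[X]^2$.

```latex
\begin{aligned}
\mathbb E[X] &= 0 \cdot (1-p) + 1 \cdot p = p , \\
\mathbb E[X^2] &= 0^2 \cdot (1-p) + 1^2 \cdot p = p , \\
\mathbb V(X) &= \mathbb E[X^2] - \mathbb E[X]^2 = p - p^2 = p(1-p) .
\end{aligned}
```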

Binomial Distribution.

If we take $X_1, \dots, X_n$ to be independent Bernoulli random variables with parameter $p$, and we add them together,

$$X = \sum_{i=1}^{n} X_i \,,$$

then we get a Binomial distribution with parameters $n$ and $p$.

So if we consider an experiment with probability of success $p$, repeat the experiment $n$ times and count up the number of successes, then the resulting probability distribution is a Binomial distribution.

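Let’s briefly consider the probability that $X = k$. One event where $X = k$ occurs is when the first $k$ experiments end in success and the rest fail, that is $X_1 = \dots = X_k = 1$ and $X_{k+1} = \dots = X_n = 0$. Note that by independence

$$\mathbb P( X_1 = 1, \dots, X_k = 1, X_{k+1} = 0, \dots, X_n = 0 ) = p^k (1-p)^{n-k} \,.$$

Indeed the probability of any individual sequence where $X = k$ is $p^k (1-p)^{n-k}$. So how many such sequences are there? Well, we have seen this before. It is the number of ways we can label $n$ points with $k$ ones (see additional remarks on combinations in Section [sec:Counting]), that is the combination $\binom{n}{k}$. Thus we have

$$\mathbb P(X = k) = \binom{n}{k} p^k (1-p)^{n-k} \,.$$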

This motivates the following definition.

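Definition [Binomial Distribution] A random variable $X$ has a binomial distribution with parameters $n$ and $p$, if it has probability mass function

$$\mathbb P(X = k) = \binom{n}{k} p^k (1-p)^{n-k}$$

for $k = 0, 1, \dots, n$, and we write $X \sim \text{Bin}(n,p)$.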
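The counting argument behind the binomial probability mass function can be checked by brute force. The sketch below is illustrative only: the function names and the values $n = 6$, $p = 0.3$ are assumptions made for the example. It lists every 0/1 sequence of length $n$, adds up the probability $p^k(1-p)^{n-k}$ of those with exactly $k$ ones, and compares the total with $\binom{n}{k} p^k (1-p)^{n-k}$.

```python
from itertools import product
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) from the formula: comb(n, k) sequences, each with the same probability."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_pmf_by_enumeration(k: int, n: int, p: float) -> float:
    """Add up the probability of every 0/1 sequence of length n that has k ones."""
    total = 0.0
    for seq in product([0, 1], repeat=n):
        if sum(seq) == k:
            total += p**k * (1 - p) ** (n - k)
    return total


# Illustrative values (assumed for this example).
n, p = 6, 0.3
for k in range(n + 1):
    print(k, binom_pmf(k, n, p), binom_pmf_by_enumeration(k, n, p))
```

The enumeration visits $2^n$ sequences, so it is only practical for small $n$; the formula is what one would use in practice.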

Here are some results on Binomial distributions that might be handy.

Lemma 1. If $X \sim \text{Bin}(n,p)$, then

$$\mathbb E[X] =np , \quad \text{and} \quad \mathbb V(X) = n p (1-p) \, .$$
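Lemma 1 can be sanity-checked numerically by summing directly over the binomial probability mass function. This is a minimal sketch rather than a proof; the helper names and the values $n = 12$, $p = 0.25$ are assumptions chosen for illustration.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ Bin(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def mean_and_variance(n: int, p: float) -> tuple[float, float]:
    """Mean and variance of Bin(n, p), computed by summing over the pmf."""
    ks = range(n + 1)
    mean = sum(k * binom_pmf(k, n, p) for k in ks)
    second_moment = sum(k * k * binom_pmf(k, n, p) for k in ks)
    return mean, second_moment - mean**2


# Illustrative values (assumed for this example).
n, p = 12, 0.25
mean, var = mean_and_variance(n, p)
print(mean, n * p)            # the two numbers should agree up to rounding
print(var, n * p * (1 - p))   # likewise
```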


Lemma 2. If $X \sim \text{Bin}(n,p)$ and $Y \sim \text{Bin}(m,p)$, and $X$ and $Y$ are independent, then

$$X + Y \sim \text{Bin}(n+m, p) \,.$$

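Proof. $X = \sum_{i=1}^{n} X_i$ for $X_i$ independent and $X_i \sim \text{Bern}(p)$, and $Y = \sum_{j=1}^{m} Y_j$ for $Y_j$ independent and $Y_j \sim \text{Bern}(p)$. So

$$X + Y = \sum_{i=1}^{n} X_i + \sum_{j=1}^{m} Y_j \,,$$

which is a sum of $n+m$ independent Bernoulli random variables with parameter $p$. Thus $X+Y$ is Binomial with parameters $n+m$ and $p$.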
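The statement of Lemma 2 can also be checked numerically. The distribution of a sum of two independent random variables is the convolution of their probability mass functions, so the sketch below convolves the mass functions of $\text{Bin}(n,p)$ and $\text{Bin}(m,p)$ and compares the result with $\text{Bin}(n+m,p)$. The helper names and the values $n = 5$, $m = 7$, $p = 0.4$ are assumptions made for illustration.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ Bin(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def convolve(pmf_x: list[float], pmf_y: list[float]) -> list[float]:
    """pmf of X + Y for independent X and Y taking values 0, 1, 2, ..."""
    out = [0.0] * (len(pmf_x) + len(pmf_y) - 1)
    for i, a in enumerate(pmf_x):
        for j, b in enumerate(pmf_y):
            out[i + j] += a * b
    return out


# Illustrative values (assumed for this example).
n, m, p = 5, 7, 0.4
pmf_sum = convolve(
    [binom_pmf(k, n, p) for k in range(n + 1)],
    [binom_pmf(k, m, p) for k in range(m + 1)],
)
direct = [binom_pmf(k, n + m, p) for k in range(n + m + 1)]
print(max(abs(a - b) for a, b in zip(pmf_sum, direct)))  # should be tiny
```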

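Lemma 3. If $X \sim \text{Bin}(n,p)$ and $Y_1, \dots, Y_n$ are independent Bernoulli random variables with parameter $q$, independent of $X$, then

$$\sum_{i=1}^{X} Y_i \sim \text{Bin}(n, pq) \,.$$

Proof. Since $X \sim \text{Bin}(n,p)$ we can write $X = \sum_{i=1}^{n} X_i$ for independent $X_i \sim \text{Bern}(p)$. Note that an equivalent way (in distribution) to represent the above random variable is

$$\sum_{i=1}^{n} X_i Y_i \,.$$

Since $X_i$ and $Y_i$ are independent,

$$\mathbb P(X_i Y_i = 1) = \mathbb P(X_i = 1)\, \mathbb P(Y_i = 1) = pq \,.$$

Since $X_i Y_i \sim \text{Bern}(pq)$, independently over $i$, the above random variable must be $\text{Bin}(n, pq)$.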
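A numerical check of this thinning property can be sketched as follows. It assumes the setting of Lemma 3 as a binomial count $X \sim \text{Bin}(n,p)$ whose successes are each kept independently with probability $q$; the symbol $q$, the function names and the values used are assumptions for illustration. Conditioning on $X = x$, the number kept is $\text{Bin}(x, q)$, so the mass function of the thinned count is a sum over $x$.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ Bin(n, p); zero outside 0..n."""
    if k < 0 or k > n:
        return 0.0
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def thinned_pmf(j: int, n: int, p: float, q: float) -> float:
    """P(number kept = j): condition on X = x, then keep Bin(x, q) of the x successes."""
    return sum(binom_pmf(x, n, p) * binom_pmf(j, x, q) for x in range(j, n + 1))


# Illustrative values (assumed for this example).
n, p, q = 8, 0.6, 0.5
for j in range(n + 1):
    print(j, thinned_pmf(j, n, p, q), binom_pmf(j, n, p * q))
```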

Geometric Distribution

Suppose we throw a biased coin until the first time that it lands on heads. The distribution of the number of throws is a geometric distribution. For instance, the probability that it takes $4$ coin throws is the same as the probability of $3$ tails in a row and then one heads, which is

$$(1-p)^3 p \,,$$

where $p$ is the probability of heads. In general, the probability we need $k$ throws is

$$(1-p)^{k-1} p \,.$$

This gives the geometric distribution.

Definition [Geometric distribution] The geometric distribution with success probability $p$ is the distribution with probability mass function

$$\mathbb P(X = k) = (1-p)^{k-1} p$$

for $k = 1, 2, \dots$, and we write $X \sim \text{Geom}(p)$.

The following lemma is useful for geometric distributions, and also for various forms of compound interest and other applications.

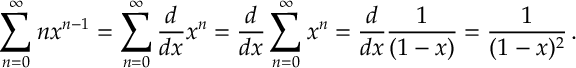

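Lemma 4. [Geometric Series] For $|x| < 1$,

$$\sum_{k=0}^{\infty} x^k = \frac{1}{1-x} , \qquad \sum_{k=1}^{\infty} k x^{k-1} = \frac{1}{(1-x)^2} , \qquad \sum_{k=2}^{\infty} k(k-1) x^{k-2} = \frac{2}{(1-x)^3} \,.$$

Proof. Let $S_n = 1 + x + \dots + x^n$, so that $x S_n = x + x^2 + \dots + x^{n+1}$.

Now subtracting gives

$$(1-x) S_n = 1 - x^{n+1} \,.$$

Thus, letting $n \rightarrow \infty$,

$$\sum_{k=0}^{\infty} x^k = \frac{1}{1-x} \,.$$

Differentiating the above with respect to $x$ gives

$$\sum_{k=1}^{\infty} k x^{k-1} = \frac{1}{(1-x)^2} \,.$$

Differentiating again gives

$$\sum_{k=2}^{\infty} k(k-1) x^{k-2} = \frac{2}{(1-x)^3} \,.$$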

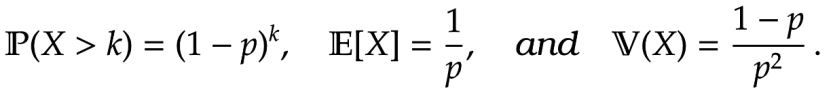

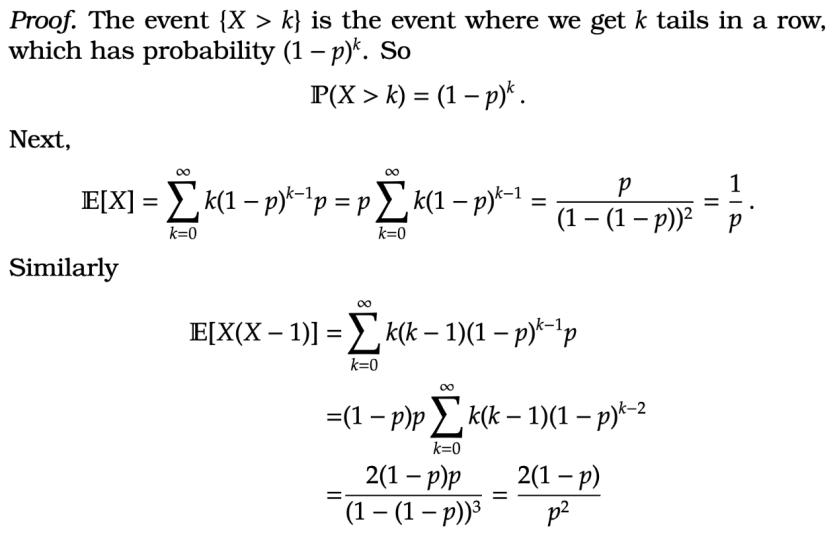
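The geometric series and the two series obtained from it by differentiating can be checked numerically with long partial sums. The sketch below assumes the three standard identities $\sum_{k \geq 0} x^k = 1/(1-x)$, $\sum_{k \geq 1} k x^{k-1} = 1/(1-x)^2$ and $\sum_{k \geq 2} k(k-1) x^{k-2} = 2/(1-x)^3$ for $|x| < 1$; the function name, the number of terms and the value $x = 0.9$ are illustrative choices.

```python
def partial_sums(x: float, terms: int = 2000) -> tuple[float, float, float]:
    """Partial sums of the geometric series and of its first two derivatives."""
    s0 = sum(x**k for k in range(terms))
    s1 = sum(k * x ** (k - 1) for k in range(1, terms))
    s2 = sum(k * (k - 1) * x ** (k - 2) for k in range(2, terms))
    return s0, s1, s2


# Illustrative value (assumed for this example); any |x| < 1 works,
# although more terms are needed as |x| approaches 1.
x = 0.9
s0, s1, s2 = partial_sums(x)
print(s0, 1 / (1 - x))
print(s1, 1 / (1 - x) ** 2)
print(s2, 2 / (1 - x) ** 3)
```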

Lemma 4. If $X \sim \text{Geom}(p)$ then

$$\mathbb P (X > k) = (1-p)^k, \quad \mathbb E[ X] = \frac{1}{p} ,\quad \text{and} \quad \mathbb V(X) = \frac{1-p}{p^2} \, .$$
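As a worked sketch of where these formulas come from, assume $X$ has probability mass function $\mathbb P(X = k) = (1-p)^{k-1} p$ for $k = 1, 2, \dots$, and use the standard series identities $\sum_{k \geq 0} x^k = 1/(1-x)$, $\sum_{k \geq 1} k x^{k-1} = 1/(1-x)^2$ and $\sum_{k \geq 2} k(k-1) x^{k-2} = 2/(1-x)^3$ with $x = 1-p$. The intermediate quantity $\mathbb E[X(X-1)]$ is introduced here for convenience.

```latex
\begin{aligned}
\mathbb P(X > k) &= \sum_{j=k+1}^{\infty} (1-p)^{j-1} p
  = (1-p)^k \, p \sum_{i=0}^{\infty} (1-p)^i = (1-p)^k , \\
\mathbb E[X] &= p \sum_{k=1}^{\infty} k (1-p)^{k-1} = \frac{p}{p^2} = \frac{1}{p} , \\
\mathbb E[X(X-1)] &= p(1-p) \sum_{k=2}^{\infty} k(k-1) (1-p)^{k-2}
  = \frac{2p(1-p)}{p^3} = \frac{2(1-p)}{p^2} , \\
\mathbb V(X) &= \mathbb E[X(X-1)] + \mathbb E[X] - \mathbb E[X]^2
  = \frac{2(1-p)}{p^2} + \frac{1}{p} - \frac{1}{p^2} = \frac{1-p}{p^2} .
\end{aligned}
```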

If we throw a coin and get $k$ tails in a row, and we ask how long we should expect to wait until we next get a heads, then (even though it might feel like we are now due a heads) the answer is the same as the time we would have expected when we first started throwing the coin. This is a key property of the geometric distribution, and it is called the memoryless property.

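Lemma 5. [Memoryless Property] If $X \sim \text{Geom}(p)$ then, conditional on $X > k$, the distribution of $X - k$ is geometrically distributed with parameter $p$. In other words, for $j = 1, 2, \dots$,

$$\mathbb P( X - k = j \mid X > k ) = \mathbb P( X = j ) \,.$$

Proof. Using $\mathbb P(X > k) = (1-p)^k$,

$$\mathbb P( X - k = j \mid X > k ) = \frac{\mathbb P( X = k + j )}{\mathbb P( X > k )} = \frac{(1-p)^{k+j-1} p}{(1-p)^k} = (1-p)^{j-1} p = \mathbb P( X = j ) \,.$$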
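The memoryless property is easy to check numerically. The sketch below assumes the geometric distribution on $\{1, 2, \dots\}$ with mass function $(1-p)^{k-1} p$ and tail $\mathbb P(X > k) = (1-p)^k$; the function names and the values $p = 0.2$, $k = 7$ are illustrative assumptions. It computes the conditional probability $\mathbb P(X - k = j \mid X > k)$ as a ratio and compares it with $\mathbb P(X = j)$.

```python
def geom_pmf(k: int, p: float) -> float:
    """P(X = k): k - 1 failures followed by one success (zero for k < 1)."""
    return (1 - p) ** (k - 1) * p if k >= 1 else 0.0


def geom_tail(k: int, p: float) -> float:
    """P(X > k): the first k trials all fail."""
    return (1 - p) ** k


def conditional_pmf(j: int, k: int, p: float) -> float:
    """P(X - k = j | X > k) = P(X = k + j) / P(X > k)."""
    return geom_pmf(k + j, p) / geom_tail(k, p)


# Illustrative values (assumed for this example).
p, k = 0.2, 7
for j in range(1, 6):
    print(j, conditional_pmf(j, k, p), geom_pmf(j, p))  # the two columns should agree
```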

Example [Waiting for a bus] At a bus stop, the probability that a bus arrives in any given minute is $p$, independently from one minute to the next.

- What is the expected gap in time between any two buses?

- You arrive at the bus stop and there is no bus there. What is the expected gap between the last time a bus arrived and the next bus to arrive?

Answer. 1. The time from one bus to the next is geometric with parameter $p$, so the expected wait is $1/p$.

2. Given that you arrive at a time with no bus, the time back to the last bus to arrive is geometrically distributed with parameter $p$, and so is the time until the next bus to arrive. The time between these two bus arrivals is thus the sum of these two geometric random variables, and so the expected time is $1/p + 1/p = 2/p$.

This is sometimes called the waiting time paradox. When we turn up at the bus stop and find no bus, the expected gap between the buses on either side of our arrival is twice the mean time between buses. This is because, when we turn up and there is no bus there, we are more likely to have chosen a time inside a bigger gap between buses.
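The "bigger gaps are more likely to be chosen" explanation can be made concrete with a short calculation. The sketch below is one way to look at it, under assumptions that are not part of the example itself: gaps between buses are geometric with a per-minute arrival probability written `p`, a gap of $g$ minutes contains $g - 1$ bus-free minutes, and the arrival time is a uniformly chosen bus-free minute. A gap of length $g$ is then picked with probability proportional to $(g-1)\,\mathbb P(G = g)$. The function names, the truncation point and the value $p = 0.1$ are illustrative.

```python
def geom_pmf(g: int, p: float) -> float:
    """P(G = g) for the gap G between consecutive buses, g = 1, 2, ..."""
    return (1 - p) ** (g - 1) * p


def expected_gap_seen_on_arrival(p: float, max_gap: int = 2000) -> float:
    """Expected length of the gap containing a uniformly chosen bus-free minute.

    A gap of g minutes holds g - 1 bus-free minutes, so it is selected with
    probability proportional to (g - 1) * P(G = g). The sum is truncated at
    max_gap, which is harmless when (1 - p) ** max_gap is negligible.
    """
    gaps = range(1, max_gap + 1)
    weights = [(g - 1) * geom_pmf(g, p) for g in gaps]
    return sum(g * w for g, w in zip(gaps, weights)) / sum(weights)


# Illustrative value (assumed for this example).
p = 0.1
print(1 / p)                            # mean gap between consecutive buses
print(expected_gap_seen_on_arrival(p))  # should be close to 2 / p
```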

Poisson Distribution.

The Poisson distribution arises when we count the number of successes of an unlikely event over a large population. This occurs in all manner of settings, from nuclear decay, to insurance, to calls over a telephone line.

We present a definition first and then we will motivate the Poisson distribution.

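Definition [Poisson distribution] For a parameter $\lambda > 0$, the Poisson distribution has probability mass function

$$\mathbb P(X = k) = \frac{\lambda^k e^{-\lambda}}{k!}$$

for $k = 0, 1, 2, \dots$, and we write $X \sim \text{Po}(\lambda)$.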

Motivation for Poisson Distribution. If we take a Binomial distribution where the number of trials $n$ is large but the probability of success $p$ in each trial is small, specifically $p = \lambda / n$, then the Binomial distribution is well approximated by a Poisson distribution with parameter $\lambda$.

This is the reason the Poisson distribution is a reasonable distribution to represent phenomena like nuclear decay. In nuclear decay, there are a large number of atoms in a radioactive substance and, in any given time interval, there is a very small probability of any one of these atoms undergoing nuclear decay and then emitting a particle (e.g. a gamma-ray). For this reason the distribution of the number of observed gamma-rays over a time interval is well approximated by a Poisson distribution.

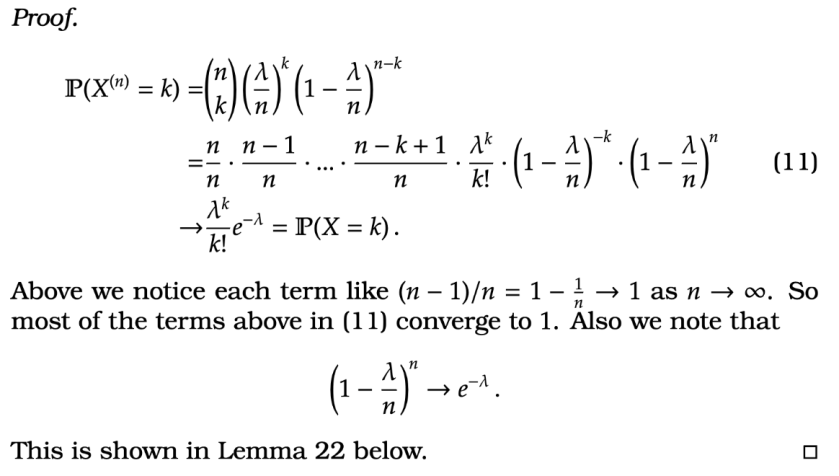

The following theorem sets out how the Poisson distribution approximates the Binomial distribution (again, students primarily interested in assessment can skip this argument).

Theorem 1 [Binomial to Poisson Limit] Consider a sequence of Binomial random variables $X^{(n)} \sim \text{Bin}(n, \lambda/n)$ for $n \in \mathbb N$ with $n \geq \lambda$, and let $X \sim \text{Po}(\lambda)$. Then

$$\mathbb P(X^{(n)} = k ) \xrightarrow[n \rightarrow \infty]{} \mathbb P(X =k) \,.$$

That is, as $n$ gets large, the probability of $X^{(n)} = k$ approaches the probability that $X = k$, for each $k \in \{0, 1, 2, \dots\}$.
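The limit in Theorem 1 can be observed numerically. The sketch below compares the probability mass function of $\text{Bin}(n, \lambda/n)$ with that of the Poisson distribution with parameter $\lambda$ over the first few values of $k$, for increasing $n$. The function names, the value $\lambda = 2$ and the range of $k$ are assumptions chosen for illustration, and the code only shows the gap shrinking; it says nothing rigorous about the rate.

```python
from math import comb, exp, factorial


def binom_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ Bin(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for a Poisson random variable with parameter lam."""
    return lam**k * exp(-lam) / factorial(k)


def largest_difference(n: int, lam: float, k_max: int = 10) -> float:
    """Largest gap between the Bin(n, lam / n) and Poisson pmfs over k = 0..k_max."""
    return max(
        abs(binom_pmf(k, n, lam / n) - poisson_pmf(k, lam))
        for k in range(k_max + 1)
    )


# Illustrative value (assumed for this example).
lam = 2.0
for n in (10, 100, 1000, 10000):
    print(n, largest_difference(n, lam))  # the gap should shrink as n grows
```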

Now for some more standard facts about the Poisson distribution.

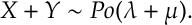

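Lemma 6 [Poisson Summation Property] If $X \sim \text{Po}(\lambda)$, $Y \sim \text{Po}(\mu)$, and $X$ and $Y$ are independent then

$$X + Y \sim \text{Po}(\lambda + \mu) \,.$$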

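Lemma 7 [Poisson Thinning Property] If $X \sim \text{Po}(\lambda)$ and, independent of $X$, we let $Y_1, Y_2, \dots$ be independent Bernoulli random variables with parameter $p$ then

$$\sum_{i=1}^{X} Y_i \sim \text{Po}(\lambda p) \,.$$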

In Lemma 6, we can begin to see how we can think of a Poisson distribution as part of a process that evolves in time. For instance, if the number of calls on a set of telephone lines in each minute is Poisson distributed with mean $\lambda$, independently from minute to minute, then the number of calls per hour is Poisson with mean $60\lambda$.

In Lemma 7, we can see that if we exclude points according to independent Bernoulli random variables then the resulting random variable is still Poisson. This is useful, for instance, in insurance. Here the number of claims an insurance company receives in a given day might be Poisson with mean $\lambda$. The company might split the claims into big and small claims (say on average half the claims are big and half small). Since there is some fixed probability that each claim is, say, big, the resulting number of big claims is Poisson with mean $\lambda/2$. This is useful for an insurance company as they can divide up, reinsure or resell some of their risk.

Both lemmas can be proved directly by summing over the probability mass functions, but this is a somewhat messy calculation. Intuitively, the above lemmas hold because the equivalent results, Lemma 2 and Lemma 3, hold for Binomial random variables, so both properties persist when we take the limit to a Poisson random variable (as in Theorem 1). The cleanest proof (using moment generating functions) is beyond the scope of this course, so we omit the proof for now.
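Although the proofs are omitted, the summation and thinning properties can be checked numerically by doing the "messy" sums on a computer. The sketch below assumes the Poisson mass function $\lambda^k e^{-\lambda}/k!$; the function names, the truncation point `n_max` and the parameter values are illustrative assumptions. The first check convolves two Poisson mass functions; the second conditions on the Poisson count and keeps each point independently with probability `p`.

```python
from math import comb, exp, factorial


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for a Poisson random variable with parameter lam."""
    return lam**k * exp(-lam) / factorial(k)


def sum_pmf(k: int, lam: float, mu: float) -> float:
    """P(X + Y = k) for independent Poisson X (mean lam) and Y (mean mu), by convolution."""
    return sum(poisson_pmf(i, lam) * poisson_pmf(k - i, mu) for i in range(k + 1))


def thinned_pmf(j: int, lam: float, p: float, n_max: int = 100) -> float:
    """P(j points kept) when a Poisson(lam) number of points are each kept with probability p.

    Conditions on the total count n and truncates the sum at n_max, which is
    harmless when lam is small compared with n_max.
    """
    return sum(
        poisson_pmf(n, lam) * comb(n, j) * p**j * (1 - p) ** (n - j)
        for n in range(j, n_max + 1)
    )


# Illustrative values (assumed for this example).
for k in range(5):
    print(k, sum_pmf(k, 1.5, 2.5), poisson_pmf(k, 4.0))      # summation property
    print(k, thinned_pmf(k, 3.0, 0.5), poisson_pmf(k, 1.5))  # thinning property
```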
