Markov decision processes are essentially the randomized equivalent of a dynamic program.

A Random Example

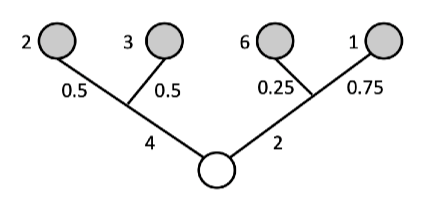

Let’s first consider how to randomize the tree example introduced.

Below is a tree with a root node and four leaf nodes colored grey. At the root node you choose to go left or right. This incurs costs and

, respectively. Further, after making this decision there is a probability for reaching a leaf node. Namely, after going left the probabilities are

&

, and for turning right, the probabilities are

&

. For each leaf node there is a cost, namely,

and

.

Given you only know the probabilities (and not what happens when you choose left or right), you’d want to take the decision with lowest expected cost. The expected cost for left is and for right is

. So go right.

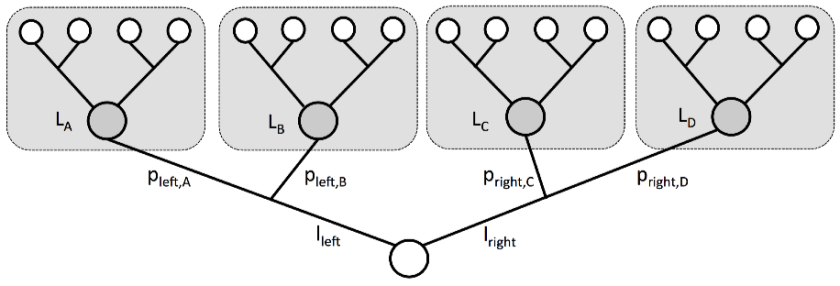

Below we now replace the numbers above with symbols. At the root node you can choose the action to go left or right. These decisions incur costs of $l_{\text{left}}$ and $l_{\text{right}}$, respectively. After choosing left, you will move to state $A$ with probability $p_{\text{left},A}$ or to state $B$ with probability $p_{\text{left},B}$; similarly, after choosing right, each reachable leaf state $x$ is reached with probability $p_{\text{right},x}$. After reaching a leaf node $x$, the total expected cost thereafter is $L_x$.

Show that the optimal expected cost from the root node, $L_R$, satisfies

![L_R = \min_{a\in \{ \text{left}, \text{right}\} } \left\{ l_a + {\mathbb E}_a \left[ L_{X_a}\right]\right\}.](https://appliedprobability.blog/wp-content/uploads/2019/01/4168c92caa0243f55bbfac54e17a3b1b.png?w=840)

where here the random variable $X_a$ denotes the leaf state reached after action $a$ is taken. The cost from choosing “left” is:

![l_{\text{left}} + p_{\text{left},A} L_{A} + p_{\text{left},B} L_{B} = l_{\text{left}} + \mathbb E_{\text{left}} [L_{\text{left}}]](https://appliedprobability.blog/wp-content/uploads/2019/01/6454937cdc892384bee3e02133afa5a3.png?w=840)

and the cost for choosing “right” is:

![l_{\text{right}} + p_{\text{right},A} L_{A} + p_{\text{right},B} L_{B} = l_{\text{right}} + \mathbb E_{\text{right}} [L_{\text{right}}].](https://appliedprobability.blog/wp-content/uploads/2019/01/9d757f1bc4c4ea49d5b0027f302aa4c5.png?w=840)

The optimal cost is the minimum of these two:

![L_R = \min_{a\in \{ \text{left}, \text{right}\} } \left\{ l_a + {\mathbb E}_a \left[ L_{X_a}\right]\right\}.](https://appliedprobability.blog/wp-content/uploads/2019/01/4168c92caa0243f55bbfac54e17a3b1b.png?w=840)

where here the random variable $X_a$ denotes the leaf state reached after action $a$ is taken. Notice how we abstracted away the future behaviour after arriving at each leaf state $x$ into a single cost $L_x$ for that state. We can then propagate these costs back to get the cost $L_R$ at the root state. That is, we can essentially apply the same principle as dynamic programming here.
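The propagation step can be sketched in a few lines of Python. This is only an illustration: the function name `root_cost`, the leaf labels, and all the numbers are hypothetical and are not the values from the figures.

```python
def root_cost(action_cost, transition_prob, leaf_cost):
    """Return (optimal expected cost, best action) at the root node.

    For each action a this computes l_a + E_a[L_{X_a}] and takes the minimum.
    """
    expected = {
        a: action_cost[a]
        + sum(p * leaf_cost[x] for x, p in transition_prob[a].items())
        for a in action_cost
    }
    best = min(expected, key=expected.get)
    return expected[best], best


# Hypothetical inputs, chosen only to make the arithmetic easy to follow.
action_cost = {"left": 1.0, "right": 2.0}
transition_prob = {
    "left": {"A": 0.5, "B": 0.5},
    "right": {"C": 0.25, "D": 0.75},
}
leaf_cost = {"A": 4.0, "B": 2.0, "C": 0.0, "D": 4.0}

cost, action = root_cost(action_cost, transition_prob, leaf_cost)
print(action, cost)
```

With these made-up numbers, left costs 1 + 0.5·4 + 0.5·2 = 4 and right costs 2 + 0.25·0 + 0.75·4 = 5, so the sketch picks left.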

Definitions

A Markov Decision Process (MDP) is a Dynamic Program where the state evolves in a random (Markovian) way.

Def [Markov Decision Process] Like with a dynamic program, we consider discrete times $t = 0, 1, \dots, T$, states $x_t$, actions $a_t \in \mathcal{A}_t$ and rewards $r_t(x_t, a_t)$. However, the plant equation and definition of a policy are slightly different.

Like with a Markov chain, the state evolves as a random function of the current state and action: $X_{t+1} = F_t(X_t, a_t; U_t)$, where $(U_t)_{t \geq 0}$ are IIDRVs uniform on $[0,1]$. This is called the Plant Equation.
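To make the plant equation concrete, here is a minimal Python sketch of one way a uniform random variable can drive the state: the draw is pushed through the cumulative transition probabilities. It assumes a finite state space and a time-homogeneous kernel; the names `plant` and `kernel` and the two-state example are hypothetical, not part of the definition above.

```python
import random


def plant(x, a, u, kernel):
    """Plant equation f(x, a; u): map a uniform draw u in [0, 1) to the next state.

    kernel[(x, a)] is a list of (next_state, probability) pairs summing to 1.
    """
    cumulative = 0.0
    for next_state, prob in kernel[(x, a)]:
        cumulative += prob
        if u < cumulative:
            return next_state
    # Guard against floating-point round-off in the cumulative sum.
    return kernel[(x, a)][-1][0]


# Hypothetical two-state kernel, for illustration only.
kernel = {
    (0, "stay"): [(0, 0.9), (1, 0.1)],
    (0, "move"): [(0, 0.2), (1, 0.8)],
    (1, "stay"): [(1, 1.0)],
    (1, "move"): [(0, 0.5), (1, 0.5)],
}

rng = random.Random(0)  # seeded so the run is reproducible
x = 0
path = [x]
for t in range(5):
    a = "move"  # a fixed action, standing in for a policy
    x = plant(x, a, rng.random(), kernel)
    path.append(x)
print(path)
```

Once the uniform draw is fixed, the next state is a deterministic function of the current state and action, which is the point of writing the dynamics this way.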

A policy $\Pi$ chooses an action $\pi_t$ at each time $t$ as a function of past states $x_0, \dots, x_t$ and past actions $\pi_0, \dots, \pi_{t-1}$. We let $\mathcal{P}$ be the set of policies. A policy, a plant equation, and the resulting sequence of states and rewards describe a Markov Decision Process.

As noted in the equivalence above, we will usually suppress dependence on $U_t$. Also, we will use the notation

![\mathbb{E}_{x_t, a_t} [G({X}_{t+1})] = \mathbb{E} [G(F_t(x_t,a_t;U))] \quad\text{and} \quad \mathbb{E}_{x, a} [G(\hat{X})] = \mathbb E [ G(f(x,a;U))]](https://appliedprobability.blog/wp-content/uploads/2019/01/d934c978a858260185a99cc1654fe15d.png?w=840)

where here and hereafter we use $\hat{X}$ to denote the next state (after taking action $a$ in state $x$). Notice in both equalities above, the term on the right depends on only one random variable, $U$.

The objective is to find a policy that optimizes the expected reward.

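Def [Markov Decision Problem] Given initial state $x_0$, a Markov Decision Problem is the following optimization

$$V(x_0) = \max_{\Pi \in \mathcal{P}} \mathbb{E}_{x_0} \left[ \sum_{t=0}^{T-1} r_t(X_t, \pi_t) + r_T(X_T) \right].$$

Further, let $V_t(x_t)$ be the optimal objective when the summation is started from time $t$ and state $x_t$, rather than from time $0$ and state $x_0$; the objective of a fixed policy from time $t$ is defined in the same way. We often call $V$ the value function of the MDP.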

The next result shows that the Bellman equation follows essentially as before, but now we have to take account of the expected value of the next state.

Def [Bellman Equation] Setting $V_T(x) = r_T(x)$, for $t = T-1, \dots, 0$,

![\tag{Bell eq.} V_t(x_t) = \max_{a_t\in\mathcal{A}_t} \left\{ r_t(x_t,a_t) + \mathbb{E}_{x_t,a_t} \left[ V_{t+1}(X_{t+1})\right] \right\}.](https://appliedprobability.blog/wp-content/uploads/2019/01/e6657066819dbba57be5977dfb250aec.png?w=840)

The above equation is Bellman’s equation for a Markov Decision Process.

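Let $\mathcal{P}_t$ be the set of policies that can be implemented from time $t$ to $T$. Notice it is the product of the actions at time $t$ and the set of policies from time $t+1$ onward. That is, each $\Pi_t \in \mathcal{P}_t$ can be written as a pair $(\pi_t, \Pi)$ with $\Pi \in \mathcal{P}_{t+1}$.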

![\begin{aligned} V_t(x_t) &= \max_{\Pi_t \in \mathcal{P}_t} \mathbb{E}_{x_t \pi_t} \left[ \sum_{t=0}^{T-1} r_t(X_t,\pi_t) + r_T(X_T) \right]\\ &= \max_{\pi_t } \max_{\Pi \in \mathcal{P}_{t+1}} \Bigg\{ r_t(x_t,\pi_t) + \mathbb{E}_{x_t \pi_t} \Bigg[ \mathbb{E}_{X_{t+1} \pi_{t+1}} \Bigg[ \sum_{\tau=t+1}^{T-1} r_{\tau}(X_\tau,\pi_\tau) + r_T(X_T) \Bigg]\Bigg]\Bigg\}\\ &= \max_{a\in\mathcal{A} } \Bigg\{ r_t(x_t,a) + \mathbb{E}_{x_t\, a} \Bigg[ \underbrace{ \max_{\Pi \in \mathcal{P}_{t+1}} \mathbb{E}_{X_{t+1} \pi_{t+1}} \Bigg[ \sum_{\tau=t+1}^{T-1} r_{\tau}(X_\tau,\pi_\tau) + r_T(X_T) \Bigg] }_{ =V_{t+1}(x_{t+1}) } \Bigg]\Bigg\} \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/530be491aaf31177f64f9487ae36a5e2.png?w=840)

2nd equality uses the structure of $\mathcal{P}_t$, takes the $r_t$ term out and then takes conditional expectations. 3rd equality takes the supremum over $\mathcal{P}_{t+1}$, which does not depend on $\pi_t$, inside the expectation and notes that the supremum over $\pi_t$ is attained at a fixed action $a \in \mathcal{A}$ (i.e. the past information did not help us).
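The Bellman recursion translates directly into backward induction. Below is a rough Python sketch under simplifying assumptions that go beyond the text: finite state and action sets, the same action set at every time, and time-homogeneous transition probabilities given explicitly rather than through a plant equation. The function name `backward_induction` and the toy two-state problem are hypothetical.

```python
def backward_induction(T, states, actions, reward, terminal_reward, trans):
    """Solve V_t(x) = max_a { r_t(x,a) + sum_y P(y|x,a) V_{t+1}(y) } for t = T-1,...,0.

    reward(t, x, a) -> float, terminal_reward(x) -> float,
    trans(x, a) -> dict mapping each next state y to its probability.
    Returns (V, policy) with V[t][x] the value and policy[t][x] a maximizing action.
    """
    V = [dict() for _ in range(T + 1)]
    policy = [dict() for _ in range(T)]
    for x in states:
        V[T][x] = terminal_reward(x)
    for t in range(T - 1, -1, -1):
        for x in states:
            best_value, best_action = None, None
            for a in actions:
                q = reward(t, x, a) + sum(
                    p * V[t + 1][y] for y, p in trans(x, a).items()
                )
                if best_value is None or q > best_value:
                    best_value, best_action = q, a
            V[t][x] = best_value
            policy[t][x] = best_action
    return V, policy


# Hypothetical toy problem: state 1 pays 1 per step, moving costs 0.25.
states = [0, 1]
actions = ["stay", "move"]


def reward(t, x, a):
    return float(x) - (0.25 if a == "move" else 0.0)


def terminal_reward(x):
    return float(x)


def trans(x, a):
    return {x: 1.0} if a == "stay" else {0: 0.5, 1: 0.5}


V, policy = backward_induction(2, states, actions, reward, terminal_reward, trans)
print(V[0], policy[0])
```

In this toy problem the computed policy depends only on the current time and state, in line with the remark that past information does not help.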

Ex. You need to sell a car. At every time , you set a price

and a customer then views the car. The probability that the customer buys a car at price

is

. If the car isn’t sold by time

then it is sold for fixed price

,

. Maximize the reward from selling the car and find the recursion for the optimal reward when

.

Ex. You own a call option with strike price . Here you can buy a share at price

making profit

where

is the price of the share at time

. The option must be exercised by time

. The price of stock

satisfies

for

for IIDRV with finite expectation. Show that there exists a decreasing sequence

such that it is optimal to exercise whenever

occurs.

Ex. You own an expensive fish. Each day you are offered a price for the fish according to a distribution density . You may accept or reject this offer. With probability

the fish dies that day. Find the policy that maximizes the profit from selling the fish.

Ex. Consider an MDP where rewards are now random, i.e. after we have specified the state and action the reward is still an independent random variable. Argue that this is the same as an MDP with non-random rewards given by

![\bar{r}(x,a) = \mathbb E [r(x,a)]](https://appliedprobability.blog/wp-content/uploads/2019/01/90bf4e94483e1b8a3a0dce5b8fa486aa.png?w=840)

Ex. Indiana Jones is trapped in a room in a temple. There are passages that he can try and escape from. If he attempts to escape from passage

then either: he escapes with probability

; he dies with probability

; or with probability

the passage is a dead end and he returns to the room from which he started. Determine the order in which Indiana Jones must try the passages in order to maximize his probability of escape.

Ex. You invest in properties. The total value of these properties is in year

.

Each year , you gain rent of

and you choose to consume a proportion

of this rent. The remaining proportion is reinvested in buying new property.

Further you pay mortgage payments of which are deducted from your consumed wealth. Here

.

Your objective is to maximize the wealth consumed over years.

Briefly explaining why we can express this problem as a finite time dynamic program with

prove that if for some constant

then
