We quickly recap the recurrence properties of Markov chains. The aim is to give a short review not a full rigorous treatment.

Recurrence and Transience

For a Markov chain , we say that a state

is

Thus, a state is either visited infinitely often (recurrent) or finitely often (transient). If it is recurrent and a positive proportion of time is spent at

, then it is positive recurrent; otherwise, it is null recurrent.

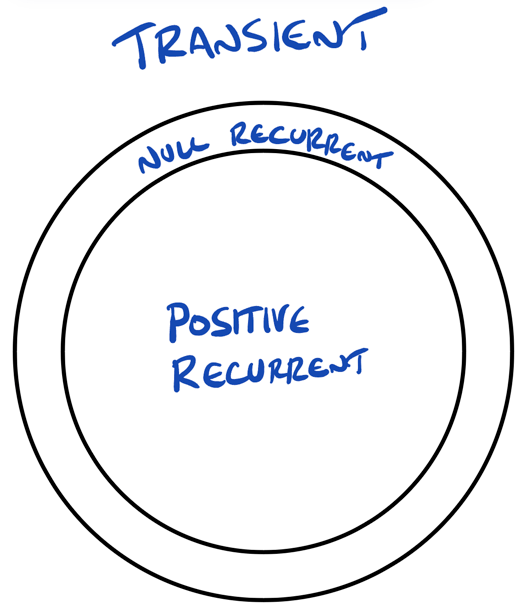

Figure: a Venn diagram of the definitions above.

In queueing systems, we typically want the empty state to be positive recurrent, while transience would be undesirable. Null recurrence can be viewed as a borderline case.

Hitting Times and Recurrence

We can characterize recurrence in terms of return times. Let

and define

Then we have the following characterizations.

Proposition. The state is

These quantities can be computed using the transition matrix .

For , define

and set ,

.

Theorem. For any , the function

satisfies

and satisfies

Proof (sketch).

This follows by conditioning on the first step and using the Markov property.

Communication and Irreducibility

Definition (Communicate). Two states and

communicate if

for some and

.

The recurrence properties of states are determined by communication.

Proposition. If two states communicate, then they are either both positive recurrent, both null recurrent, or both transient.

In many queueing systems, all states communicate with the empty state. Such chains are typically irreducible.

Definition (Irreducible). A Markov chain is irreducible if all states communicate.

For irreducible Markov chains, recurrence is a property of the entire chain rather than individual states.