We want to model queueing systems in continuous time. Continuous-time Markov chains are a natural generalization of discrete-time Markov chains. We focus on developing intuition for continuous-time chains as the natural continuous-time extension of discrete chains, similar to our discussion of Poisson processes.

Continuous vs discrete time models

Often, we want to calculate the stationary distribution of a model to summarize long-run behaviour. We saw that using detailed balance equations is convenient for this.

However, detailed balance does not generally hold for discrete-time models, since multiple events can occur simultaneously, complicating the balance equations. Continuous-time models separate these events and are therefore more convenient.

We will also assume that the time spent in each state is exponential. This is reasonable for arrival processes (which are often modeled as Poisson), though it may be less realistic for service times. Nonetheless, this assumption leads to tractable models and useful insights.

Intuition: from discrete to continuous time

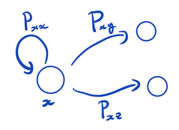

Figure: a discrete-time Markov chain.

The chain jumps to itself with probability and to another state

with probability

.

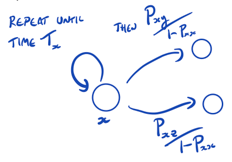

Figure: geometric waiting time interpretation.

That is, the process remains in state for a geometrically distributed number of steps, and then jumps to state

with probability

.

Now suppose we let approach 1 and rescale time appropriately. For small

, define

with and

for

.

To ensure probabilities sum to 1, we require

As , the holding time at state

converges from

to

while the jump probabilities remain fixed.

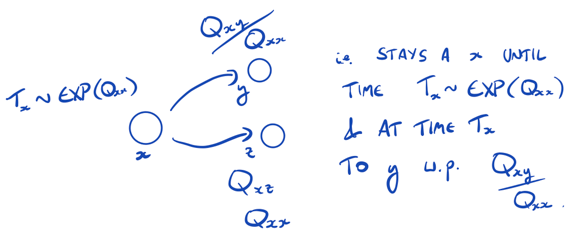

Figure: limiting continuous-time behaviour.

Thus, the process spends an exponential time with parameter in state

, and then jumps to state

with probability

.

A formal definition: continuous-time Markov chain

Definition (Q-matrix). A matrix is a Q-matrix if

for all

for all

Thus, off-diagonal entries are non-negative, diagonal entries are negative, and each row sums to zero. We write and

.

Definition (continuous-time Markov chain). A continuous-time Markov chain is a stochastic process defined by an initial distribution

and a Q-matrix

.

The embedded discrete-time chain has transition probabilities

The holding time in state is

The process is constructed via

Facts about continuous-time Markov chains

- The matrix exponential is

- The values

represent transition rates from

to

, and

is the rate of leaving state

.

- Definitions of recurrence, transience, irreducibility, etc., extend naturally (with sums replaced by integrals).