Multi-Level Monte-Carlo is an Monte-carlo method for calculating numerically accurate estimates when fine grained estimates are expensive, but cheap coarse-grained estimates can be used to supplement this. We considered the simulation of stochastic differential equations, which is the application first proposed, but we note that the approach applies in a variety of other settings.

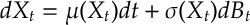

Aim. Suppose obeys the stochastic differential equation

and the aim is to estimate

at some time for some function

. (E.g. think of estimating the expected value of a put or call option – we could consider more exotic path dependent objects too.)

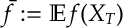

Monte-Carlo. In a “vanilla” Monte-Carlo approach we would estimate with

where ,

, are IID samples of

. Assuming that the variance is finite then the Central Limit Theorem will give a convergence rate to the mean of order

. Or expressed slightly differently to get a Root-Mean-Square (RMS) estimate of order

, i.e.

![\begin{aligned}

\mathbb V(\tilde f_N)^{\frac{1}{2}} := \mathbb E [ (\tilde f_N- \bar f)^2]^{1/2} = O(\epsilon)\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/c60fd08fbe05dc999404a60ec83eb879.png)

we need about samples.

Numerical and simulation error. However, the assumption here is that we can simulate exactly, which is not in general possible. We need to numerically simulate the SDE (which introduces numerical errors). Suppose now that

,

are now estimates of

(which may not have the same distribution as

and, also, may not be independent). In that case, the usual bias-variance decomposition is

![\begin{aligned}

\mathbb E [ (\tilde f_N - \bar f)^2] =

\mathbb V(\tilde f_N)

+

(\mathbb E [\tilde f_N ]- \bar f )^2 \,.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/ac9a3fce9dac1183b14c31bd25d21ffc.png)

As before the variance goes down at under Monte-Carlo simulation but we also need to care about the bias, specifically, we also need to control how good is our approximation is to

.

Numerical Approximation of SDEs. The simplest scheme for simulating an SDE is the Euler-Maruyama scheme: Here  where

where and

. This can be improved by the following scheme due to Milstein:

![\begin{aligned}

\hat X_{t_{n+1}} = \hat X_{t_n} + \mu (\hat X_{t_n} ) h + \sigma (\hat X_{t_n}) \Delta W_{n}

+\frac{1}{2} \sigma'(\hat X_{t_n}) \sigma(\hat X_{t_n}) [ (\Delta W_{n})^2 - h ]

\tag{Milstein}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/e5870a32f094361565cb60d334d7296c.png) Is can be shown that the error of the Euler-Maruyama scheme can be found to be

Is can be shown that the error of the Euler-Maruyama scheme can be found to be

![\begin{aligned}

\mathbb E [ f(\hat X_{T})] - \mathbb E[ f(X_T)] = O(h)

\qquad

\text{and}

\qquad

\mathbb E \Big[ \sup_{t\in [0,T]} (\hat X_t - X_t)^2 \Big]^{\frac{1}{2}}= O(h^{\frac{1}{2}})\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/f60be2b23b4a6e097fd4bbf0aab10261.png)

while for Milstein’s method

![\begin{aligned}

\mathbb E [ f(\hat X_{T})] - \mathbb E[ f(X_T)] = O(h)

\qquad

\text{and}

\qquad

\mathbb E \Big[ \sup_{t\in [0,T]} (\hat X_t - X_t)^2 \Big]^{\frac{1}{2}}= O(h) \,.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/822e2592b7bb91eeae1f3919fee9363d.png)

Bias and Variance again. We can now see that the bias and variance from above is

![\begin{aligned}

\mathbb E [ (\tilde f_N - \bar f)^2] =

\mathbb V(\tilde f_N)

+

(\mathbb E \tilde f_N - \bar f )^2

=

O\left( \frac{1}{N} + h^2 \right)\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/c3a345ce80c302a764d06e1425a0fc95.png)

Thus to get this error to be less than [so that RMS is less than

] we require

samples with time steps of size

. Thus the computational cost is

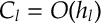

.

The aim of Multi-Level Monte-Carlo (MLMC) is to reduce the computational cost required to get the RMS below . While there are methods for reducing the number of samples

[such as quasi-monte carlo], MLMC aims to reduce the cost of generating paths by performing coarse grained simulations combined with a small number of fine grained simulations.

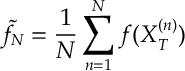

Simulating Brownian Motion at different levels. The easiest way to approximate a brownian motion is to take normal distributions and then take

Here for “level” we can use this to construct a Brownian motion we an approximation of order

. Notice that since

also get for free a Brownian motion at level

, by taking

We can also go the other way from course grained to fine grained, since if and

then

. Although the former method is perhaps simpler than this latter approach.

Numerical Approximation of SDEs with different levels. Now suppose that we wish to compute under the Brownian motion construction above. Since both estimates are calculated under the same Brownian motion this has some impact on the variance. Specifically

![\begin{aligned}

V_l= \mathbb V [ f(X_T^{(l)}) - f(X_T^{(l-1)})]

&=

\mathbb V [ f(X_T^{(l)}) -f(X_T) - f(X_T^{(l-1)}) + f(X_T)]

\\

& \leq

\left(

\mathbb V [f(X_T^{(l)}) -f(X_T) ]^2

+

\mathbb V [ f(X_T^{(l-1)}) -f(X_T) ]^2

\right)^{\frac{1}{2}}

\\

&

=

\begin{cases}

O(h^{(l)}) & \text{Euler-Maruyama}\\

O((h^{(l)})^2) & \text{Milstein}

\end{cases}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/6b5c9d20bd57ab132cee7d896217734e.png)

(Here we assume that is constant and thus are of the same order.) The cost of a calculating

is

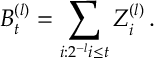

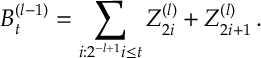

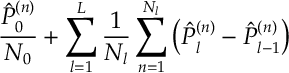

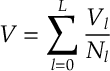

Multi-Level Estimation. Notice that we can form an estimator of with

where we take independent samples with . Here

is the number of samples that we perform at level

. Notice here we apply a differing number of samples to each term in the sum. Notice the estimator is an unbiased estimate of level

because

![\begin{aligned}

\mathbb E [\hat P_L] =\mathbb E [\hat P_0] + \sum_{l=1}^L \mathbb E [ \hat P_l - \hat P_{l-1} ] \, . \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/e6e2d7294603cc744aa57d2e5256dd09.png)

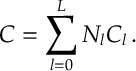

The total cost of the estimation is

The total variance of estimation is

(Here we work on the basis that there is an independent mechanism generating the samples from level to level

as discussed when simulating Brownian motion above.)

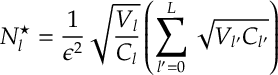

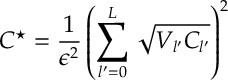

The following result calculates the optimal number of samples to perform at each level.

The cost minimization

has optimal solution

and the optimal cost is

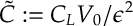

The proof is a straight-forward convex optimization argument. It is interesting that there is a non-trival solution reached. It should be noted that without applying a multi-level approach (i.e. doing just one level) the variance of a single sample path is typically and the cost is a single path is

. I.e. we don’t get any benefits of coupling across levels on the variance of the estimation and we still have to pay the price of each high accuracy simulation. This leads to an overall cost of roughly

If we think of being increasing multiplicatively in

and

as decreasing multiplicatively in

, then the benefit of using a multilevel approach is determined by

In both cases we see a multiplicative reduction in cost for the same variance estimate of the SDE. This bound can be improved by splitting into cases more careful. But, since is roughly

we see that the above bound capture the main charateristics. We refer the reader to Giles’ original paper for more detail.

References.

Multi-Level Monte Carlo is first presented in a very readable paper by Giles ’08. It has since found a wealth of applications. Giles maintains and excellent summary of the area on his webpage [link].

M.B. Giles. ‘Multi-level Monte Carlo path simulation’. Operations Research, 56(3):607-617, 2008.