The exponential family of distributions is a particularly tractable, yet broad, class of probability distributions. It is tractable because of a particularly nice [Fenchel] duality relationship between natural parameters and moment parameters. Moment parameters can be estimated by taking the empirical mean of the sufficient statistics, and the duality relationship can then recover an estimate of the distribution's natural parameters.

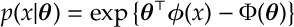

Def [Exponential Family of Distributions] An exponential family is probability distributions of the form

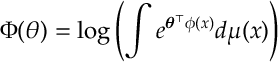

where

for . Here

is some measure [e.g. counting measure or the Lebesgue measure]. The functions

,

, are called sufficient statistics. The special case is where

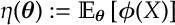

are the natural exponential family of distributions. Finally, we define the moment parameters by

for

for and we let

be the inverse of

.
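A minimal numerical sketch of the definition, assuming a discrete base measure $\mu$ given as weights on a finite support (the helpers `log_partition` and `moments` are hypothetical names, not from any library). It uses the Poisson family, where $T(x) = x$, $\mu(\{x\}) = 1/x!$ and $A(\theta) = e^\theta$, so the moment parameter is $\eta(\theta) = e^\theta$. Truncating the support is an approximation that is negligible for moderate $\theta$.

```python
import math
import numpy as np

def log_partition(theta, support, log_mu, T):
    """A(theta) = log sum_x exp(theta . T(x)) mu({x}) for a discrete base measure mu.

    support: array of points x; log_mu: log of the weights mu({x});
    T: function mapping the support to an (n_points, d) array of sufficient statistics.
    """
    s = T(support) @ theta + log_mu            # log of exp(theta . T(x)) * mu({x})
    m = s.max()
    return m + math.log(np.exp(s - m).sum())   # log-sum-exp for numerical stability

def moments(theta, support, log_mu, T):
    """eta(theta) = E_theta[T(X)] under p_theta(x) = exp(theta . T(x) - A(theta))."""
    stats = T(support)
    logp = stats @ theta + log_mu - log_partition(theta, support, log_mu, T)
    return np.exp(logp) @ stats

def T_identity(x):
    """Sufficient statistic T(x) = x, returned as an (n, 1) array."""
    return x.reshape(-1, 1).astype(float)

# Poisson: counting measure weighted by 1/x!, support truncated at 200 (approximation).
support = np.arange(200)
log_mu = -np.array([math.lgamma(x + 1) for x in range(200)])
theta = np.array([math.log(3.0)])
print(log_partition(theta, support, log_mu, T_identity))  # approximately e^theta = 3
print(moments(theta, support, log_mu, T_identity))        # approximately [3.0], the Poisson mean
```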

A happy family! A large number of probability distributions (Normal, Poisson, Geometric, Binomial with a fixed number of trials, Gamma, Exponential, …) belong to the exponential family.
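Membership can be checked numerically for two of these cases. The sketch below writes the Bernoulli and Normal densities in the form $\exp(\theta \cdot T(x) - A(\theta))$ with respect to a base measure and compares them with the usual formulas. The parametrizations are the standard ones (Bernoulli: counting measure on $\{0,1\}$, $T(x) = x$, $\theta = \log\frac{p}{1-p}$; Normal: base measure $dx/\sqrt{2\pi}$, $T(x) = (x, x^2)$, $\theta = (m/s^2, -1/(2s^2))$); the function names are illustrative only.

```python
import math

def bernoulli_natural(x, p):
    """Bernoulli(p) pmf as exp(theta*x - A(theta)) with A(theta) = log(1 + e^theta)."""
    theta = math.log(p / (1 - p))
    A = math.log1p(math.exp(theta))
    return math.exp(theta * x - A)

def normal_natural(x, m, s):
    """Normal(m, s^2) density via T(x) = (x, x^2) and base measure dx / sqrt(2*pi)."""
    t1, t2 = m / s**2, -1.0 / (2 * s**2)
    A = -t1**2 / (4 * t2) - 0.5 * math.log(-2 * t2)   # log-partition in natural parameters
    return math.exp(t1 * x + t2 * x**2 - A) / math.sqrt(2 * math.pi)

def normal_pdf(x, m, s):
    """The usual Normal(m, s^2) density, for comparison."""
    return math.exp(-(x - m) ** 2 / (2 * s**2)) / (s * math.sqrt(2 * math.pi))

for x in (0, 1):
    print(bernoulli_natural(x, 0.3), 0.3 if x == 1 else 0.7)
print(normal_natural(1.2, 0.5, 2.0), normal_pdf(1.2, 0.5, 2.0))
```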

The really nice thing about exponential families is the relationship between the moments of the sufficient statistics and the natural parameters: there is a [Legendre-Fenchel] duality between them.

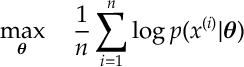

MLEs. A consequence of this is we can often calculate a maximum likelihood estimator (MLE). Suppose we know what the function . If we get data

for

and we assuming the data is IID from an exponential family with

unknown, then we can get the MLE

, i.e. we can solve

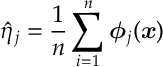

by

by

- Calculating

- and taking

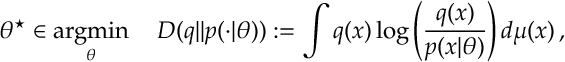
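The two steps can be sketched for the Normal family, where $T(x) = (x, x^2)$ and the inverse $\theta(\eta)$ has a closed form. This is an illustrative sketch with simulated data (the seed and true parameters are arbitrary); it checks that the fitted natural parameters reproduce the empirical moments, i.e. $\nabla A(\hat\theta) = \hat\eta$.

```python
import numpy as np

def normal_mle_natural(xs):
    """MLE of the Normal natural parameters by moment matching.

    Step 1: eta_hat = empirical mean of T(x) = (x, x^2).
    Step 2: theta_hat = theta(eta_hat), using the closed-form inverse for the Normal.
    """
    eta1, eta2 = xs.mean(), (xs**2).mean()
    var = eta2 - eta1**2                      # equals (1/n) * sum (x - mean)^2
    return np.array([eta1 / var, -1.0 / (2 * var)])

def grad_A(theta):
    """eta(theta) = grad A(theta) = (E[X], E[X^2]) for the Normal family."""
    t1, t2 = theta
    return np.array([-t1 / (2 * t2), t1**2 / (4 * t2**2) - 1 / (2 * t2)])

rng = np.random.default_rng(0)
xs = rng.normal(loc=1.0, scale=2.0, size=10_000)
theta_hat = normal_mle_natural(xs)
print(grad_A(theta_hat), [xs.mean(), (xs**2).mean()])   # the moments match
```

Converting back, $-\hat\theta_1/(2\hat\theta_2)$ is the sample mean and $-1/(2\hat\theta_2)$ is the (biased, divide-by-$n$) sample variance, the familiar Normal MLEs.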

Cross Entropy. We can generalize this MLE statement slightly as follows. Suppose that is some other probability distribution and we wish to minimize the relative entropy between

and

:

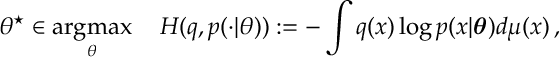

which we note is equivalent to maximizing the cross entropy

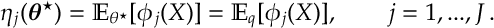

then is the parameter such that the moments match
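Moment matching can be checked by brute force. In this sketch $Q$ is an arbitrary, made-up distribution on $\{0, \dots, 5\}$ (deliberately not Poisson), and we fit the Poisson family by maximizing $\mathbb{E}_Q[\log p_\theta(X)]$ over a fine grid of $\theta$ values, without using the moment condition. The maximizer should agree with $\theta^* = \log \mathbb{E}_Q[X]$ up to the grid spacing.

```python
import math
import numpy as np

# Q: a hypothetical distribution on {0, ..., 5}; it is not a Poisson distribution.
support = np.arange(6)
q = np.array([0.1, 0.4, 0.1, 0.1, 0.2, 0.1])
log_fact = np.array([math.lgamma(x + 1) for x in range(6)])

def expected_loglik(theta):
    """E_Q[log p_theta(X)] for the Poisson family p_theta(x) = exp(theta*x - e^theta) / x!."""
    return q @ (theta * support - math.exp(theta) - log_fact)

grid = np.linspace(-2.0, 3.0, 50_001)                   # spacing 1e-4
best = grid[np.argmax([expected_loglik(t) for t in grid])]
eta_Q = q @ support                                     # E_Q[T(X)] with T(x) = x
print(best, math.log(eta_Q))    # agree up to the grid spacing
print(math.exp(best), eta_Q)    # fitted Poisson mean is close to E_Q[X]
```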

Some Results. Most of this can be verified through the following sequence of results, which we do not prove in detail here; for the most part they are an application of Legendre-Fenchel duality to the log-likelihood function.

Suppose that $X \sim P_\theta$, where $P_\theta$ belongs to an exponential family; then

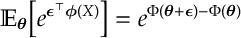

a) [MGF] The moment generating function of $T(X)$ is

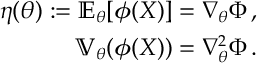
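Assuming the formula above is the standard identity $\mathbb{E}_\theta[e^{\lambda \cdot T(X)}] = e^{A(\theta + \lambda) - A(\theta)}$, it can be checked numerically for the Poisson family ($A(\theta) = e^\theta$, base measure $1/x!$). The sum is truncated at 150 terms, an approximation that is negligible here; the values of `theta` and `lam` are arbitrary.

```python
import math
import numpy as np

theta, lam = math.log(2.0), 0.3
xs = np.arange(150)
log_mu = -np.array([math.lgamma(x + 1) for x in range(150)])   # Poisson base measure 1/x!

def A(t):
    """Poisson log-partition function."""
    return math.exp(t)

log_p = theta * xs + log_mu - A(theta)              # log p_theta(x)
mgf_direct = np.sum(np.exp(lam * xs + log_p))       # E_theta[e^{lam X}] by direct summation
mgf_formula = math.exp(A(theta + lam) - A(theta))   # exp(A(theta + lam) - A(theta))
print(mgf_direct, mgf_formula)
```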

b) [Moments] $\mathbb{E}_\theta[T(X)] = \nabla A(\theta)$ and $\mathrm{Cov}_\theta(T(X)) = \nabla^2 A(\theta)$.
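For the Bernoulli family, $A(\theta) = \log(1 + e^\theta)$, so part b) says $A'(\theta)$ should equal the mean $p$ and $A''(\theta)$ the variance $p(1-p)$, where $p = 1/(1 + e^{-\theta})$. A quick sketch using central finite differences (the step `h` and the value of `theta` are arbitrary choices):

```python
import math

def A(t):
    """Bernoulli log-partition function log(1 + e^t)."""
    return math.log1p(math.exp(t))

theta, h = 0.7, 1e-4
p = 1 / (1 + math.exp(-theta))                                # Bernoulli mean at this theta

dA = (A(theta + h) - A(theta - h)) / (2 * h)                  # approximates A'(theta)
d2A = (A(theta + h) - 2 * A(theta) + A(theta - h)) / h**2     # approximates A''(theta)
print(dA, p)              # mean of T(X) = X
print(d2A, p * (1 - p))   # variance of T(X)
```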

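c) [Convex] $A(\theta)$ is strictly convex (assuming a minimal representation, i.e. no nontrivial linear combination of the $T_i$ is almost surely constant), and we consider its Legendre-Fenchel transform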

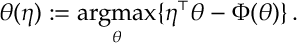

and define

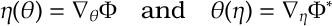
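Assuming the transform above is the usual $A^*(\eta) = \sup_\theta \{\theta \cdot \eta - A(\theta)\}$, it can be computed by brute force. For the Bernoulli family it has the well-known closed form $\eta \log \eta + (1-\eta)\log(1-\eta)$, the negative entropy. The grid search over $\theta \in [-20, 20]$ is only an illustration, not an efficient method.

```python
import math
import numpy as np

def A(t):
    """Bernoulli log-partition function, vectorised over an array of theta values."""
    return np.log1p(np.exp(t))

thetas = np.linspace(-20, 20, 400_001)   # grid spacing 1e-4

def A_star(eta):
    """Legendre-Fenchel transform sup_theta {theta*eta - A(theta)}, by grid search."""
    return np.max(thetas * eta - A(thetas))

for eta in (0.2, 0.5, 0.9):
    closed = eta * math.log(eta) + (1 - eta) * math.log(1 - eta)   # negative entropy
    print(eta, A_star(eta), closed)
```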

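d) [Duality of Moments] If we define $\theta(\eta) = \nabla A^*(\eta)$, then $\theta(\eta)$ is the inverse of $\eta(\theta) = \nabla A(\theta)$.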

Hence

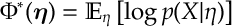

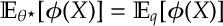
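For the Bernoulli family the duality is explicit: $\nabla A$ is the sigmoid function and, differentiating $A^*(\eta) = \eta\log\eta + (1-\eta)\log(1-\eta)$, $\nabla A^*$ is the logit function, so each undoes the other. The sketch below checks this, and also compares the closed-form logit against a finite-difference derivative of $A^*$.

```python
import math

def A_star(e):
    """Bernoulli Legendre-Fenchel transform (negative entropy)."""
    return e * math.log(e) + (1 - e) * math.log(1 - e)

def grad_A(t):
    """eta(theta) = A'(theta), the sigmoid function."""
    return 1 / (1 + math.exp(-t))

def grad_A_star(e):
    """theta(eta) = (A*)'(eta), the logit function."""
    return math.log(e / (1 - e))

h = 1e-6
for theta in (-1.5, 0.0, 2.0):
    eta = grad_A(theta)
    numeric = (A_star(eta + h) - A_star(eta - h)) / (2 * h)   # finite-difference (A*)'(eta)
    print(theta, grad_A_star(eta), numeric)                    # both recover theta
```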

e) [Relative Entropy] As discussed above, for any distribution $Q$ the expected log-likelihood $\mathbb{E}_Q[\log p_\theta(X)]$ (minus the cross entropy) is maximized by $\theta^*$ such that

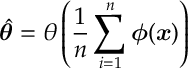

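f) [MLE] For data $X_k$, $k = 1, \dots, n$, the MLE $\hat\theta$ is given by $\hat\theta = \nabla A^*\big(\tfrac{1}{n}\sum_{k=1}^n T(X_k)\big)$.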
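When $\nabla A^*$ has no closed form, $\hat\theta$ can still be computed by solving $\nabla A(\theta) = \hat\eta$ numerically. The sketch below does this with Newton's method, using $A'(\theta) = $ mean and $A''(\theta) = $ variance from part b). The family here is a hypothetical one chosen for illustration: counting measure on $\{0, \dots, K\}$ with $T(x) = x$. The step halving (a damped Newton method) is a safeguard added for this sketch; it keeps the concave objective $\theta\hat\eta - A(\theta)$ from decreasing. The Binomial sample is arbitrary, so the fit is the best member of this family rather than the true model.

```python
import math
import numpy as np

K = 10
support = np.arange(K + 1)   # counting measure on {0, ..., K}, T(x) = x (illustrative family)

def A(theta):
    """Log-partition function, computed with log-sum-exp."""
    s = theta * support
    m = s.max()
    return m + math.log(np.exp(s - m).sum())

def mean_var(theta):
    """Return (A'(theta), A''(theta)) = (mean, variance) of X under p_theta."""
    p = np.exp(theta * support - A(theta))
    mean = p @ support
    return mean, p @ (support - mean) ** 2

def mle_newton(eta_hat, theta=0.0, tol=1e-12, max_iter=100):
    """Solve A'(theta) = eta_hat, i.e. maximise f(theta) = theta*eta_hat - A(theta)."""
    f = lambda t: t * eta_hat - A(t)
    for _ in range(max_iter):
        mean, var = mean_var(theta)
        step = (eta_hat - mean) / var
        while f(theta + step) < f(theta):   # halve steps that would decrease f
            step /= 2
        theta += step
        if abs(step) < tol:
            break
    return theta

rng = np.random.default_rng(1)
data = rng.binomial(K, 0.3, size=500)   # illustrative sample on {0, ..., K}
eta_hat = data.mean()                   # step 1: empirical mean of T(X) = X
theta_hat = mle_newton(eta_hat)         # step 2: theta_hat = theta(eta_hat)
print(theta_hat, mean_var(theta_hat)[0], eta_hat)
```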

A Brief Proof.

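a) Follows from the definition of $A(\theta)$: $\mathbb{E}_\theta[e^{\lambda \cdot T(X)}] = \int e^{(\theta + \lambda)\cdot T(x) - A(\theta)}\,\mu(dx) = e^{A(\theta + \lambda) - A(\theta)}$.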

b) Differentiate the MGF at $\lambda = 0$.

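c) $\nabla^2 A(\theta) = \mathrm{Cov}_\theta(T(X))$ is positive semi-definite (positive definite under a minimal representation), so $A$ is convex.

$A^*$ is a definition at this point, so there is nothing to prove until d).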

d) For Legendre-Fenchel transforms, if $A$ is strictly convex and differentiable then gradients [and their inverses] are unique, so $\nabla A^* = (\nabla A)^{-1}$.

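e) Note this is just part c) with $\eta = \mathbb{E}_Q[T(X)]$. Note that Fenchel transforms satisfy $A(\theta) + A^*(\eta) \ge \theta \cdot \eta$, and that equality holds if and only if $\eta = \nabla A(\theta)$.

f) This is just part e) for the empirical distribution $\hat Q = \frac{1}{n}\sum_{k=1}^n \delta_{X_k}$.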
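Parts e) and f) can be written out in one line. This assumes the density is $p_\theta(x) = \exp(\theta \cdot T(x) - A(\theta))$ with respect to $\mu$; a separate base-measure factor would only add a $\theta$-free constant.

```latex
\mathbb{E}_Q[\log p_\theta(X)] = \theta \cdot \eta_Q - A(\theta),
  \qquad \eta_Q := \mathbb{E}_Q[T(X)],
\\
\sup_{\theta} \{\theta \cdot \eta_Q - A(\theta)\} = A^*(\eta_Q),
  \qquad \nabla A(\theta^*) = \eta_Q
  \iff \theta^* = \nabla A^*(\eta_Q),
\\
Q = \hat Q = \tfrac{1}{n}\textstyle\sum_{k=1}^n \delta_{X_k}
  \;\Rightarrow\; \eta_{\hat Q} = \tfrac{1}{n}\textstyle\sum_{k=1}^n T(X_k)
  \;\Rightarrow\; \hat\theta = \nabla A^*\big(\tfrac{1}{n}\textstyle\sum_{k=1}^n T(X_k)\big).
```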
