The Cross Entropy Method (CEM) is a generic optimization technique. It is a zero-th order method, i.e. you don’t gradients.1 So, for instance, it works well on combinatorial optimization problems, as well as reinforcement learning.

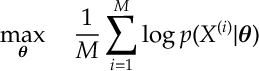

The Basic Idea. You want to maximize a function over

. We assume you can sample RVs

from

according to some parameterized distribution

. We assume that you can fit the parameter

given data

. We let

be the fit to this data. Finally

is an importance parameter used by the cross entropy method. The basic CEM routine is now as follows:

- Sample

from

and evaluate

.

- Order the sample

so

.

- Fit

to the top

samples,

and set

.

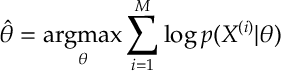

The above three steps are repeated until convergence. The fit step of the cross entropy method uses a specific optimization to find . In particular

maximizes the cross entropy of the sample

.2 We define this shortly. But essentially

is just the maximum likelihood estimator of the data

[assuming it was IID from distribution

for some

].

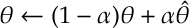

The update for can be smoothed out. Here we replace the update with

for some . Sometime we might want to smooth parameters differently, e.g. the mean and variance should be updated differently.

The Cross Entropy Method is a heuristic algorithm. There is some very limited results on its convergence. That said empirically it has enjoyed a lot a success and is well worth considering as a straight-forward method for a variety optimization problems.

Cross Entropy and Maximum Likelihood Estimation

The CEM recommends the update, , to maximize cross entropy. We define this here. However, really it is just a fancy way of saying that

should be the maximum likelihood estimate (MLE) of our data. Often MLEs can be found by direct calculation, e.g. for multivariate Gaussian distributions or a categorical probability distribution. There’s a reasonably large family of probability distributions – namely, exponential families – for which we can maximize cross entropy and find MLEs in closed form.

If a closed form solutions cannot be found with numerical techniques, then methods, such as Newton methods or stochastic gradient descent, “could” be used. However, in RL, often this is the point where people start to use other gradient based methods directly on the MDP objective (e.g. TD, Q-learning or actor-critic algorithms) rather than expend computation on a secondary MLE parameter optimization. CEM is meant to be a quick easy to implement method to get things working before going the whole-hog into some more complex method [e.g. Deep RL].

Def [Relative Entropy and Entropy] For two probability distributions and

the relative entropy between

and

is

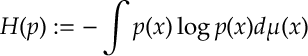

Here is some reference measure.3 The entropy of a distribution is

Relative entropy is a very common measure for the distance between two distributions. It arrises in Sanov’s Theorem. The relative entropy is positive; is convex in and

; and is minimized [equal to zero] when

[almost everywhere w.r.t.

]. The entropy is upto a constant the relative entropy when

is a uniform distribution with respect to

. When we remove add entropy from the relative entropy [which gets rid of the

term], we get the cross entropy:

Def [Cross Entropy] For two probability distributions and

the cross entropy is

Here is some reference measure.

Cross Entropy and MLEs. Notice if is the empirical distribution of some data,

i.e.

and if

is belongs to some parameterized family of distributions

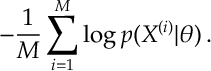

then the cross entropy is

When we apply the Cross Entropy Method, we set

Notice here we minimize the cross entropy . Notice here we have maximized the log-likelihood function of our data. So we could interpret this as finding the maximum likelihood estimator (MLE) of our the data . If we assumed the data is independent and from distribution

for some

.

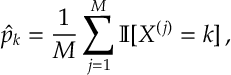

Catagorial. Let . That is

, for

and where

is such that

. Then the MLEs are

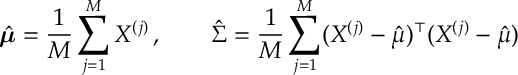

Multivariate Gaussian. If then the MLEs for

and

are

These are the two most important examples, as they deal with continuous and discrete data. We leave the proof of these examples as an exercise. However, we can go maximize MLEs for more general distributions.

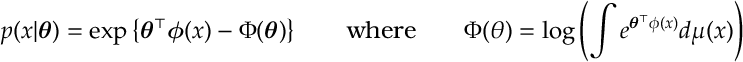

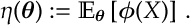

Cross Entropy, MLEs and Exponential Families. We cover exponential families in more detail in this post. However, the main points are that defines an exponential family, when

for natural parameters . The functions

are called sufficient statistics. We define moment parameters by

We let

We let be the inverse of

.4 We can solve

by calculating

- Caveat: though you may [or may not] need gradient methods in the sub-routine that maximizes the cross entropy.↩

- Note the one could instead [and indeed people do] use other alternatives to the cross entropy.↩

- Think of

as the counting measure for a sum or Lesbegue measure which corresponds to the usual integral taught in school.↩

can be calculated by finding the dual of

and differentiating. See Section [ExpFamilies].↩