This is a quick note on policy gradients in bandit problems. There are a number of excellent papers coming out on the convergence of policy gradients [1 ,2, 3]. I wrote this sketch argument a few months ago. Lockdown and homeschooling started and I found I did not have time to pursue it. Then yesterday morning I came across the following paper Mei et al. [1], which uses a different Lyapunov argument to draw an essentially the same conclusion [first for bandits then for general RL]. Although most of the time I don’t talk about my own research on this blog, I wrote this short note here just in case this different Lyapunov argument is of interest.

Further, all these results on policy gradient are [thus far] for deterministic models. I wrote a short paper that deals with a stochastic regret bound for a policy gradient algorithm much closer to projected gradient descent, again in the bandit setting. I’ll summarize this, as it might be possible to use it to derive stochastic regret bounds for policy gradients more generally. At the end, I discuss some issues around getting some proofs to work in the stochastic case and emphasize the point that is a good choice of lyapunov function [where $latex $q^\star$ is the probability of not choosing the optimal action.]

We consider a multi-arm bandit setting. Here there are a finite set of arms . At each time you can choose one arm

and you receive a reward

which we assume is an independent

random variable with mean

. You only get to see the reward of the arm that you choose and over time you want to move towards choosing the optimal [highest reward] arm.

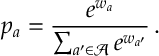

A policy gradient algorithm is an algorithm where you directly parameterize the probability of playing each arm and then you perform a gradient descent/stochastic approximation update on these parameters. The most popular parameterization is soft-max: here the probability of playing arm is

Here there are the weights ,

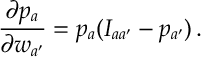

are applied to each arm. A quick calculation gives that

where here is the indicator function for

, i.e.

if

and

otherwise.

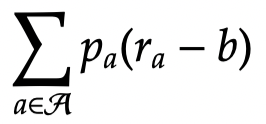

We want to maximize expected the reward (plus or minus a constant )

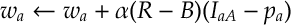

So given the last two expressions, for each arm , you can then perform the following stochastic gradient update:

where is the reward of the arm played;

is some baseline [which is a function that does not depend on

];

is the index of the arm that was played;

is the learning rate of the algorithm.

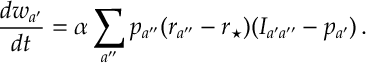

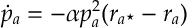

If we can model change in these weights over time with the following o.d.e.

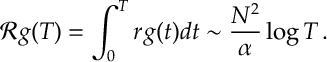

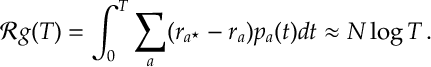

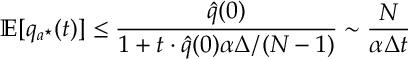

Here we let be the reward of the optimal arm [Note this term does not play a role the dynamics of our model but will be useful for analyzing regret.] The regret of the algorithm is defined to be

This measures how much reward has been lost by not playing the optimal arm.

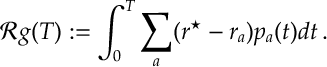

Given the above we also define

The following short argument bounds the change in the regret:

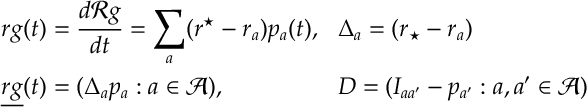

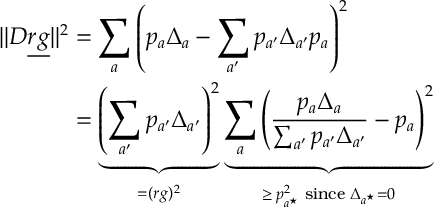

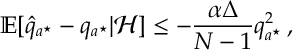

Let’s analyze the above term

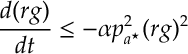

Therefore we have the bound

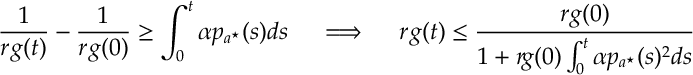

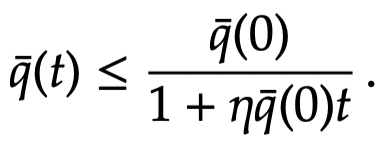

Dividing by and integrating gives

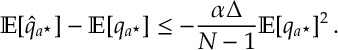

So notice things depend on the probability of playing the optimal arm. Assuming all arms start are equal the optimal arm will increase from

where

is the number of arms. Thus we get a bound:

Thus we have

(Notice the dependence on is very pessimistic since

. Also note the lower-bound on

is more rigorously bounded in [1] and [2] below)

Stochastic Regret Bounds

So do these sorts of convergence results flesh out when we allow for stochastic effects. A proof for a slightly different policy works, and I’ll sketch out the main argument then the technical issues.

First convergence for probabilities can’t go faster than as we know the regret of bandit problems is

. [For RL, it would seem that the similar probabilities should converge at

if actions belong to the boundary and

if they belong to an interior stationary point. Though I don’t have a proof for this other than s.d.e. heuristics.] Notice the algorithm above optimizes the following update when parameterize

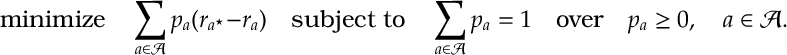

with a soft-max objective.

We can just not reparameterize and apply projected gradient descent.

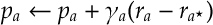

SAMBA. Analogous to the soft-max discussion above. This is how to derive a stochastic policy gradient algorithm in this case. [Projected] Gradient descent the perform the update  for

for . However, since the mean rewards

are not known, a stochastic gradient descent must be considered:

,

. Also, the optimal arm is unknown. So instead of

, we let

be the arm for which

is maximized and, in place, consider the update

,

. Since the reward from only one arm can be observed at each step, we apply importance sampling:

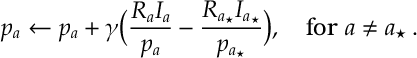

This gives a simple recursion for a multi-arm bandit problem. A name for this is SAMBA: stochastic approximation multi-arm bandit. Catchy acronyms aside, one can see this is really a stochastic gradient descent algorithm with some correction to make sure we don’t get too close to the boundary. Shortly, I’ll argue that we need to let depend on

and we should take

for

suitably small. A motivation is projected gradient descent or barrier methods in optimization [there is probably a regularization interpretation as well]. For policy gradients some form of projection/barrier seems to be required to maintain exploration. The general forms that work seems to be an important open problem.

Learning rate and o.d.e. analysis. Let’s consider the learning rate . Again, consider the gradient descent update

, for

. Notice if we let

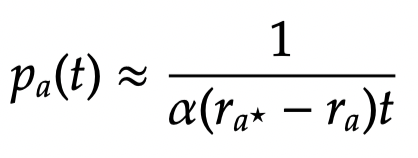

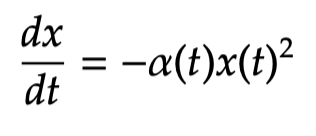

then the gradient descent algorithm approximately obeys the following differential equation:

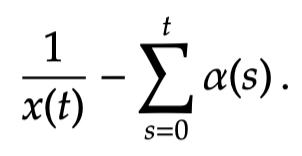

whose solution is

This implies

This suggest a learning rate of , applied to each

, gives a logarithmic regret.

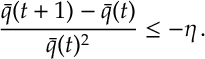

Discrete time analysis. A discrete time version of the above result is the following lemma

Lemma. If is positive with

for some , then

A proof of this is given at the end of this post.

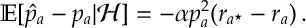

martingale analysis. Notice if we let be the update on the right-hand side of then the expected change in the update [given history

] is

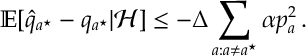

If we define , summing over

gives

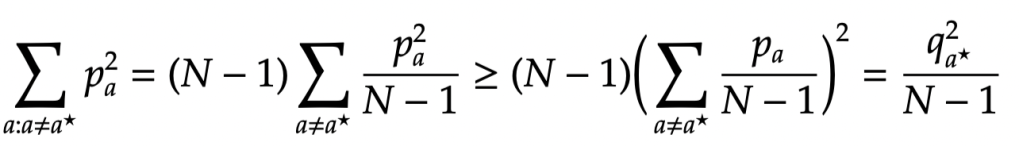

where .

By Jensen’s inequality:

Thus applying the above bound gives

Here we have a positive super-martingale, so by Doob’s Martingale Convergence Theorem converges as time goes to infinity. With a few standard tricks you can show the limit must be zero. We can get a rate of convergence from the discrete time analysis above. Taking expectations and applying Jensen’s inequality one more time gives:

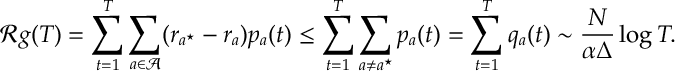

Thus the lemma above gives

which then leads the following stochastic regret bound

Discussion on Convergence Issues. Both soft-max and SAMBA step rules require some form of best arm identification. For SAMBA this is explicit in that we need the highest probability arm to equal the optimal arm

. [And a lot of the technical leg work in our paper involves proving this happens]. For soft-max it is clear that if

gets small for a sustained period of time then this slows convergence. So in both cases we need

to get big in a reasonable length of time. This argument is more straight-forward in the o.d.e case where we can bound

away from zero by a constant. In the stochastic case we need sub-martingale arguments to do this for us. And this can be fiddly as we are essentially dealing with a random walk that is close to threshold between recurrence and transience.

One thing that seems to come out of the analysis for both soft-max [when you include 2nd order terms] and SAMBA is that if the learning rate is too big then then this random walk switches from being transient [and thus converging on the correct arm] to being recurrent [and thus walking around the interior of the probability simplex within some region of the optimal arm]. One way to deal with this is to slow decrease the learning rate either as a function of either time or as a function of the state

. This appears to multiply on an extra

term on the regret bound in both cases while guaranteeing global convergence in the bandit setting. We can consider more slowly decreasing functions which impact regret to an arbitrarily small amount. So it seems like there is a regret of

for

arbitrarily small.

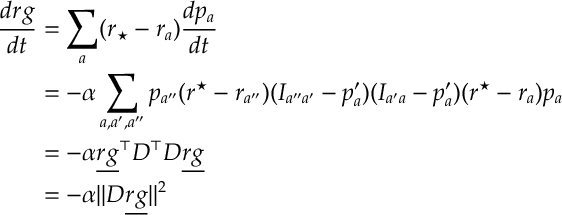

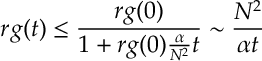

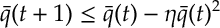

A final point is that in all the analysis so far [both softmax and SAMBA], we have used an o.d.e. of the form

which suggests that we apply a Lyapunov function of the form:

Proving martingales properties for these Lyapunov functions seems to be a key ingredient for getting stochastic proofs to work.

Appendix.

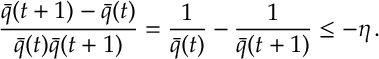

Proof of Lemma. Dividing the expression by gives

Since is less than

but still positive, dividing by

rather that

decreases the previous lower bound

Summing from gives

which rearranges to give

as required.

References

- Mei J, Xiao C, Szepesvari C, Schuurmans D. On the Global Convergence Rates of Softmax Policy Gradient Methods. arXiv preprint arXiv:2005.06392. 2020 May 13.

- Agarwal, A., Kakade, S. M., Lee, J. D., and Mahajan, G. Optimality and approximation with policy gradient methods in markov decision processes. arXiv preprint arXiv:1908.00261, 2019.

- Bhandari J, Russo D. Global optimality guarantees for policy gradient methods. arXiv preprint arXiv:1906.01786. 2019 Jun 5.