For a Markov chain $(\hat x_t : t \geq 0)$, consider the reward function

$$R(x) := \mathbb E_{x} \left[ \sum_{t=0}^\infty \beta^t r(\hat x_t) \right]$$

associated with rewards given by . We approximate the reward function

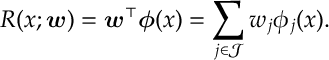

with a linear approximation,

Here we have taken our state and extracted features,

for

in finite set

, that we believe to be important to determining the overall reward function

. We then apply a vector of weights

to each of these features. Our job is to find weights that give a good approximation to

.

We know for instance that $R$ is a solution to the fixed point equation

![R(x) = \mathbb E_x [ \underbrace{ r(x) + \beta R(\hat{x}) }_{ =:\text{Target}(x) }],\qquad x\in \mathcal X. \label{TD:REqn}](https://appliedprobability.blog/wp-content/uploads/2019/04/f37eafd74f72c0026ae309ff66e9fef4.png?w=840)

The target, $\text{Target}(x)$, is an estimate of the true value of $R(x)$.

Here the target random variable considered is the TD(0) target. Other targets can be used, e.g. the sum of rewards inside the expectation defining $R(x)$ would be the Monte Carlo target, and there are various options in between, cf. TD($\lambda$). In function approximation, we generally cannot make the approximation $R(x;\bm w)$ equal its expected target at every state. So we attempt to minimize the objective

![\underset{\bm w}{\text{minimize}}\quad \mathbb E_\mu \left[ \left( \text{Target}(x) - R(x;\bm w) \right)^2 \right]\, .](https://appliedprobability.blog/wp-content/uploads/2019/04/1addbd180564f52b54ba473f29c91567.png?w=840)

Here the expectation is over , the stationary distribution of our Markov process. We can’t minimize this since we do not know the stationary distribution

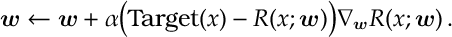

. We can only get samples and so we can instead apply Robbin’s-Munro/Stochastic Gradient Descent update to

As with tabular methods, these updates can be applied online or offline, and on a first-visit or every-visit basis.
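As a minimal sketch of this update, the following Python code runs the TD(0) version along a single sampled trajectory. The three-state chain `P`, the rewards `r`, the features `phi`, the step size `alpha` and the function name `td0_linear` are all illustrative assumptions, not taken from the source.

```python
import random


def td0_linear(P, r, phi, beta, alpha, n_steps, seed=0):
    """Run TD(0) with linear features along one sampled trajectory.

    P[x][y] is the transition probability from x to y, r[x] the reward,
    phi[x] the feature vector of state x (all hypothetical inputs).
    """
    rng = random.Random(seed)
    states = list(range(len(P)))
    w = [0.0] * len(phi[0])  # one weight per feature
    x = 0                    # arbitrary start state
    for _ in range(n_steps):
        x_next = rng.choices(states, weights=P[x])[0]
        value = sum(wj * fj for wj, fj in zip(w, phi[x]))
        value_next = sum(wj * fj for wj, fj in zip(w, phi[x_next]))
        # Target(x) - R(x; w), with Target(x) = r(x) + beta * R(x_next; w)
        delta = r[x] + beta * value_next - value
        # The gradient of R(x; w) in w is the feature vector phi(x)
        w = [wj + alpha * delta * fj for wj, fj in zip(w, phi[x])]
        x = x_next
    return w


# Assumed example: a lazy cycle on three states with features (1, x/2).
P = [[0.5, 0.5, 0.0], [0.0, 0.5, 0.5], [0.5, 0.0, 0.5]]
r = [0.0, 1.0, 3.0]
phi = [[1.0, 0.0], [1.0, 0.5], [1.0, 1.0]]

w = td0_linear(P, r, phi, beta=0.5, alpha=0.05, n_steps=50_000)
print("weights:", w)
print("approximate rewards:", [sum(wj * fj for wj, fj in zip(w, f)) for f in phi])
```

With a constant step size the weights generally keep fluctuating around a limit point rather than settling exactly; a decreasing step size is the usual Robbins-Monro choice when exact convergence is wanted.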

Quick Analysis of TD(0).

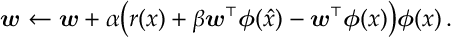

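Let’s do an informal analysis of TD(0). For TD(0) our target is $r(x) + \beta R(\hat x;\bm w)$, where $\hat x$ is the next state after $x$. Under a linear function approximation, and with step size $\alpha$, this gives an update

$$\bm w \leftarrow \bm w + \alpha \left( r(x) + \beta \bm\phi(\hat x)^\top \bm w - \bm\phi(x)^\top \bm w \right) \bm\phi(x)\, .$$

Suppose that our Markov chain is stationary with stationary distribution $\mu$. If we look at the expected change in our update term we get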

$$\begin{aligned} &\sum_x \mu(x) r(x) \bm\phi(x) + \sum _x \sum_y \mu(x) P_{xy} ( \beta \bm \phi(x) \bm \phi(y)^\top -\bm \phi(x) \bm \phi(x)^\top )\bm w \\ = & \underbrace{\Phi^\top M \bm r }_{=: \bm b} - \underbrace{ \Phi^\top M [I-\beta P] \Phi }_{=: A}\bm w\, .\end{aligned}$$

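Above $\Phi$ is the $|\mathcal X| \times |\mathcal J|$ matrix with entries $\Phi_{xj} = \phi_j(x)$, for states $x \in \mathcal X$ and features $j \in \mathcal J$, and $M$ is the $|\mathcal X| \times |\mathcal X|$ diagonal matrix with diagonal entries given by $\mu(x)$. We use these to define the length $|\mathcal J|$ vector $\bm b$ and the $|\mathcal J| \times |\mathcal J|$ matrix $A$, as defined above.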
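To make these objects concrete, here is a small NumPy sketch that builds $\Phi$, $M$, $\bm b$ and $A$ for a hypothetical three-state chain and then solves the linear system $A \bm w = \bm b$, the point at which the expected update vanishes. The chain, rewards, features and discount factor are assumptions chosen for illustration only.

```python
import numpy as np

# Assumed example: a lazy cycle on three states with features (1, x/2).
P = np.array([[0.5, 0.5, 0.0],
              [0.0, 0.5, 0.5],
              [0.5, 0.0, 0.5]])
r = np.array([0.0, 1.0, 3.0])
Phi = np.array([[1.0, 0.0],
                [1.0, 0.5],
                [1.0, 1.0]])
beta = 0.5
n = P.shape[0]

# Stationary distribution: solve mu P = mu together with sum(mu) = 1.
lhs = np.vstack([P.T - np.eye(n), np.ones((1, n))])
rhs = np.concatenate([np.zeros(n), [1.0]])
mu = np.linalg.lstsq(lhs, rhs, rcond=None)[0]

M = np.diag(mu)
b = Phi.T @ M @ r                                # length-|J| vector
A = Phi.T @ M @ (np.eye(n) - beta * P) @ Phi     # |J| x |J| matrix

# Weights at which the expected update b - A w is zero.
w_zero = np.linalg.solve(A, b)

print("mu =", mu)
print("b  =", b)
print("A  =\n", A)
print("w with b - A w = 0:", w_zero)
```

The name `w_zero` is a placeholder for the solution of $A \bm w = \bm b$; the least-squares solve for `mu` is one of several reasonable ways to obtain a stationary distribution for a small chain.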

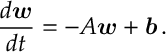

So, roughly, $\bm w$ moves according to the differential equation

$$\frac{d \bm w}{dt} = \bm b - A \bm w\, .$$

Now is the transition matrix of a Markov chain. Since its rows sum to

, its biggest eigenvalue is

. So we can expect that

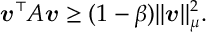

is in some sense “negative”, specifically it can be shown that

, where

. This then implies that

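This is sufficient to give convergence of the above differential equation: take $\bm w^*$ such that $A \bm w^* = \bm b$ and take $L(\bm w) = \tfrac{1}{2} \| \bm w - \bm w^* \|^2$; then

$$\frac{dL}{dt} = (\bm w - \bm w^*)^\top (\bm b - A \bm w) = -(\bm w - \bm w^*)^\top A (\bm w - \bm w^*) < 0 \qquad \text{for } \bm w \neq \bm w^*\, .$$

Thus we see that $\bm w(t) \rightarrow \bm w^*$ as $t \rightarrow \infty$.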
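The convergence argument can be checked numerically with a forward Euler discretization of the differential equation. In this sketch the matrix `A`, the vector `b`, the time step `dt` and the squared-distance function `lyapunov` are hypothetical choices for illustration; any `A` with $\bm w^\top A \bm w > 0$ for $\bm w \neq \bm 0$ should behave similarly for a small enough step.

```python
import numpy as np

# Illustrative values (assumed): a positive definite A and a vector b.
A = np.array([[0.5, 0.25],
              [0.25, 13 / 48]])
b = np.array([4 / 3, 7 / 6])
w_star = np.linalg.solve(A, b)   # the point where b - A w = 0


def lyapunov(w):
    """Half the squared distance from w to w_star."""
    return 0.5 * np.sum((w - w_star) ** 2)


w = np.zeros(2)
dt = 0.1
values = [lyapunov(w)]
for _ in range(2000):
    w = w + dt * (b - A @ w)     # forward Euler step of dw/dt = b - A w
    values.append(lyapunov(w))

print("w after integration:", w)
print("w_star:             ", w_star)
print("distance function at start and end:", values[0], values[-1])
```

The recorded `values` give a discrete-time view of the decreasing distance to `w_star`; the Euler scheme is only an approximation of the continuous-time dynamics.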

Convergence is great and everything, but we must verify that the solution obtained, $\bm w^*$, is a “good” solution. First, notice that the reward function $\bm R = (R(x) : x \in \mathcal X)$ satisfies

$$\bm R = \bm r + \beta P \bm R =: T_0(\bm R)\, .$$

This is just the fixed point equation above with $R$ interpreted as a vector and the expectation as a matrix operation with respect to transition matrix $P$.

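Second, notice that the approximation closest to the rewards $\bm R$, in the sense of least squares weighted by $\mu$, is given by a projection, specifically

$$\Pi \bm R := \Phi [\Phi^\top M \Phi]^{-1} \Phi^\top M \bm R\, .$$

Third, we see the equations satisfied by $\bm w^*$ can be expressed in a form somewhat similar to the above expression for $\bm R$. Specifically, we rearrange the expression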

$$\begin{aligned} A \bm w^* = \bm b & \iff \Phi^\top M [I-\beta P] \Phi \bm w^*\, = {\Phi^\top M \bm r } \\ & \iff \Phi^\top M \Phi \bm w^*\, = {\Phi^\top M \bm r } + \Phi^\top M \beta P \Phi \bm w^* \\ & \iff \underbrace{ \Phi \bm w^* }_{ \bm R(\bm w^*) } = \underbrace{ \Phi [\Phi^\top M \Phi ]^{-1} {\Phi^\top M } }_{ \Pi } \underbrace{ (\bm r + \beta P \Phi \bm w^*) }_{ T_0(\bm R(\bm w^*)) }\, .\end{aligned}$$

So, while satisfies

, we see that

satisfies

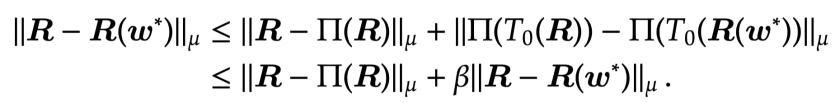

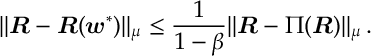

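We can use these identities satisfied by $\bm R$ and $\bm R(\bm w^*)$ to show that the approximation $\bm R(\bm w^*)$ is comparable to the best approximation of $\bm R$, namely $\Pi \bm R$. Write $\| \bm v \|_\mu := (\bm v^\top M \bm v)^{1/2}$ for the $\mu$-weighted norm. Since $T_0$ is a $\beta$-contraction and projections never increase distances (both properties hold in this norm and are relatively easy to verify):

$$\begin{aligned} \| \bm R(\bm w^*) - \bm R \|_\mu & \leq \| \bm R(\bm w^*) - \Pi \bm R \|_\mu + \| \Pi \bm R - \bm R \|_\mu \\ & = \| \Pi T_0(\bm R(\bm w^*)) - \Pi T_0(\bm R) \|_\mu + \| \Pi \bm R - \bm R \|_\mu \\ & \leq \beta \| \bm R(\bm w^*) - \bm R \|_\mu + \| \Pi \bm R - \bm R \|_\mu\, . \end{aligned}$$

So

$$\| \bm R(\bm w^*) - \bm R \|_\mu \leq \frac{1}{1-\beta} \| \Pi \bm R - \bm R \|_\mu\, .$$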

Thus we see that we reach an approximation whose error is within a constant factor of the error of the “best” linear approximation.
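As a numerical sanity check of this comparison, the sketch below computes the exact reward vector, its $\mu$-weighted projection onto the features, and the TD(0) limit for a hypothetical three-state chain, then compares the two errors in the $\mu$-weighted norm. The chain, rewards, features, discount factor and the helper `mu_norm` are assumptions made for illustration; the factor $1/(1-\beta)$ is the one that follows from the contraction argument.

```python
import numpy as np

# Assumed example: a lazy cycle on three states with features (1, x/2).
P = np.array([[0.5, 0.5, 0.0],
              [0.0, 0.5, 0.5],
              [0.5, 0.0, 0.5]])
r = np.array([0.0, 1.0, 3.0])
Phi = np.array([[1.0, 0.0],
                [1.0, 0.5],
                [1.0, 1.0]])
beta = 0.5
n = len(r)

# This P is doubly stochastic, so its stationary distribution is uniform.
mu = np.full(n, 1.0 / n)
M = np.diag(mu)


def mu_norm(v):
    """The mu-weighted Euclidean norm sqrt(v^T M v)."""
    return np.sqrt(v @ M @ v)


# Exact rewards: the solution of R = r + beta P R.
R = np.linalg.solve(np.eye(n) - beta * P, r)

# Projection onto the span of the features in the mu-weighted norm.
Pi = Phi @ np.linalg.solve(Phi.T @ M @ Phi, Phi.T @ M)

# TD(0) limit: the solution of A w = b.
A = Phi.T @ M @ (np.eye(n) - beta * P) @ Phi
b = Phi.T @ M @ r
w_star = np.linalg.solve(A, b)

td_error = mu_norm(Phi @ w_star - R)
best_error = mu_norm(Pi @ R - R)

print("error of TD(0) limit:   ", td_error)
print("error of projection:    ", best_error)
print("bound best/(1 - beta):  ", best_error / (1 - beta))
```

In this example the TD(0) limit is slightly worse than the projection but stays well inside the bound, which is what the argument above predicts.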

References.

The exposition here is due to Van Roy and Tsitsiklis.

Tsitsiklis, John N., and Benjamin Van Roy. “Analysis of temporal-difference learning with function approximation.” Advances in Neural Information Processing Systems. 1997.