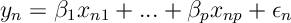

We consider the following formulation of Lai, Robbins and Wei (1979), and Lai and Wei (1982). Consider the following regression problem,

for where

are unobservable random errors and

are unknown parameters.

Typically for a regression problem, it is assumed that inputs are given and errors are IID random variables. However, we now want to consider a setting where we sequentially choose inputs

and then get observations

, and errors

are a martingale difference sequence with respect to the filtration

generated by

.

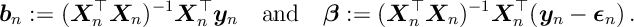

We let be the matrix of inputs and

be the matrix of outputs. Further we let

be the least squares estimate of

given

and

.

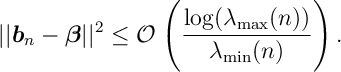

The following result gives a condition on the eigenvalues of the design matrix for

to converge to

and also gives a rate of convergence.

If and

are, respectively, the minimum and maximum eigenvalues of the design matrix

and if we assume that for some

, almost surely,

Then whenever we have

![\lambda_{\min}(n) \xrightarrow[n\rightarrow \infty ]{} \infty \quad \text{and} \quad \frac{\log ( \lambda_{\max}(n) ) }{\lambda_{\min} (n) } \xrightarrow[n\rightarrow \infty ]{} 0](https://appliedprobability.blog/wp-content/uploads/2019/02/bfbdf810da03807299056fe464e05b40.png?w=840)

then converges to

and

In what follows, is the Euclidean norm for a vector and for a matrix

. (Note that it is well known that

the maximum eigenvalue of

and

)

Outline of proof. The least squares estimate to the above regression problem is given by

So where

. To prove the above theorem first note that

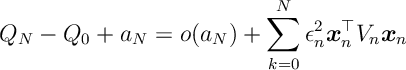

The inequality above we apply the Cauchey-Schwartz inequality. We bound using the Sherman-Morrison formula. Specifically we will show that

where is some positive increasing sequence. So since

convergence is determine by the rate of convergence of the sequence

which, with some linear algebra, can be bounded by . Thus we arrive at a bound of the form

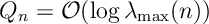

In what follows we must study the asumptotic behaviour of . What we will show is

Proposition. Almost surely

Proof. To prove this proposition we will require some lemmas, such as the Sherman-Morris formula. These are stated and proven after the proof of this result.

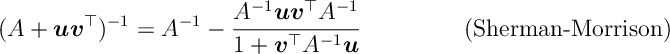

The Sherman-Morrison Formula states that:

Note that

Thus

Thus

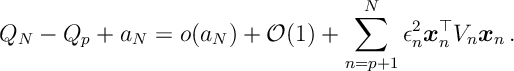

Thus summing and rearranging a little

Notice in the above, the first summation (before the equals sign) only acts to decrease , while on the right hand side, the first term is a martingale difference sequence and the second term is a quadratic form.

Now because the above Martingale difference sequence is a martingale we have that

In the second equality above, we use that . Thus we have that

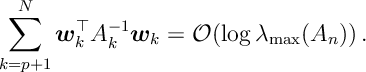

By Lemma 2 (below) we have that

Thus we have that

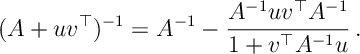

Lemma 1 [Sherman-Morrison Formula] For an invertible Matrix and two vectors

and

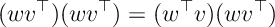

Proof. Recalling that the outer-product of two vectors is the matrix

it holds that

(Nb. This is matrix multiplication: each column is a constant times and every row is a constant time

, so the dot product comes out.)

Using this identity note that

![\begin{aligned} \bigg( I - \frac{wv^\top}{I + v^\top w} \bigg) \bigg( 1 + w v^\top \bigg) & = I + wv^\top - \frac{wv^\top }{1+v^\top w} + \frac{1}{1+v^\top w} (wv^\top) ( wv^\top ) \\ & = I + wv^\top - \frac{wv^\top}{1+ v^\top w} \left[ 1 + w^\top v\right] \\ & = I\, . \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/02/c4588bb75c7c2f1f2f16f3eb268c0870.png?w=840) So

So . Now letting

,

as required.

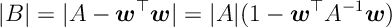

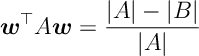

The following in some sense repeatedly analyses to the determinant under the Sherman-Morrison formula.

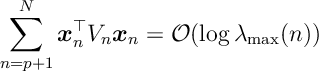

Lemma 2. If are a sequence of vectors and we let

then

Proof. First note that if then, as was also in the Sherman-Morris formula

Thus

which should remind you of the derivative of the logarithm. (Also note that this tells us that determinant is increasing and that .) If we apply this to the above sum and apply the concavity of the logarithm

Since is the product of all eigenvalues

. So we see that

References

This is based on reading:

T.L Lai, Herbert Robbins, C.Z Wei, “Strong consistency of least squares estimates in multiple regression II”, Journal of Multivariate Analysis, Volume 9, Issue 3, 1979, Pages 343-361.

Lai, Tze Leung; Wei, Ching Zong. Least Squares Estimates in Stochastic Regression Models with Applications to Identification and Control of Dynamic Systems. Ann. Statist. 10 (1982), no. 1, 154–166. doi:10.1214/aos/1176345697.