This section is intended as a brief introductory recap of Markov chains. A much fuller explanation and introduction is provided in standard texts e.g. Norris, Bremaud, or Levin & Peres (see references below).

Introductory example: snakes and ladders

We highlight some of the key properties of Markov chains: how to calculate transitions, how the past effects the current movement of the processes, how to construct a chain, what the long run behavior of the process may (or may not) look like. We give an initial example to better position our intuition.

Below in Figure [Snakes_and_ladders], we are given a game of snakes and ladders (or shoots and ladders in the US). Here a counter (coloured red) is placed on the board at the start. You roll a dice. You move along the numbered squares by an amount given by the dice. The objective is to get to the finish. If the counter lands on a square with a snake’s head, you must go back to the square at the snakes tail and, if you land on a square at the bottom of a ladder, go to the top of the ladder.

We let be the position of the counter on the board after the dice has been thrown

times. The processes

is a discrete time Markov chain. Two things to note: First, note that given the counter is currently at a state, e.g. on square

, the next square reached by the counter – or indeed the sequence of states visited by the counter after being on square

– is not effected by the path that was used to reach the square. I.e. This is called the Markov Property. Second, notice each movement of the counter from one state is a function of two pieces of information the current state and the independent random roll of the dice. In this way we can construct (or simulate) the random process.

Definitions

Let be a countable set. An initial distribution

is a positive vector whose components sums to one. A transition matrix is a postive matrix whose rows sum to one, that is, for

With an initial distribution and a transition matrix

, you can define a Markov chain. Basically

determines the probability the process starts in state

Vand

gives the probability of going to

if you are currently in state

.

Def [Discrete Time Markov Chain] We say that a sequence of random variables is a discrete time Markov chain, with initial distribution

and transition matrix

if for

,

and

The condition is often called the Markov property and is the key defining feature of a Markov chain or, more generally, Markov process. It states that the past and future

are conditionally independent of the present

. Otherwise stated, is says that, when we know the past and present states

, the distribution of the future states

is only determined by the present state

. Think of a board game like snakes and ladders, where you go in the future is only determined by where you are now and not how you got there; this is the Markov property.

The following proposition shows that the evolution of a Markov chain can be constructed from its the current state and an independent dice throw.

Prop 1 [Constructing Markov Chains] Take a function ,

a random variable on

, and

, independent uniform

random variables. The sequence

constructed with the recursion

is a discrete time Markov chain. Moreover all discrete time Markov chains can be constructed in this way.

The following proposition will be useful when we want to sum up a long sequence of rewards.

Prop 2 [Markov Chains and Potential Functions] For be a bounded function and for

,

![R(x) = \mathbb{E}_x \left[ \sum_{t=0}^{\infty} \beta^t r(X_t) \right]](https://appliedprobability.blog/wp-content/uploads/2019/01/c92fec1d382004fee568c67d426cd236.png?w=840)

is the unique solution to the equation

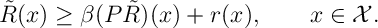

Moreover, if function satisfies

then ,

.

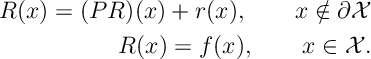

The following is an alternative formulation of the previous proposition.

Prop 3. Let be a subset of

and let

be the hitting time on

i.e.

and take

then

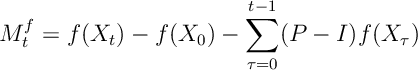

There is a close connection between Markov chains and Martingales that we will want to use later when considering Markov Decision Processes.

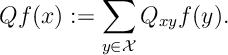

Prop 4 [Markov Chains and Martingale Problems] Show that a sequence of random variables is a Markov chain if and only if, for all bounded functions

, the process

is a Martingale with respect to the natural filtration of . Here for any matrix, say

, we define

Some references

- Norris, J.R., 1997. Markov chains. Cambridge University Press.

- Bremaud, P., 2013. Markov chains: Gibbs fields, Monte Carlo simulation, and queues (Vol. 31). Springer Science & Business Media.

- Levin, D.A. and Peres, Y., 2017. Markov chains and mixing times (Vol. 107). American Mathematical Soc..

2 thoughts on “Markov Chains: A Quick Review”