For infinite time MDPs, we cannot apply backward induction to Bellman’s equation starting from the final time period, as we could for a finite time MDP. So we need other algorithms to solve MDPs.

At a high level, for a Markov Decision Process (where the transitions are known), an algorithm for solving it involves two steps:

- (Policy Improvement) Here you take your initial policy $\pi_0$ and find a new, improved policy $\pi$, for instance by solving Bellman’s equation:

![\pi(x) \in \argmax_{a\in \mathcal A} \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X},\pi_0) \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2019/01/b498c90d70da814b5e8114fea4f09f37.png?w=840)

- (Policy Evaluation) Here you find the value of your policy, for instance by finding the reward function for policy $\pi$:

![R(x,\pi) = \mathbb E^\pi_{x} \left[ \sum_{t=0}^\infty \beta^t r(X_t,\pi(X_t))\right]](https://appliedprobability.blog/wp-content/uploads/2019/01/f7270d2c72c480595dd2bb86a83192a4.png?w=840)
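The two steps can be sketched in Python for an MDP with finitely many states and actions. This is a minimal sketch, not the post's own code: it assumes states and actions are numbered from 0, stores transition probabilities in a hypothetical nested list `P[a][x][y]` $= \mathbb P(\hat X = y \mid x, a)$ and rewards in `r[x][a]`, takes $\beta < 1$, and evaluates a policy by iterating $R \leftarrow r_\pi + \beta P_\pi R$ to a fixed point rather than solving the linear equations exactly.

```python
def evaluate_policy(P, r, beta, policy, tol=1e-10):
    """Policy evaluation: approximate R(x, pi) for a stationary policy.

    Iterates R <- r_pi + beta * P_pi R, which converges for 0 <= beta < 1.
    """
    n = len(r)
    R = [0.0] * n
    while True:
        new_R = [
            r[x][policy[x]]
            + beta * sum(P[policy[x]][x][y] * R[y] for y in range(n))
            for x in range(n)
        ]
        if max(abs(u - v) for u, v in zip(new_R, R)) < tol:
            return new_R
        R = new_R


def improve_policy(P, r, beta, R):
    """Policy improvement: pi(x) in argmax_a { r(x,a) + beta * E_{x,a}[R(X_hat)] }."""
    n, m = len(r), len(r[0])

    def q(x, a):
        return r[x][a] + beta * sum(P[a][x][y] * R[y] for y in range(n))

    return [max(range(m), key=lambda a: q(x, a)) for x in range(n)]
```

For example, with two states, an action 0 that stays put, an action 1 that switches state, a reward of 1 only for staying in state 1, and $\beta = 0.9$, evaluating "always stay" gives $R \approx (0, 10)$, and the improvement step returns the policy that switches in state 0 and stays in state 1.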

Value iteration

Value iteration provides an important practical scheme for approximating the solution of an infinite time horizon Markov decision process.

Def. Take $V_0(x) = 0$ for all $x$ and recursively calculate

![\begin{aligned} \pi_{s+1}(x) \in & \argmax_{a\in {\mathcal A} } \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ V_s(\hat{X}) \right] \right\} \\ V_{s+1}(x) & = \max_{a\in {\mathcal A} } \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ V_s(\hat{X}) \right] \right\}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/cb6aeccf69eee99c3b72edba44cc8614.png?w=840)

for $s = 0, 1, 2, \dots$. This is called value iteration.

We can think of the two display equations above, respectively, as the policy improvement and policy evaluation steps. Notice that we don’t really need to do the policy improvement step in order to do each iteration: the update for $V_{s+1}$ does not use $\pi_{s+1}$. Notice also that the policy evaluation step only evaluates one action under the new policy; afterwards, the value is given by the previous estimate $V_s$.
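A minimal Python sketch of value iteration, assuming hypothetical conventions: transition probabilities in a nested list `P[a][x][y]`, rewards in `r[x][a]`, and states and actions numbered from 0. It starts from $V_0 = 0$ and runs a fixed number of iterations; each pass computes the bracketed quantity once and uses it for both $\pi_{s+1}$ and $V_{s+1}$.

```python
def value_iteration(P, r, beta, num_iters):
    """Run num_iters steps of value iteration starting from V_0 = 0.

    Returns (V_s, pi_s) after s = num_iters steps.
    """
    n, m = len(r), len(r[0])
    V = [0.0] * n  # V_0(x) = 0 for every state x
    policy = [0] * n
    for _ in range(num_iters):
        # Q(x, a) = r(x, a) + beta * E_{x,a}[V_s(X_hat)], shared by both updates
        Q = [[r[x][a] + beta * sum(P[a][x][y] * V[y] for y in range(n)) for a in range(m)]
             for x in range(n)]
        policy = [max(range(m), key=lambda a: Q[x][a]) for x in range(n)]  # pi_{s+1}
        V = [max(Q[x]) for x in range(n)]  # V_{s+1}
    return V, policy
```

Since $V_{s+1}$ only needs the row maxima of `Q`, the line computing `policy` could be dropped until the last iteration, which matches the remark that the improvement step is not needed at every iteration.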

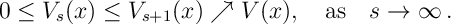

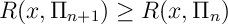

The following result shows that value iteration converges to the optimal value function.

Thrm 1. For positive programming, i.e. where all rewards are positive and the discount factor $\beta$ belongs to the interval $(0,1]$, we have $V_s(x) \nearrow V(x)$ as $s \to \infty$, for all $x$. Here $V$ is the optimal value function.

The following lemma is the key property behind the convergence of value iteration, as well as that of a number of other algorithms.

Lemma 1. For a reward function $R$, define

![\mathcal L R(x) = \max_{a\in {\mathcal A} } \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X}) \right] \right\}.](https://appliedprobability.blog/wp-content/uploads/2019/01/a3e1fb623f3a80aaa02c31922e54a94f.png?w=840)

Show that if $R(x) \geq \tilde{R}(x)$ for all $x$, then $\mathcal L R(x) \geq \mathcal L \tilde{R}(x)$ for all $x$.

Proof. Since $R \geq \tilde{R}$ and $\beta \geq 0$, for every $a \in \mathcal A$ we clearly have

![r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X}) \right] \geq r(x,a) + \beta \mathbb{E}_{x,a} \left[ \tilde{R}(\hat{X}) \right].](https://appliedprobability.blog/wp-content/uploads/2019/01/99582ce5a214079285c7b972a949a0ef.png?w=840)

Now maximize both sides over $a \in \mathcal A$.

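Proof of Thrm 1. Note that $V_1(x) = \max_{a \in \mathcal A} r(x,a) \geq 0 = V_0(x)$, since all rewards are positive. Now, since $V_{s+1} = \mathcal L V_s$, repeatedly applying Lemma 1 to the inequality $V_1 \geq V_0$ gives that $V_{s+1}(x) \geq V_s(x)$ for all $x$ and all $s$.

Since $V_s(x)$ is increasing in $s$, $V_s(x) \nearrow V_\infty(x)$ for some function $V_\infty$. We must show that $V_\infty$ is the optimal value function of the MDP.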

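Next note that $V_s$ is the optimal value function for the finite time MDP with rewards $r(x,a)$, zero terminal reward and duration $s$. Since all rewards are positive, continuing past time $s$ can only add reward, so $V_s(x) \leq V(x)$, and thus $V_\infty(x) \leq V(x)$. Further, for any policy $\pi$, the $s$-step reward satisfies

$R_s(x,\pi) := \mathbb E^\pi_x \left[ \sum_{t=0}^{s-1} \beta^t r(X_t,\pi(X_t)) \right] \leq V_s(x)$.

Now take limits as $s \to \infty$: by monotone convergence, $R(x,\pi) \leq V_\infty(x)$. Now maximize over $\pi$ to see that $V(x) \leq V_\infty(x)$. So $V_\infty(x) = V(x)$, as required.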
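Thrm 1 can be checked numerically on a small example. The sketch below uses hypothetical names and the conventions `P[a][x][y]` for transition probabilities and `r[x][a]` for rewards, which are assumed non-negative. It records every iterate $V_0, V_1, \dots$ and tests that each is pointwise at least as large as the one before, which is what the proof derives from Lemma 1.

```python
def value_iterates(P, r, beta, num_iters):
    """Return [V_0, V_1, ..., V_s] from value iteration started at V_0 = 0."""
    n, m = len(r), len(r[0])
    V = [0.0] * n
    history = [V]
    for _ in range(num_iters):
        # V_{s+1}(x) = max_a { r(x,a) + beta * E_{x,a}[V_s(X_hat)] }
        V = [max(r[x][a] + beta * sum(P[a][x][y] * V[y] for y in range(n))
                 for a in range(m)) for x in range(n)]
        history.append(V)
    return history


def is_nondecreasing(history):
    """Check V_{s+1}(x) >= V_s(x) for every s and every state x."""
    return all(b >= a for prev, cur in zip(history, history[1:]) for a, b in zip(prev, cur))
```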

Ex. A robot is placed on the following grid.

The robot can choose the action to move left, right, up or down; if the move would hit a wall, it stays in the same position. (Walls are colored black.) With probability 0.8, the robot does not follow its chosen action and instead makes a random action. The rewards for the different end states are shown in color above. Write a program that uses value iteration to find the optimal policy for the robot.

Ans. Notice that the robot does not just take the shortest route, i.e. some forward planning is required.

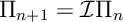
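The exercise's grid is only given as a picture, so this is a sketch on a made-up 3-by-4 grid under stated assumptions, not the post's program: the random action is uniform over the four moves (so it may coincide with the chosen one), each step earns zero reward, an end state pays its reward once and the run then stops, and $\beta = 0.9$. All names (`GRID`, `step`, `transition`, ...) are hypothetical.

```python
# Hypothetical 3-by-4 grid (the post's grid is not reproduced here):
# '.' free cell, '#' wall, '+' / '-' end states with rewards +1 / -1.
GRID = ["...+",
        ".#.-",
        "...."]
MOVES = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}
END_REWARD = {"+": 1.0, "-": -1.0}
P_RANDOM = 0.8  # w.p. 0.8 the chosen action is replaced by a uniformly random move
BETA = 0.9      # assumed discount factor


def cells():
    """All non-wall cells as (row, column) pairs."""
    return [(i, j) for i, row in enumerate(GRID) for j, c in enumerate(row) if c != "#"]


def step(cell, move):
    """Deterministic move; hitting a wall or the edge leaves the robot in place."""
    i, j = cell[0] + MOVES[move][0], cell[1] + MOVES[move][1]
    inside = 0 <= i < len(GRID) and 0 <= j < len(GRID[0])
    return (i, j) if inside and GRID[i][j] != "#" else cell


def transition(cell, action):
    """Distribution of the next cell when the robot chooses `action` in `cell`."""
    probs = {}
    for move in MOVES:
        p = P_RANDOM / len(MOVES) + (1 - P_RANDOM if move == action else 0.0)
        nxt = step(cell, move)
        probs[nxt] = probs.get(nxt, 0.0) + p
    return probs


def value_iteration(tol=1e-9):
    """Value iteration from V_0 = 0; returns the values and the greedy policy."""
    V = {c: 0.0 for c in cells()}
    while True:
        new_V, policy = {}, {}
        for c in V:
            kind = GRID[c[0]][c[1]]
            if kind in END_REWARD:
                new_V[c] = END_REWARD[kind]  # end state: its reward is collected, then the run stops
                continue
            # zero reward per step, so a move is worth the discounted value of where it leads
            q = {a: BETA * sum(p * V[nxt] for nxt, p in transition(c, a).items()) for a in MOVES}
            policy[c] = max(q, key=q.get)
            new_V[c] = q[policy[c]]
        if max(abs(new_V[c] - V[c]) for c in V) < tol:
            return new_V, policy
        V = new_V
```

Printing `policy` after `V, policy = value_iteration()` lists the chosen move for every non-end cell; under these assumptions the cell just left of the +1 end state picks `R`.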

Policy Iteration

We consider a discounted program with rewards $r(x,a)$ and discount factor $\beta \in (0,1)$.

Def [Policy Iteration] Given the stationary policy $\Pi$, we may define a new (improved) stationary policy, $\mathcal I \Pi$, by choosing for each $x$ the action $\mathcal I \Pi(x)$ that solves the following maximization

![{\mathcal I}\Pi (x) \in \argmax_{a\in{\mathcal A} } \; r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X},\Pi) \right]](https://appliedprobability.blog/wp-content/uploads/2019/01/f62894b144333bf596b79660d82fccf9.png?w=840)

where $R(x,\Pi)$ is the value function for policy $\Pi$. We then calculate $R(x,\mathcal I \Pi)$, the value function of the new policy. Recall that, by Thrm 2 in Markov Chains: A Quick Review, this solves the equation

![R(x,\mathcal I \Pi) = r(x,\mathcal I \Pi (x)) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X},\mathcal I \Pi) \right]](https://appliedprobability.blog/wp-content/uploads/2019/01/3989a96503a5e70fdbb5236cf232520d.png?w=840)
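Assuming a finite state space, the equation above is a linear system. Writing $P_\Pi$ for the transition matrix with entries $P_\Pi(x,y) = \mathbb P(\hat X = y \mid x, \Pi(x))$ and $r_\Pi(x) = r(x,\Pi(x))$ (notation introduced here for illustration), policy evaluation amounts to the following; the inverse exists and the series converges because every row of $\beta P_\Pi$ sums to $\beta < 1$:

```latex
R_\Pi = r_\Pi + \beta P_\Pi R_\Pi
\quad\Longleftrightarrow\quad
(I - \beta P_\Pi) R_\Pi = r_\Pi
\quad\Longleftrightarrow\quad
R_\Pi = (I - \beta P_\Pi)^{-1} r_\Pi = \sum_{t=0}^{\infty} \beta^t P_\Pi^t \, r_\Pi .
```

In practice one solves the linear system directly (e.g. by Gaussian elimination) rather than forming the inverse.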

Policy iteration is the algorithm that takes $\Pi_{n+1} = \mathcal I \Pi_n$ for $n = 0, 1, 2, \dots$, starting from a stationary policy $\Pi_0$.

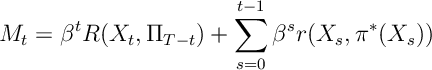
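A minimal Python sketch of policy iteration, assuming hypothetical conventions: transition probabilities in `P[a][x][y]`, rewards in `r[x][a]`, and $\beta < 1$. Each round evaluates $\Pi_n$ exactly by solving $R(x) = r(x,\Pi_n(x)) + \beta \sum_y \mathbb P(\hat X = y \mid x, \Pi_n(x)) R(y)$ with Gaussian elimination, then applies the improvement step. It keeps the current action unless another is strictly better, so ties cannot make it cycle, and it stops when the policy no longer changes.

```python
def evaluate(P, r, beta, policy):
    """Exact policy evaluation: solve (I - beta P_Pi) R = r_Pi.

    For 0 <= beta < 1 this matrix is strictly diagonally dominant, so Gaussian
    elimination without pivoting never divides by zero.
    """
    n = len(r)
    # augmented matrix [I - beta P_Pi | r_Pi]
    M = [[float(x == y) - beta * P[policy[x]][x][y] for y in range(n)] + [r[x][policy[x]]]
         for x in range(n)]
    for k in range(n):  # forward elimination
        for i in range(k + 1, n):
            f = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= f * M[k][j]
    R = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        R[i] = (M[i][n] - sum(M[i][j] * R[j] for j in range(i + 1, n))) / M[i][i]
    return R


def policy_iteration(P, r, beta, policy):
    """Run Pi_{n+1} = I Pi_n from Pi_0 = policy until the policy stops changing.

    Returns the final policy and the value vectors R(., Pi_0), R(., Pi_1), ...
    """
    n, m = len(r), len(r[0])
    policy, values = list(policy), []
    while True:
        R = evaluate(P, r, beta, policy)
        values.append(R)
        new_policy = []
        for x in range(n):
            q = [r[x][a] + beta * sum(P[a][x][y] * R[y] for y in range(n)) for a in range(m)]
            best = max(range(m), key=lambda a: q[a])
            # keep the current action unless another is strictly better (no cycling on ties)
            new_policy.append(best if q[best] > q[policy[x]] + 1e-12 else policy[x])
        if new_policy == policy:
            return policy, values
        policy = new_policy
```

The returned list `values` should be pointwise non-decreasing from one entry to the next, which is the first claim of Thrm 2.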

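Thrm 2. Under Policy Iteration, $R(x,\Pi_{n+1}) \geq R(x,\Pi_n)$ for all $x$ and $n$, and, for bounded programming, $R(x,\Pi_n) \nearrow V(x)$ as $n \to \infty$, where $V$ is the optimal value function.

Proof. Write $\pi(x)$ for the action that the stationary policy $\Pi$ takes in state $x$. By the optimality of $\mathcal I \Pi(x)$ with respect to $R(\cdot,\Pi)$, we have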

![\begin{aligned} R(x,\Pi) = r(x,\pi(x)) + \beta \mathbb E_{x,\pi(x)} \left[ R(\hat{X},\Pi) \right] \leq r(x,\mathcal I \Pi (x)) + \beta \mathbb E_{x,\mathcal I \pi(x)} \left[ R(\hat{X},\Pi) \right]\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/481ce83141f0956ced2927eff942117a.png?w=840)

Thus from the last part of Thrm 2 in Markov Chains: A Quick Review, we know that $R(x,\mathcal I \Pi) \geq R(x,\Pi)$ for all $x$. This shows that policy iteration improves solutions. Now we must show that it converges to the optimal solution.

First note that, for any action $a \in \mathcal A$,

![\begin{aligned} r(x,a) + \beta \mathbb E_{x,a} \left[ R(\hat{X},\Pi) \right] & \leq r(x,\mathcal I \Pi(x)) + \beta \mathbb E_{x,\mathcal I \Pi(x)} \left[ R(\hat{X},\Pi) \right] \\ & { \leq } r(x, \mathcal I \Pi(x)) + \beta \mathbb E_{x,\mathcal I \Pi(x)} \left[ R(\hat{X},\mathcal I \Pi) \right] = R(x,\mathcal I \Pi) .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/c247a644cee16657a78f3f35b76e14c5.png?w=840)

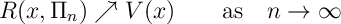

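We can use the above inequality to show that, for a fixed horizon $T$, the following process is a supermartingale under the optimal policy:

$M_t = \beta^t R(X_t,\Pi_{T-t}) + \sum_{\tau=0}^{t-1} \beta^\tau r(X_\tau,\pi^*(X_\tau))$, for $t = 0, 1, \dots, T$,

where $\pi^*$ is the optimal policy.1 To see this, apply the above inequality with $\Pi = \Pi_{T-t-1}$ (so that $\mathcal I \Pi = \Pi_{T-t}$) and $a = \pi^*(X_t)$; taking expectations with respect to the optimal policy, $\mathbb E^*$, then gives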

![\begin{aligned} &\mathbb E^* \left[ M_{t+1} - M_t | \mathcal F_t \right] \\ & = \beta^t \mathbb E^* \left[ \beta R(X_{t+1},\Pi_{T-t-1}) + r(X_t, \pi^*(X_t)) - R(X_t,\Pi_{T-t}) \Big| \mathcal F_t \right] \\ & = \beta^t \left( r(X_t,\pi^*(X_t)) + \beta \mathbb E_{X_t,\pi^*(X_t)} \left[ R(\hat{X},\Pi_{T-t-1}) \right] - R(X_t,\Pi_{T-t}) \right) \\ & \leq 0\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/c95176c3690c3603ce5efd2f005643ea.png?w=840)

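Since $M_t$ is a supermartingale, $\mathbb E^*[M_T] \leq M_0$, that is,

$\mathbb E^*_x \left[ \beta^T R(X_T,\Pi_0) + \sum_{t=0}^{T-1} \beta^t r(X_t,\pi^*(X_t)) \right] \leq R(x,\Pi_T)$.

Since rewards are bounded and $\beta < 1$, the term $\beta^T R(X_T,\Pi_0)$ vanishes as $T \to \infty$, while the sum converges to the optimal value. Therefore, as required,

$V(x) \leq \lim_{T \to \infty} R(x,\Pi_T) \leq V(x)$.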

Ex. Write a program that uses policy iteration to find the optimal policy for the robot below:

Ans.
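As with the value iteration exercise, the robot's grid is not reproduced here, so this is a sketch of policy iteration on a made-up 3-by-4 grid under stated assumptions, not the post's program: the random action is uniform over the four moves, each step earns zero reward, an end state pays its reward once and the run then stops, and $\beta = 0.9$. All names are hypothetical. Policy evaluation here uses in-place sweeps to a small tolerance rather than an exact linear solve, so a policy is only switched when the gain clearly exceeds that tolerance.

```python
# Hypothetical 3-by-4 grid (the robot's grid is not reproduced here):
# '.' free cell, '#' wall, '+' / '-' end states with rewards +1 / -1.
GRID = ["...+",
        ".#.-",
        "...."]
MOVES = {"U": (-1, 0), "D": (1, 0), "L": (0, -1), "R": (0, 1)}
END_REWARD = {"+": 1.0, "-": -1.0}
P_RANDOM = 0.8  # w.p. 0.8 the chosen action is replaced by a uniformly random move
BETA = 0.9      # assumed discount factor

CELLS = [(i, j) for i, row in enumerate(GRID) for j, c in enumerate(row) if c != "#"]
ACTIVE = [c for c in CELLS if GRID[c[0]][c[1]] not in END_REWARD]  # cells where the robot acts


def step(cell, move):
    """Deterministic move; hitting a wall or the edge leaves the robot in place."""
    i, j = cell[0] + MOVES[move][0], cell[1] + MOVES[move][1]
    inside = 0 <= i < len(GRID) and 0 <= j < len(GRID[0])
    return (i, j) if inside and GRID[i][j] != "#" else cell


def q_value(V, cell, action):
    """Zero step reward plus the discounted expected value of the next cell."""
    total = 0.0
    for move in MOVES:
        p = P_RANDOM / len(MOVES) + (1 - P_RANDOM if move == action else 0.0)
        total += p * V[step(cell, move)]
    return BETA * total


def evaluate(policy, tol=1e-12):
    """Approximate R(., Pi) by in-place sweeps; end states are worth their reward."""
    V = {c: END_REWARD.get(GRID[c[0]][c[1]], 0.0) for c in CELLS}
    while True:
        delta = 0.0
        for c in ACTIVE:
            new = q_value(V, c, policy[c])
            delta = max(delta, abs(new - V[c]))
            V[c] = new
        if delta < tol:
            return V


def policy_iteration():
    """Alternate evaluation and improvement until no action changes."""
    policy = {c: "U" for c in ACTIVE}  # arbitrary initial stationary policy Pi_0
    while True:
        V = evaluate(policy)
        stable = True
        for c in ACTIVE:
            best = max(MOVES, key=lambda a: q_value(V, c, a))
            # switch only on a clear improvement, so ties or rounding cannot cause cycling
            if q_value(V, c, best) > q_value(V, c, policy[c]) + 1e-9:
                policy[c] = best
                stable = False
        if stable:
            return policy, V
```

Calling `policy, V = policy_iteration()` returns a move for every non-end cell; under these assumptions the cell just left of the +1 end state again picks `R`.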

- Note we are implicitly assuming that an optimal stationary policy exists. We can remove this assumption by considering an $\epsilon$-optimal (non-stationary) policy. However, the proof is a little cleaner under our assumption.