The Simplex Theorem suggests a method for solving linear programs . It is called the Simplex Algorithm.

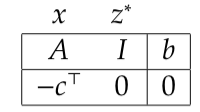

- The tableau in Step 2 is called the Simplex Tableau. It stores all the information required in the Simplex Theorem: matrix

expressed in terms of basis

,

; the basic feasible solution excluding non-zero entries

; the reduced cost vector

, and the cost of the current solution

.

- The bottom row of the simplex tableau is called the objective row.

- Observe that the columns of the matrix

corresponding to the basis

necessary form an identity matrix. Note, by reading along the tableau, we can match each

in this identity matrix to an entry of the column vector

. From this, we can recover the bfs given by basis

,

.

- The Simplex Algorithm also works for inequality constraints. For an optimization with inequality constraints

, when we add slack variables

, we have equality constraints

. Observe for new matrix

,

is a basic solution. Thus, provided

,

is a basic feasible solution.

- In Step 3, we call

the pivot row,

the pivot column, and

the pivot.

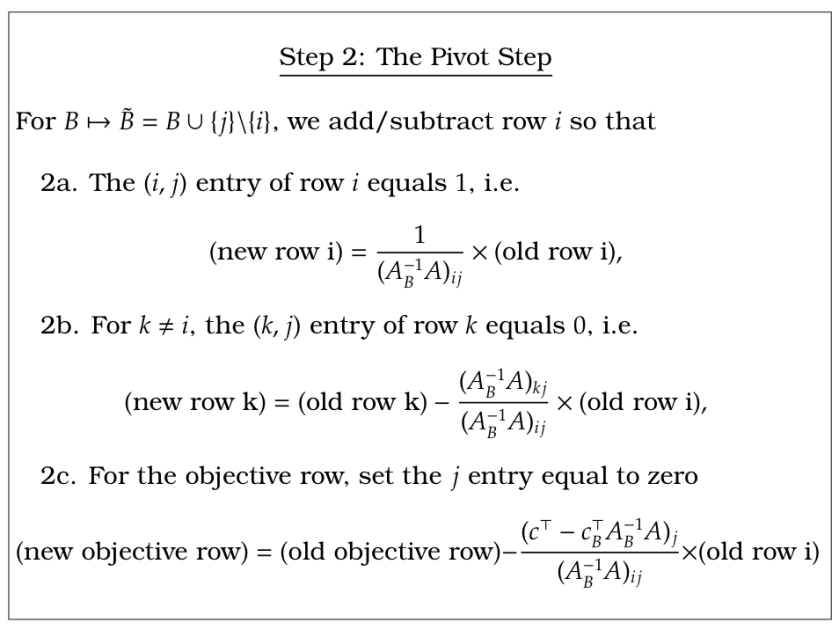

If Step 2 follows Step 3, we need to calculate from

. In other words, we need to apply operation

. We can break down Step 2 into the following routine

- In other words, The matrix

is exactly the matrix formed by multiplying and adding rows of

so that the resulting matrix has an identity matrix in the columns corresponding to basis

. The reduced cost vector

is exactly the vector formed by multiplying and adding rows of

so that there are zeros in the components corresponding to basis

. Because these identity matrix and zero components are necessary, you can always tell if your simplex tableau is in the correct form.

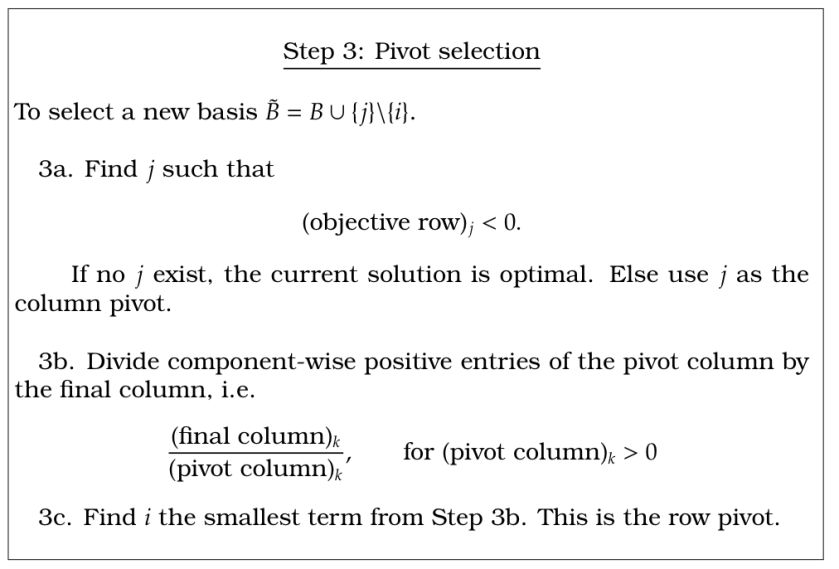

In addition, Step 3 can be expressed in terms of the Simplex Tableau in the following way.

- In Step 3a, we do not include the final entry of the objective row in our calculations.

- In Step 3b, we do not include the entry

, corresponding to the objective row, in our calculations.

Let’s now go through a simple example in detail.

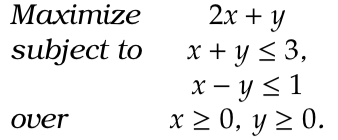

Example 1.

Answer 1. This is the same as

Let’s go through the steps of the algorithm.

Step 1: we find a basis. As we noted inequality constraints mean we can start with all slack variables in the basis.

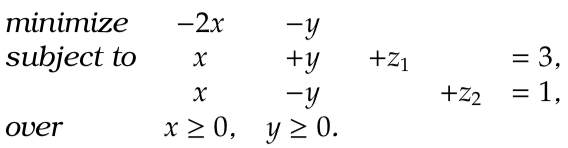

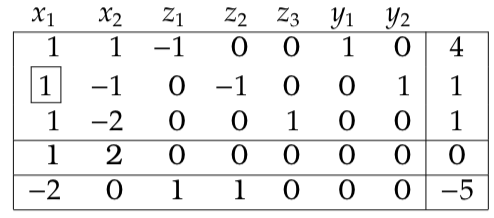

Step 2: write out the simplex tableau:

The basis variables, and

, are starred and our current solution can be read from the final column:

. As required, there is an identity matrix beneath the basis variables; as required, there are zeros beneath basis variables in the objective row. So the tableau is in the correct form.

Step 3: the reduced cost for both variables and

are negative:

and

respectively. Thus, we could pivot on either column. We decide to pivot on

. So,

will be added to the basis. We need to remove a variable from the basis. We perform Step 3b.

The -row divided by the final row gives

and

. As

is smallest we pivot on the second row. For book keeping, we place a box around the pivot. We now repeat to Step 2.

Step 2: For the matrix to be in the correct form, we must multiply and subtract the pivot row from all other rows so that the only non-zero entry in the pivot row is a at the pivot. In this case, the pivot row is in the correct form (the pivot contains a

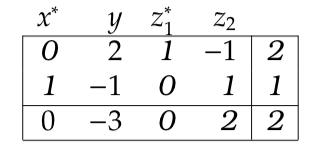

), we deduce the pivot row from the 1st row, and we add two lots of the pivot row to the objective row. This gives a tableau

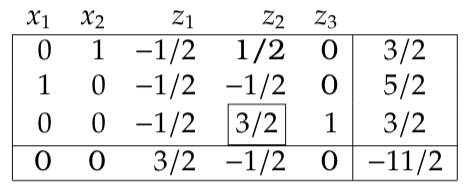

Step 3: The only negative reduced cost is for . This gives the pivot column. The only positive term from Step 3b is from the first row; this is our pivot row.

Step 2: dividing the pivot row by and adding that to the 2nd row and three times to the objective row gives

The reduced costs in the objective row are all positive. Thus, the current solution is optimal. Once again we read this solution from the simplex tableau by associating basis variables with the final column through the ’s in the identity submatrix. In particular,

and

and the value of the maximum is

.

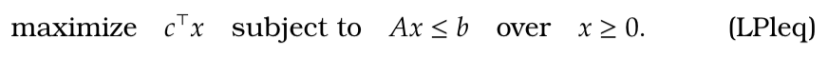

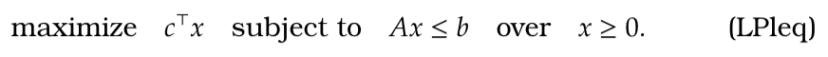

We can make more general observations from our example, for the following optimization:

- Once slack variables are added to , these slack variables can form a basis with

.

- If

then

,

forms a basic feasible solution, and since our objective does not constrain slack variables, the following tableau forms a correctly initialized simplex tableau

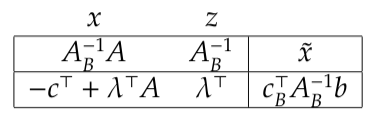

- Now considering this tableau again with respect to another basis,

. Letting

and

, we get

- From the tableau above, once again

gives the values of basic variables; we can also find our current inverse matrix

; we can find our dual variables

.

- The Simplex Algorithm maintains primal feasibility

; as any variable in the basis has a zero in the objective row, the Simplex Algorithm maintains complementary slackness

and

; when the Simplex Algorithm is complete the objective row is positive, so we have dual feasibility

and

.

- In other words, whilst maintaining primal feasibility and complementary slackness, we search for dual feasibility. Once this is found by Linear Programming Lemma 2, our solution is optimal both for the primal and dual problem.

Two Phase Simplex

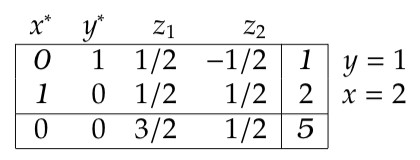

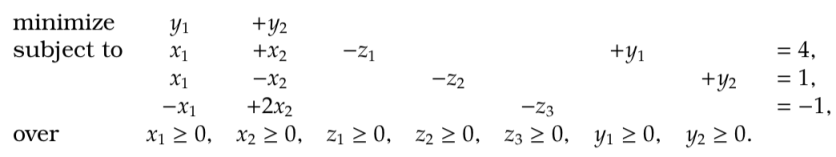

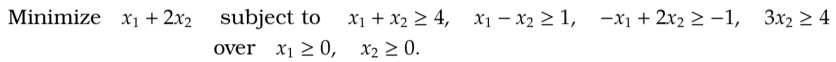

We consider the method of two stage simplex with the following example.  which, adding slack variables, is equivalent to this first problem

which, adding slack variables, is equivalent to this first problem

Observe that, unlike before, setting does not yield a basic feasible solution. In particular, if

then

,

which are not feasible. So, we need to find a bfs to start from. The trick is to solve a different optimization problem whose optimal solution is a bfs for our original problem.

For example, we consider the second problem

- We added an extra variable

to each constraint that was negative in our original problem. The positive variable

tracts the amount we have violated any of our constraints.

- We then chose to minimize

which we interpret as the total amount that we have violated our constraints.

- Note by adding these extra variables there is a bfs with

, where

. So, we can solve the second problem using the Simplex Algorithm.

Both problems have the same number of non-zero entries in a bfs, namely, . There is a feasible solution to the first problem iff the optimal value of the second problem is zero. Thus, once we have solved the second problem, we will be at a basic feasible solution, where

. Removing variables

and

, this is then a basic feasible solution to the first problem: all the violated constraints are satisfied and there are the right number of non-zero terms. So we should solve this problem in two phases:

Phase I: Solve the second problem, where we added additional variables for violated constraints.

Phase II: Removing the additional variables we have a bfs for the first problem. We then solve the second problem.

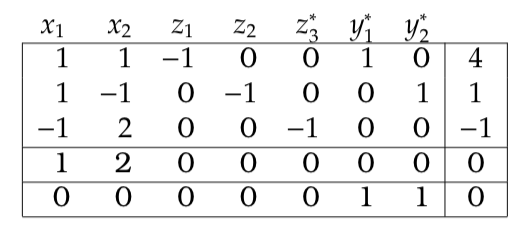

So now we can solve this problem using the Simplex algorithm in two phases:

Phase I:

We have written down the simplex tableau for the second problem and added an additional objective row for the objective of the first problem. Notice the simplex tableau is not in the correct form. First, we want ,

and

to be basis elements. So we need to multiply the 3rd row by

. Second, the Phase I objective row contains non-zero entries above basic feasible variables

,

. So we need to subtract the first and second rows of the simplex tableau from the bottom objective row.

We now proceed to optimize the this tableau whilst treating the additional objective row as though it was just an additional constraint.

We are now at an optimal solution for the the first phase. In particular, we are at a basic feasible solution where and

. We can now remove

and

, the bottom row and solve the second phase.

Phase II:

So we have now solved our original optimization problem. The optimal solution is ,

with optimal value

.

Dual Simplex

Dual simplex is another method for finding a basic feasible solution from a non-feasible basic solution. The Simplex Algorithm maintains feasibility of the primal problem; this is why we minimize ). The Simplex Algorithm searches for a solution that is dual feasible. The dual simplex takes a solution that is dual feasible and searches for a primal feasible solution.

We continue our example from the last section. Here we solved, . We now notice we forgot a constraints . So we want to solve

Our previous optimal simplex tableau was

But now notice, our current solution ,

is no longer feasible as

. We now wish to solve this problem by adding this constraint and its a slack variable to our tableau:

Multiplying the th row by

and then adding

times the first row to the fourth puts the tableau in the correct form for our basis.

Our current basic solution has . So our basis is not feasible for our primal problem ; however, the objective row suggests a dual feasible solution. Note below our slack variables in our , our tableau contained a negative identity matrix

, and

and

.

We select a pivot on rows that maintains dual feasibility and increases our objective to a primal feasible solution i.e. . We choose a row

for which primal feasibility is violated i.e.

. In our example, this is the

th row. We then divide the objective row with the negative entries from this row and find the least negative entries. In our example, the third column gives

and the fifth column gives

. So as

, we pivot on the fifth column. . This gives the tableau

Our current solution is feasible and, as our pivot kept our objective row positive, our solution is now optimal. In particular, .

Linear programming and simplex results

Theorem 1. The extreme points of are exactly the set of basic feasible solutions.

Proof. Recall that we assume each submatrix of

is invertible. Thus for each basis,

there is a unique basic feasible solution (bfs)

. Take this

and suppose

for some

. If

then, because

, then either

for some

or

for some

, which contradicts the feasibility of

and

. If

then

and so by uniqueness of bfs

,

. Thus any bfs

is an extreme point.

Conversely, take any feasible that is not a basic feasible solution. Let

and

as

is not a basic feasible solution

has more than

points. So the submatrix

has linearly dependent columns, i.e. there exists a

such that

. Take

,

where

is sufficiently small so that

. Since

,

and

are feasible and also

. So

is not an extreme point.

Theorem 2. Any linear program with a finite optimum has at least one optimum at an extreme point.

Lemma 1. For a closed convex set , if

is a separating hyperplane, i.e.

, them an extreme point in

is an extreme point in

.

Proof. Assume for

for

. Then

. By assumption

and

. So,

and

. Thus

but

is an extreme point in

so

. So

is an extreme point in

.

Theorem 3 [Carathéodory’s Theorem] Every closed bounded convex set has an extreme point and every point in

is a convex combination of its extreme points. Moreover, the number of extreme points required in this convex combination can be chosen to be less than or equal to

.

Proof. We prove the result by induction on . For

,

is a closed bounded interval and the extreme points are the end points of the interval. Assuming the result holds for dimensions less than

, we prove the result for

. Firstly, note if

has empty interior then

is a subset of an affine space,

and this space must have dimension of

or less otherwise

would have a non-empty interior. In otherwords, if

, then problem is immediately reduced to the

dimensional case.

Take if

is an extreme point then everything is trivial. So let’s suppose

is not an extreme point, in which case there exist an

and

such that

and

. Consider the line

, for

. Let

where

is the largest

such that

and let

where

is the smallest

such that

. Note

and

are well defined because

is closed and bounded. It is also clear that

for some

and

. Thus the applies to

and

. Let

and

be the hyperplanes respectively sepaarating

and

from

. Then

and

convex sets of dimension less than

. Thus by the induction hypothesis both

and

are a convex combination of the extreme points which exist in

and

respectively, which by Lemma 1 are extreme points of

. So,as

is a convex combination of

and

,

is a convex combination of extreme points existing in

.

Now, we reduce the number of extreme points required to express . Suppose

, where

,

and where each

is an extreme point. If

then the vectors

are linearly dependent. Thus there exists

where, defining

, we have

. Notice because

and because by definition

, there must exist some

We, now, consider

. If we take

, then any

maximizing this expression is zero in the sum

. In otherwords,

in now the convex combination of less than

extreme points. Continuing inductively, we find

can be expressed as the convex combination of

points or less.

Proof of Theorem 2. Let be the finite optimum to a linear program with objective

and feasible set given by polytope

. So for hyperplane

,

defines a non-empty polytope of optimal solutions. We aim to apply Caratheodory’s Theorem. Since

, for

for all

. Also note

is closed and bounded for all

. Thus

, the minimum

over

, is well defined and attained. Thus, as

is a closed bounded set and so

is a closed bounded non-empty polytope of optimal solutions, which by Caratheordory’s Theorem must contain an extreme point. Applying Theorem 1 twice – once for

and once for

– this optimal point must be an extreme point in

also.