Foster’s Lemma provides a natural condition to prove the positive recurrence of a Markov chain.

- Let $(X_n : n \in \mathbb Z_+)$ be an irreducible discrete-time Markov chain on a countable state space $\mathcal X$.

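Theorem [Foster’s Lemma] If $L : \mathcal X \rightarrow \mathbb R_+$ is a function with $\mathbb E L(X_0) < \infty$ and such that, for some $k \in \mathbb R_+$ and some $\epsilon > 0$ and $K > 0$, the set $\mathcal X(k) := \{x \in \mathcal X : L(x) \leq k\}$ is finite, and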

![\begin{aligned} &\bE[L(X_n)| X_{n-1}] < K, && \text{if }\quad L(X_{n-1})\leq k, \\ &\bE [ L(X_n) -L(X_{n-1}) | X_{n-1}] < -\epsilon, && \text{if }\quad L(X_{n-1})>k.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/06/0506ba6e0a400a989cad991bdf4343e6.png?w=840)

then $X$ is positive recurrent.
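To see the two conditions in a concrete case, here is a minimal Python sketch that checks them exactly for a hypothetical example chain that does not come from the text. The chain is a random walk on $\{0, 1, 2, \dots\}$, reflected at $0$, that steps up with probability $p$ and down otherwise, with $L(x) = x$. For $x \geq 1$ the drift is $p - (1-p)$, and from $0$ we have $\mathbb E[L(X_1) | X_0 = 0] = p$. So for $p < 1/2$ the choice $k = 0$, $K = 1$ and any $\epsilon < 1 - 2p$ should work. The helper names (`transition`, `conditional_mean`, `check_foster`) are our own. Checking a finite range of states is only a sanity check, not a proof for the infinite state space.

```python
from fractions import Fraction


def transition(x, p):
    """Hypothetical example chain: random walk on {0, 1, 2, ...} reflected at 0.
    Returns (next_state, probability) pairs for one step from state x."""
    q = 1 - p
    if x == 0:
        return [(1, p), (0, q)]
    return [(x + 1, p), (x - 1, q)]


def L(x):
    """Lyapunov function for the example: simply L(x) = x."""
    return x


def conditional_mean(x, p):
    """E[L(X_n) | X_{n-1} = x], computed exactly from the transition probabilities."""
    return sum(prob * L(y) for y, prob in transition(x, p))


def check_foster(p, k, K, eps, states):
    """Check both conditions of Foster's Lemma on a finite list of states.
    This is a sanity check only; it cannot cover an infinite state space."""
    for x in states:
        mean_next = conditional_mean(x, p)
        if L(x) <= k and not mean_next < K:
            return False
        if L(x) > k and not mean_next - L(x) < -eps:
            return False
    return True


print(check_foster(Fraction(1, 3), k=0, K=1, eps=Fraction(1, 6), states=range(100)))  # True
print(check_foster(Fraction(1, 2), k=0, K=1, eps=Fraction(1, 6), states=range(100)))  # False
```

With $p = 1/2$ the drift for $x \geq 1$ is zero, so the second condition fails. This matches the fact that the symmetric reflected walk is null recurrent rather than positive recurrent.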

Consider a process that jumps to $K$ and then, at each unit of time, moves down by $\epsilon$ until it moves below $k$. It then jumps up to $K$ again. For every one unit of time below $k$, this process spends at most $K/\epsilon$ units of time above $k$. So the proportion of time the process is below $k$ is at least $\epsilon/(\epsilon+K)$. In other words, the process is positive recurrent in the states less than or equal to $k$. This is Foster’s Lemma in a nutshell: the conditions of Foster’s Lemma say that, on average, this is the worst that can happen to $L(X_n)$. We need to turn this intuition about an averaged process into a rigorous proof about our stochastic process.
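The deterministic "worst case" process described above is easy to simulate. The following Python sketch is our own illustration, and `sawtooth_fraction_below` is a hypothetical name. The process starts at $K$ and falls by $\epsilon$ per unit of time while it is above $k$. It jumps back to $K$ one time step after reaching $k$ or below. The long-run fraction of time at or below $k$ can then be compared with $\epsilon/(\epsilon+K)$.

```python
def sawtooth_fraction_below(K, k, eps, steps):
    """Fraction of unit time steps that the deterministic sawtooth process
    spends at or below level k, starting from level K."""
    level = K
    below = 0
    for _ in range(steps):
        if level <= k:
            below += 1
            level = K      # one unit of time at or below k, then jump back up to K
        else:
            level -= eps   # deterministic downward drift of eps per unit of time
    return below / steps


K, k, eps = 10, 0, 1
print(sawtooth_fraction_below(K, k, eps, steps=11_000))  # 1/11, about 0.0909
print(eps / (eps + K))                                   # 1/11, about 0.0909
```

With $k = 0$ the descent from $K$ takes exactly $K/\epsilon$ steps, so the two numbers agree. When $k > 0$ the descent is shorter and the fraction is larger. For example, $K = 10$, $k = 3$, $\epsilon = 2$ gives $1/5$, which is above $\epsilon/(\epsilon+K) = 1/6$.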

- We will often refer to the function $L$ used in Foster’s Lemma as a Lyapunov function.

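Proof. For our irreducible discrete-time Markov chain, the limit $\lim_{N\rightarrow \infty} \frac{1}{N} \mathbb E \sum_{n=1}^N \mathbb I[X_{n-1}=x]$ exists for every $x \in \mathcal X$. Moreover, the limit is positive if and only if $x$ is positive recurrent.

Since $L \geq 0$, we can telescope $\mathbb E L(X_N)$. We then condition each increment on $X_{n-1}$ and apply the two conditions of the theorem. For the second sum, note that $\mathbb E[L(X_n) - L(X_{n-1}) | X_{n-1}] \leq \mathbb E[L(X_n) | X_{n-1}] < K$, because $L(X_{n-1}) \geq 0$: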

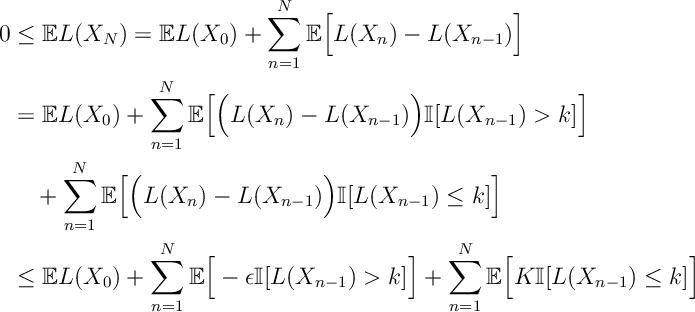

![\begin{aligned} 0&\leq \bE L(X_N)= \bE L(X_0) + \sum_{n=1}^N \bE\Big[ L(X_n)-L(X_{n-1}) \Big]\\ &=\bE L(X_0) + \sum_{n=1}^N \bE\Big[ \Big( L(X_n) -L(X_{n-1}) \Big) \bI[L(X_{n-1}) > k ]\Big] \\ & \quad + \sum_{n=1}^N \mathbb E\Big[ \Big(L(X_n) -L(X_{n-1}) \Big) \mathbb I[L(X_{n-1}) \leq k ] \Big]\\ &\leq \mathbb E L(X_0) + \sum_{n=1}^N \mathbb E\Big[ -\epsilon \mathbb I[L(X_{n-1}) > k ] \Big] + \sum_{n=1}^N \mathbb E\Big[ K \mathbb I[L(X_{n-1}) \leq k ] \Big] &&\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/06/7d2df48fc8ce4876c58d4959f68e9925.png?w=840)

Rearranging this expression, dividing by $N$ and taking limits (recall that $\mathbb E L(X_0) < \infty$), we get that

![\liminf_{N\rightarrow \infty} \frac{1}{N} \mathbb E \sum_{n=1}^N \mathbb I[L(X_{n-1}) \leq k ] \geq \frac{\epsilon}{\epsilon+K}.](https://appliedprobability.blog/wp-content/uploads/2018/06/51852ccc1052d0176ac9e52690695dcf.png?w=840)
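The rearrangement can be written out explicitly. Write $S_N := \sum_{n=1}^N \mathbb E\, \mathbb I[L(X_{n-1}) \leq k]$ (our shorthand) and use $\mathbb I[L(X_{n-1}) > k] = 1 - \mathbb I[L(X_{n-1}) \leq k]$. The last line of the expansion of $\mathbb E L(X_N)$ then becomes

```latex
\begin{aligned}
0 &\leq \mathbb E L(X_0) - \epsilon (N - S_N) + K S_N
   = \mathbb E L(X_0) - \epsilon N + (\epsilon + K) S_N, \\
\frac{S_N}{N} &\geq \frac{\epsilon}{\epsilon + K} - \frac{\mathbb E L(X_0)}{N(\epsilon + K)} .
\end{aligned}
```

Since $\mathbb E L(X_0) < \infty$, the last term vanishes as $N \rightarrow \infty$, which gives the bound on the $\liminf$.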

Thus, since $\mathcal X(k)$ is finite, for some $x \in \mathcal X(k)$,

![\liminf_{N\rightarrow \infty} \frac{1}{N} \mathbb E \sum_{n=1}^N \mathbb I[X_{n-1}=x] \geq \frac{\epsilon}{|\mathcal X(k)|(\epsilon+K)}>0.](https://appliedprobability.blog/wp-content/uploads/2018/06/4328c08eacc512c8bdea91484a06a61c.png?w=840)

In other words, $x$ is positive recurrent, and thus our irreducible Markov chain $X$ is positive recurrent.
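As a numerical illustration, and not part of the proof, this Python sketch simulates a hypothetical example chain. The chain is a random walk on $\{0, 1, 2, \dots\}$, reflected at $0$, that steps up with probability $p = 1/3$ and down otherwise. With $L(x) = x$ it satisfies Foster's conditions for $k = 0$, $K = 1$ and $\epsilon = 1/6$, so $\mathcal X(k) = \{0\}$. The proof then guarantees a long-run fraction of time at $0$ of at least $\epsilon/(|\mathcal X(k)|(\epsilon+K)) = 1/7$. By detailed balance, $\pi(x) = \pi(0)(p/(1-p))^x = \pi(0)2^{-x}$, so the stationary probability of $0$ is actually $1/2$. A single long sample path stands in for the expectation. The seed, run length and function name are arbitrary choices of ours.

```python
import random


def fraction_of_time_at_zero(p, n_steps, x0=0, seed=0):
    """Simulate the reflected random walk on {0, 1, 2, ...} (up with probability p,
    otherwise down, staying at 0 instead of going below it) and return
    (1/N) * sum_{n=1}^{N} I[X_{n-1} = 0] along one sample path."""
    rng = random.Random(seed)
    x = x0
    visits = 0
    for _ in range(n_steps):
        if x == 0:
            visits += 1
        if rng.random() < p:
            x += 1
        elif x > 0:
            x -= 1
    return visits / n_steps


p, eps, K, size_of_Xk = 1 / 3, 1 / 6, 1, 1
lower_bound = eps / (size_of_Xk * (eps + K))   # 1/7 from the proof
estimate = fraction_of_time_at_zero(p, n_steps=200_000)
print(estimate, lower_bound)                   # estimate should be near 1/2
```

The bound from the proof is far from tight here. It only uses the drift conditions, not the detailed structure of the chain.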

There are numerous generalizations of Foster’s Lemma, but all roughly follow this structure.

We consider one such generalization, the Multiplicative Foster’s Lemma. Here we allow the Markov chain to have a downward drift over a period of time proportional to the size of its initial state. This version of Foster’s Lemma will be useful when we take a deterministic process as the limit of our stochastic process.

- Let $(X(t) : t \in \mathbb R_+)$ be an irreducible continuous-time Markov chain on a countable state space $\mathcal X$.

- We let $|\cdot|$ denote a norm on $\mathcal X$.¹

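Theorem [Multiplicative Foster’s Lemma] If $\mathbb E|X(0)| < \infty$ and if, for some $k > 0$, some $\epsilon > 0$ and $K > 0$, and some $T > 0$, the set $\{x \in \mathcal X : |x| \leq k\}$ is finite, and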

![\begin{aligned} &\mathbb E\Big[\big|X(\tau)\big| \Big| X(0)=x_0\Big] < K, && \text{if }\quad |x_0|\leq k,\\ &\mathbb E \Big[ \big|X(T|x_0|)\big| - |x_0| \Big| X(0)=x_0\Big] < -\epsilon |x_0|, && \text{if }\quad |x_0|>k,\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/06/6be83f3a216488992492e64a41dc5036.png?w=840)

where $\tau$ is the next jump time of the Markov chain, then $X$ is positive recurrent.
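Consider a hypothetical example that does not come from the text: the M/M/1 queue with arrival rate $\lambda = 1$, service rate $\mu = 2$ and $|x| = x$. Over the time $T|x_0|$, a long queue drains at rate roughly $\mu - \lambda$. So, when $T(\mu - \lambda) < 1$ and $x_0$ is large, one expects $\mathbb E[|X(T|x_0|)| - |x_0|] \approx -(\mu - \lambda)T|x_0|$. For $|x_0| \leq k$, a single jump changes the queue by at most one, so $K = k + 2$ satisfies the first condition. The Python sketch below estimates the drift by Monte Carlo at a few starting states. It is an illustration under these assumptions, with function names of our own, and not a verification for all $|x_0| > k$.

```python
import random


def mm1_queue_at(x0, horizon, lam, mu, rng):
    """Queue length of an M/M/1 queue at time `horizon`, started from x0.
    Simulated event by event: an exponential holding time, then an arrival
    with probability lam / total_rate, otherwise a departure."""
    x, t = x0, 0.0
    while True:
        total_rate = lam + (mu if x > 0 else 0.0)
        t += rng.expovariate(total_rate)
        if t > horizon:
            return x
        x += 1 if rng.random() < lam / total_rate else -1


def estimated_drift(x0, T, lam, mu, n_runs=2000, seed=1):
    """Monte Carlo estimate of E[|X(T|x0|)| - |x0| | X(0) = x0] with |x| = x."""
    rng = random.Random(seed)
    horizon = T * abs(x0)
    total = sum(mm1_queue_at(x0, horizon, lam, mu, rng) for _ in range(n_runs))
    return total / n_runs - x0


T, lam, mu = 0.5, 1.0, 2.0
for x0 in (20, 50, 100):
    drift = estimated_drift(x0, T, lam, mu)
    print(x0, round(drift, 2), round(drift / x0, 3))  # ratio expected near -(mu - lam) * T = -0.5
```

Smaller $x_0$ lose a little of the drift, because the queue can empty before time $T|x_0|$. This is one reason the theorem only asks for the drift condition above the threshold $k$.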

- When we take a fluid limit, we rescale space and time proportionally to obtain a deterministic limit. Observe that this result, in particular its drift condition over the time $T|x_0|$, is unchanged when we rescale space and time proportionally.
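For the drift condition, this invariance is a one-line computation. Assume the usual fluid scaling $X^c(t) := X(ct)/c$ for $c > 0$ (our notation). Let $x_0$ be the unscaled initial state and $y_0 := x_0/c$ the scaled one, so that $|y_0| = |x_0|/c$ by homogeneity of the norm. Then

```latex
\mathbb E\Big[\big|X^c(T|y_0|)\big| - |y_0| \,\Big|\, X^c(0)=y_0\Big]
  = \frac{1}{c}\,\mathbb E\Big[\big|X(T|x_0|)\big| - |x_0| \,\Big|\, X(0)=x_0\Big]
  < -\frac{\epsilon}{c}\,|x_0| = -\epsilon\, |y_0| ,
```

since $X^c(T|y_0|) = X(cT|y_0|)/c = X(T|x_0|)/c$. The same $T$ and $\epsilon$ therefore work at every scale, with the threshold $k$ replaced by $k/c$.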

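Proof. We consider our Markov chain at specific stopping times: $t_0 = 0$ and, for $n \in \mathbb N$,

$$t_n = \begin{cases} t_{n-1} + T|X(t_{n-1})|, & \text{if } |X(t_{n-1})| > k,\\ \tau(t_{n-1}), & \text{if } |X(t_{n-1})| \leq k,\end{cases}$$

where $\tau(t)$ is the time of the next jump of our Markov chain after time $t$. Similar to Foster’s Lemma,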

![\begin{aligned} 0 &\leq \mathbb E |X(t_N)| = \mathbb E | X(0)| + \sum_{n=1}^N \mathbb E\left[ |X(t_n)| -|X(t_{n-1})|\right]\\ &= \mathbb E | X(0)| + \sum_{n=1}^N \mathbb E\left[ \Big(|X(t_n)| -|X(t_{n-1})|\Big) \mathbb I\big[|X(t_{n-1})| > k \big] \right] \\ & \quad + \sum_{n=1}^N \mathbb E\left[ \Big(|X(t_n)| -|X(t_{n-1})|\Big) \mathbb I\big[|X(t_{n-1})| \leq k \big] \right]\\ &\leq \mathbb E | X(0)| -\epsilon \sum_{n=1}^N \mathbb E\Big[ |X(t_{n-1})| \mathbb I\big[|X(t_{n-1})| > k \big] \Big]+ K \sum_{n=1}^N \mathbb E\Big[ \mathbb I\big[|X(t_{n-1})| \leq k \big] \Big]\\ &\leq \mathbb E | X(0)| -\epsilon k \sum_{n=1}^N \mathbb E\Big[ \mathbb I\big[|X(t_{n-1})| > k \big] \Big]+ K \sum_{n=1}^N \mathbb E\Big[ \mathbb I\big[|X(t_{n-1})| \leq k \big] \Big]\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/06/02ae4b6fc8fa982738fed2d9b31bb69a.png?w=840)

Rearranging, dividing by $N$ and taking limits, we obtain the expression

![\liminf_{N\rightarrow \infty} \frac{1}{N} \mathbb E\Big[ \sum_{n=1}^N \mathbb I\big[|X(t_{n-1})| \leq k \big] \Big] \geq \frac{\epsilon k}{K+\epsilon k}>0.](https://appliedprobability.blog/wp-content/uploads/2018/06/7eff3fe348571fb5dd941d9f0d9aef00.png?w=840)

Thus, $X$ is positive recurrent, as it is positive recurrent in the finite set of states $\{x \in \mathcal X : |x| \leq k\}$.
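The key device in this proof is sampling at the stopping times $t_n$. By the strong Markov property, $X(t_n)$ can be generated from $X(t_{n-1})$ alone. The Python sketch below does this for a hypothetical M/M/1 queue with $\lambda = 1$, $\mu = 2$ and $|x| = x$. The illustrative constants $k = 5$, $T = 0.5$, $\epsilon = 0.25$ and $K = k + 2$ are our choice and are not checked against the drift conditions for every state. The sketch reports the fraction of samples with $|X(t_{n-1})| \leq k$, which the proof bounds below by $\epsilon k/(K + \epsilon k)$.

```python
import random

LAM, MU = 1.0, 2.0  # hypothetical arrival and service rates of an M/M/1 queue


def total_rate(x):
    """Total jump rate out of state x (no departures from an empty queue)."""
    return LAM + (MU if x > 0 else 0.0)


def one_jump(x, rng):
    """State right after the next jump (case |x| <= k: t_n is the next jump time)."""
    return x + 1 if rng.random() < LAM / total_rate(x) else x - 1


def run_for(x, duration, rng):
    """State after `duration` time units (case |x| > k: t_n = t_{n-1} + T|x|)."""
    t = 0.0
    while True:
        t += rng.expovariate(total_rate(x))
        if t > duration:
            return x
        x = one_jump(x, rng)


def fraction_sampled_small(x0, k, T, n_samples, seed=0):
    """(1/N) * sum_{n=1}^{N} I[|X(t_{n-1})| <= k] along one sampled path."""
    rng = random.Random(seed)
    x, small = x0, 0
    for _ in range(n_samples):
        if abs(x) <= k:
            small += 1
            x = one_jump(x, rng)
        else:
            x = run_for(x, T * abs(x), rng)
    return small / n_samples


k, T, eps = 5, 0.5, 0.25
K = k + 2
bound = eps * k / (K + eps * k)  # lower bound from the proof, about 0.15
print(fraction_sampled_small(x0=50, k=k, T=T, n_samples=20_000), bound)
```

Only the sequence of sampled states is generated. The sample times $t_n$ themselves are not needed for this count.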

- We do not really need a norm here, just some notion of distance, but to be safe we assume it is a norm.↩