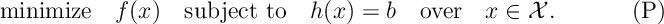

We are interested in solving the constrained optimization problem

- The variables

must belong to the constraint region

, where

is a subset of

.

- The variables must satisfy the functional constraints

, where $latex h:

{\mathcal X}\rightarrow{\mathbb R}^d$ and

{\mathcal X}\rightarrow{\mathbb R}^d$ and .

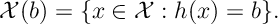

- Variables that belong to the constraint region and satisfy the functional constraints are called feasible and belong to the feasible set

- The variables are judged according to the relative magnitude of the objective function

.

- A feasible solution to the optimization,

, is called optimal when

for all

.

- In general, an optimum

need not exist.

- Inequality constraints

can be rewritten as equality constraints

, where

. Then an optimization of the form can be solved over variables

. The variables

are called slack variables.

, so maximizing is the same as minimizing.

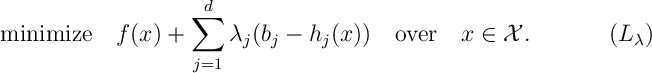

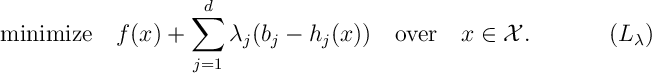

The set could incorporate the functional constraints

, but generally we assume

is a set where the unconstrained optimization,

, is easier to solve. For example,

might be

and

might be solved by differentiating and finding local minima. What makes the problem difficult is the functional constraints. To get some solution, we might penalize going over these constraints. If so, we could remove these constraints and solve a different optimization

- In the above optimization, the objective function

is called the Lagrangian of the optimization problem .

- We call the components of the vector,

, Lagrange multipliers.

- We use

to notate its optimum of , if it exists.

- For each inequality constraint

, a variable

is added and the term

is replaced by

. Because we introduced a new term

, for a finite solution to , we require

and thus, after minimizing over

, we have

. This equality is called complementary slackness.

- If

our optimal Lagrangian solution is also feasible then complementary slackness states

The unconstrained optimization is, in principle, much easier to solve and a good choice of can be used to penalize constraints that are not satisfied. For example, if we solve the optimization problem with optimum

and found that

then, intuitively, we would make

bigger in order to make the price of overrunning constraint

higher. Hopefully, this would then make the solution of closer to . The minimum of the Lagrangian is always smaller.

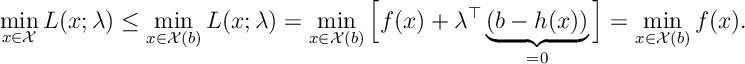

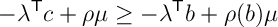

Lemma [Weak Duality] For all

Proof

In fact, if we can make the two solutions equal then we have optimality.

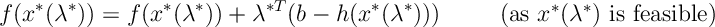

Theorem 1 [Lagrangian Sufficiency Theorem] If there exists Lagrange multipliers and a solution

to

such that

is feasible for then

solves the optimization problem (P).

Proof As is feasible then certainly

. Now,

So, .

This result gives a simple procedure to solve an optimization:

- Take your optimization and, if necessary, add slack variables to make all inequality constraints equalities.

- Write out the Lagrangian and solve optimization for

.

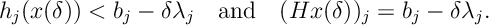

- By solving the constraints

over

, find a

so that

is feasible.By Lagrangian Sufficiency Theorem,

is optimal.

Duality

Since weak duality holds, we want to make the minimized Lagrangian as big as possible. Only then can a feasible Lagrangian optimum be found to solve the optimization . Thus we consider the following optimization,

- Here

and

.

- We call this optimization problem the dual optimization and, in turn, we call the primal optimization.

- Making dependence on

explicit, use

to denote the optimal value of the primal problem and

to denote the optimal value of the dual. By weak duality

. If

then we say strong duality holds.

- Observe,

, if

and

, otherwise. So,

and by definition

. So strong duality corresponds exactly to the saddle point condition that

Essentially, the Lagrangian approach will only be effective when we can find a such that

. This is equivalent to the existence of a supporting hyperplane for

.

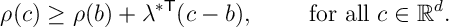

Theorem 2. iff

and

, together satisfy the property

Proof.

So iff

iff

for all

, as required.

- Informally, states that there exists a tangent to

at point

and that the function

lies above this tangent.

- The set

defines a hyperplane containing

and

lies in the half-space above

. So we call this “tangent”

a supporting hyperplane to

at

with slope

.

- Any function

is convex if

, for

. It can be verified that this is equivalent to the condition . Optimization of convex functions may be possible with Lagrangian methods.

- We discuss the relationship between convexity, hyperplanes, duality and Lagrangian optimization in the supplementary section, Section [DHLO].

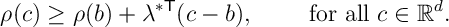

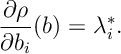

Given , for , we can say that

Thus, if is differentiable at

then

- For a maximization problem, if we interpreted

as the optimal profit (or cost for a minimization problem) made when manufacturing using

units of raw material then can be interpreted as the change in profit made from gaining a small additional quantity of material

and thus

is interpreted at the price the manufacturer is prepared to pay to secure those extra goods.

- From this interpretation, we call the optimal Lagrange multiplier

a shadow price.

As noted above if is convex then we can find a

with which we can apply the Lagrangian approach.

- For a constrained optimization problem we say Slater’s Condition is satisfied if the objective function

is a convex function, if constraint region

is a convex set, if for each equality constraint

the function

is linear, if there exist a feasible solution

such that all inequality constraint are satisfied with strict inequality

.

- To make life easier we assume the functions

and

are continuous at the feasible

assumed in slater’s condition and we assume there exists some non-optimal feasible solution in

.

Theorem 3. If Slater’s Condition holds then is a convex function and thus there exists

such that

.

To prove this we will require the following (intuitively true) result.

Theorem 4. [Supporting Hyperplane Theorem] If is a convex set with non-empty interior and

then there exist a supporting hyperplane

that is a set

with

and

belonging a closed half-space associated with

(i.e.

or

.

We can prove this result later, we will prove Theorem 3.

Proof of Theorem 3. By Slater’s condition the equality constraints are given by a matrix , i.e.

for

. We assume that the image of

has dimension

, otherwise, as row rank

column rank for matricies, we can remove a redundant constraint.1 Consider the set

So, roughly speaking, is all the stuff above

for each

.

We show that is convex. For

,

and

corresponding feasible points used in the definition of

above, consider

and

The three statements above show that belongs to

. So

is convex.

Take . As

, by the Supporting Hyperplane Theorem there exists a

such

Provided we can choose to be strictly positive then

has a supporting hyperplane in the sense of Theorem [LO:OptSHP] and so we are done. It is clear

is positive: if we take

and

for some non-optimal

the above inequality reads

for all

, which is only true if

.

Now we show . Let’s look for a contradiction: if

then the supposting hyperplane would be vertical with respect to the component

and we would have

. Now applying Slater’s condition, there is a point

where the inequality constraints are strict. By continuity of our inequality constraint function and by continuity of linear map

for any

suitably small we can choose

(close to

) such that

By continuity of we can choose

so that

. Thus, it is clear that

but

, which contradicts the earlier assertion that thus

. Thus by taking

we have

Thus, by Theorem 2 as required. $\latex \square $

- We can choose not to remove these redundant constraints but then we have to mess arround with relative interiors, see Section [DHLO].↩