We prove a powerful inequality which provides very tight gaussian tail bounds “” for probabilities on product state spaces

. Talagrand’s Inequality has found lots of applications in probability and combinatorial optimization and, if one can apply it, it generally outperforms inequalities like Azzuma-Hoeffding.

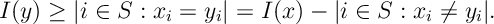

Thrm [Talagrand’ Concentration Inequality] For a measurable subset of the product space

,

and, consequently,

Here,

![\begin{aligned} d(x,A)&= \sup_{\substack{\alpha\in\bR_+^n\\ ||\alpha||_2\leq 1}} \inf_{y\in A} \sum_{k=1}^n \alpha_k \bI[x_k\neq y_k]= \inf_{v\in < V(x,A)>} ||v||_2 \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2017/09/0bc45f0af82c4acb5364fa036a44e720.png?w=840)

where, .

Proof. The duality between the two characterizations of are given in the appendix to this section.

We will prove the inequality holds by induction on . Note for the case

,

so holds since

.1

Now assuming our induction hypothesis up to , we prove the result for the

case. We do so by proving the following claim

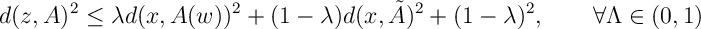

Claim: For ,

and

,

where,

- In equality , we over estimate

by two distances on

: the first, a refined distance using information about

,

; and the second, a cruder distance using only information about

and

,

.

for rectangular sets only.

Proof of claim: We prove the bound by considering the convex combination of points in and

. Note if

is a point in

then there exists a

such that

for each

, also

for the

case. So, if

then

. By a similar easy argument, we see that if

then

. It is also clear that these inclusions extend on convex combinations

and

. Thus we see that if

and

then

Also, using convexity of ,

Optimizing over and

, as required, we get

This completes the proof of our claim and we continue with the proof of Talagrand’s Inequality.

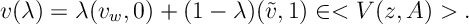

Continuing to write and applying of inequality , we have for the conditional expectation that

For second inequality, above, we apply Holder’s Inequality; in the third inequality, we apply our induction hypothesis; in the fourth inequality, we use the fact that

For second inequality, above, we apply Holder’s Inequality; in the third inequality, we apply our induction hypothesis; in the fourth inequality, we use the fact that , which we prove in the appendix to this section.

Now taking expectations over , and observing

, we gain the required result:

Applications

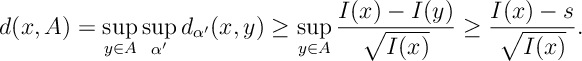

Let’s informally discuss the application of Talagrand’s Inequality and then give a few examples of how to apply the result. First we recall that the Hamming distance is , so Talagrand considers a weighted Hamming distance from the set of

,

. A result of a similar form was known to hold previously to Talagrand’s, namely,2

However the bound is vastly improved by allowing us to optimize over given

: from an event

it is much more possible to deduce that for some

,

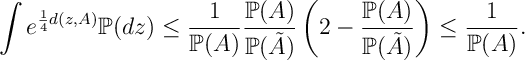

. In particular, we are in a strong position if we can show a bound of the form

![F(x) \geq F(y) - \sum_{i=1}^N \alpha_i^x \bI [x_i\neq y_i] \qquad\text{or}\qquad F(x) \leq F(y) + \sum_{i=1}^N \alpha_i^x \bI [x_i\neq y_i].](https://appliedprobability.blog/wp-content/uploads/2017/09/20945ace0da9b001d94a5db54f278b07.png?w=840)

Note by multiplying by both bounds are equivalent. In both cases, we imagine that, if making a change so some of the compenents of

gives

, then we have a way of estimating the change in

for each component changed. Note the (left) bound above implies

Thus if we can bound above by

, (the smaller the better) and we take an event

then

. Thus, for this choice of

,

implies

. Thus applying Talagrand gives

For median, , inspecting the above bound with

and

and then with

and

yields a bound

At this point, we have a pretty convincing concentration bound about the median of the distribution of .

One might prefer work with the mean of F(x), , rather than the median,

. Note, a Cantelli’s inequality implies that the the mean is withing one standard deviation (see Lemma [Ineqs:MeanMedian]). We can estimate the variance of

from the above concentration inequality: the variance about the mean is always minimal so

So, which gives

and so

So once again we get a tail-bound with the same concentration behaviour.

Longest Increasing Subsequence

Given independent random variables are independent real valued random variables, we consider the problem of finding the following Longest Increasing Subsequence:

Comparing two (similar) sequences and

notice a longest subsequence of

,

could be used as a (long) subsequence for

. That is

We take such that

if

and

otherwise. If we consider the distance

, then we have

which, on the event , gives

Note given we are working with an event of the form we look for an event

that implies

is of a certian size. Since the function

is increasing for

, then

implies

. Applying this then Talagrands inequality gives

For the median of this distibution, then setting

and setting

gives two tail bounds in the following result.

Theorem

Note the bounds do not look not terribly symmetric but, in order to achieve a guaranteed probability in both bounds, we must choose of the same order,

, in both bounds.

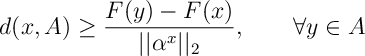

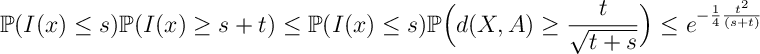

Appendix

We first show the equality of our primal-dual characterization for .

![\begin{aligned} \sup_{\substack{\alpha\in\bR_+^n\\ ||\alpha||_2\leq 1}} \inf_{y\in A} \sum_{k=1}^n \alpha_k \bI[x_k\neq y_k]= \inf_{v\in < U(x,A)>} ||v||_2 \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2017/09/adb9ff9e2fe92a73cbe23418872755d9.png?w=840)

For

It is that this is equivalent to showing that

![\inf_{\lambda\in[0,1]} \frac{1}{4}(1-\lambda)^2 -\lambda \log r \leq \log (2-r).](https://appliedprobability.blog/wp-content/uploads/2017/09/1e35d1264b7b8b596fcbecca4704a095.png?w=840)

The left-hand side is minimized by . Thus, after substituting, we are required to show

We can equivalently expressed this inequality as an integral

![0\geq \int^1_r \frac{1}{x} +\frac{2\log x}{x} -\frac{1}{2-x} dx = \int_r^1 \frac{2}{x(2-x)}\left[ 2 \log x - x+1 - x\log x \right]dx.](https://appliedprobability.blog/wp-content/uploads/2017/09/f7dec8adef91c26f4c2d95502ee033d1.png?w=840)

The term in the square brackets is negative: is concave and

is convex, both terms are equal when

so the rest of the time

. This completes the lemma.

Wow, wonderful weblog layout! How lengthy have you ever been blogging for? you make blogging look easy. The overall look of your site is excellent, as neatly as the content material!

LikeLike