We consider the setting of sequentially optimizing the average of a sequence of functions, so called online convex optimization.

For a convex set and discrete time

, we consider the setting where

- An unknown convex loss function

is set.

- the algorithm picks a point

and incurs a loss

.

- The gradient

is revealed.

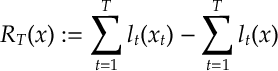

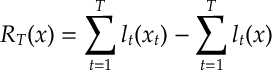

The quantity that we are interested in minimizing is the regret of the aggregate loss between a sequence of choices , and a fixed choice

:

We now define a specific selection rule for , which we called online mirror descent. Each mirror descent algorithm is defined by a convex function

.

Mirror Descent

Initialize by setting by setting , then for

- set

- As described above the algorithm the incurs loss

and observes

.

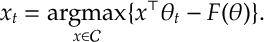

- The algorithm then selects

- Notice by Fenchel-Duality, here that

where

is the Legendre-Fenchel Transform of

. In other words, we don’t need to solve the optimization of

, in principle we can just choose

from an appropriate convex function.

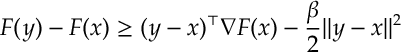

- We will say that

is

-strongly convex if

- We will say that

is

-smooth if it is differentiable and its derivative is Lipschitz continuous with constant

, i.e.

- It is left as an exercise, to show that if

is

-strongly convex then its Legendre-Fenchel Transform

is

– smooth.

Thrm: If is

-strongly convex, then

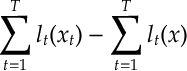

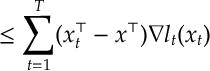

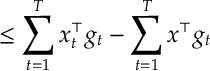

Proof: Notice by the convexity of , we can upper-bound the regret as follows

Where in the equality, we just introduced the notation .

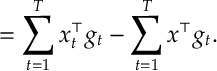

Now notice that given our definition of in ,

as defined in is given by

In some sense our choice of is attempting to optimize the bound on the right-hand side of .

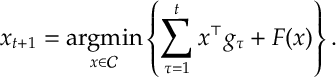

Now since is

-strongly convex, then

is

–smooth.

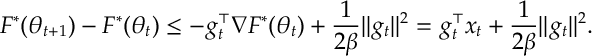

Where we note that, by definition, . Further by the Fenchel-Young Inequality, we have that

where in the last inequality we note the definition of is the sum of

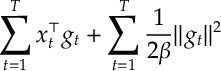

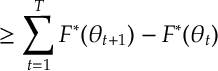

. Summing the interpolation terms in and applying the above bound we have that

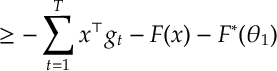

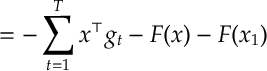

In the last equality, we apply the Fenchel-Young Inequality to once more. Now, rearranging the above inequality and applying our first bound , we have that

as required.