Due to some projects, I’ve decided to start a set of posts on Monte-Carlo and its variants. These include Monte-Carlo (MC), Markov chain Monte-Carlo (MCMC), Sequential Monte-Carlo (SMC) and Multi-Level Monte-Carlo (MLMC). I’ll probably expand these posts further at a later point.

Here we cover “vanilla” Monte-Carlo, importance sampling and self-normalized importance sampling:

The idea of Monte-Carlo simulation is quite simple. You want to evaluate the expectation of $f(\theta)$, where $\theta$ is some random variable (or set of random variables) with distribution $\mu$ and $f$ is a real-valued function. Note that the expectation is really an integral:

![\begin{aligned}

\mathbb E_{\theta \sim \mu} [ f(\theta)] = \int f(\theta) d \mu(\theta)\,.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/7cd26e491cca1d47409109f6b542ce2d.png)

So we can think of Monte-Carlo as evaluating an integral.

Given that you can sample $\theta_1, \dots, \theta_N$ from $\mu$, the expectation $\mathbb E_{\theta \sim \mu} [f(\theta)]$ can be approximated by

![\begin{aligned}

\hat{\mathbb E}_{N} \left[ f(\theta) \right] := \frac{1}{N} \sum_{i=1}^N f(\theta_i) \, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/eddb88b161acc97b5af5d910b11ff180.png)

In particular, if the samples are i.i.d., the strong law of large numbers gives that, with probability $1$,

![\begin{aligned}

\frac{1}{N} \sum_{i=1}^N f(\theta_i) \xrightarrow[N\rightarrow \infty]{} \mathbb E_{\theta \sim \mu} [ f(\theta)] \, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/7fad5a01e891431761d34f938946d9c2.png)

Also it holds that

![\begin{aligned}

\label{MCMC:rate}

\mathbb E \left[

\left(

\hat{\mathbb E}_N [f(\theta)] - \mathbb E_{\theta \sim \mu} [f(\theta)]

\right)^2

\right]^{\frac{1}{2}}

\leq

\frac{

\mathbb V(f(\theta))^{\frac{1}{2}}

}{

\sqrt{N}

}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/ab8a809cc855ee9fe6058c78c8109e08.png)

(since the variance satisfies $\mathbb V(X + Y) = \mathbb V(X) + \mathbb V(Y)$ for $X$ and $Y$ independent). So here we see the errors go down at rate $1/\sqrt{N}$.
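The $1/\sqrt{N}$ rate can be checked numerically. The sketch below is only an illustration under assumed choices that are not part of the post: $f(\theta) = \theta^2$ with $\theta \sim U[0,1]$ (so the true expectation is $1/3$), and hypothetical helper names `mc_estimate` and `rmse`. It repeats the estimator many times and compares the empirical root-mean-square error with $\mathbb V(f(\theta))^{1/2}/\sqrt{N}$.

```python
import math
import random


def mc_estimate(f, sampler, n, rng):
    """Plain Monte-Carlo average of f over n i.i.d. draws from sampler."""
    return sum(f(sampler(rng)) for _ in range(n)) / n


def rmse(f, sampler, truth, n, repeats, rng):
    """Empirical root-mean-square error of the n-sample estimator."""
    sq_errors = [
        (mc_estimate(f, sampler, n, rng) - truth) ** 2 for _ in range(repeats)
    ]
    return math.sqrt(sum(sq_errors) / repeats)


rng = random.Random(0)
f = lambda theta: theta ** 2            # assumed test function
sampler = lambda r: r.random()          # theta ~ U[0, 1]
truth = 1.0 / 3.0                       # E[theta^2] for theta ~ U[0, 1]
# V(theta^2) = E[theta^4] - E[theta^2]^2 = 1/5 - 1/9
std_f = math.sqrt(1.0 / 5.0 - 1.0 / 9.0)

results = {}
for n in (100, 400, 1600):
    results[n] = rmse(f, sampler, truth, n, repeats=500, rng=rng)
    # Second printed column is the theoretical value V(f)^{1/2} / sqrt(N).
    print(n, results[n], std_f / math.sqrt(n))
```

Each time $N$ is multiplied by four, the error should roughly halve, which is the behaviour the bound predicts.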

A Classical Example. Let $\theta = (\theta_1, \theta_2)$ where $\theta_1, \theta_2 \sim U[0,1]$ are independent. Let

![\begin{aligned}

f(\theta ) = \mathbb I [ \theta_1^2 + \theta_2^2 \leq 1]\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/b66128e5ef201a37e5ad7c33cf332194.png)

Note that the area of the quarter circle is $\pi/4$. Then

![\begin{aligned}

\frac{1}{N} \sum_{i=1}^N \mathbb I [ \theta_1^2 + \theta_2^2 \leq 1]

\xrightarrow[N\rightarrow\infty ]{} \frac{\pi}{4} \,.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/1e5b2b31fb5ab258be3cb66212ef3fd5.png)

The rate of convergence in this example is pretty atrocious when compared with numerical methods. However, the example gets the main idea across: there is some difficult-to-calculate quantity (namely $\pi$), we generate random variables $\theta_1, \theta_2$, we do a calculation to get a random variable of interest $f(\theta)$, and then we repeat until we get a good average. The method is extremely simple and generalizable (to situations where other numerical methods are not readily available).
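The quarter-circle example takes only a few lines of code. This is a minimal sketch using Python's standard `random` module; the function name `estimate_pi`, the sample size and the seed are arbitrary choices for illustration.

```python
import random


def estimate_pi(n, seed=0):
    """Estimate pi as 4 times the fraction of uniform points in the quarter circle."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        theta_1, theta_2 = rng.random(), rng.random()   # independent U[0, 1]
        if theta_1 ** 2 + theta_2 ** 2 <= 1.0:          # f(theta) = I[...]
            hits += 1
    # hits / n approximates pi / 4, so rescale by 4.
    return 4.0 * hits / n


pi_hat = estimate_pi(200_000)
print(pi_hat)
```

With $N = 200{,}000$ the standard error of the estimate is about $4\sqrt{(\pi/4)(1-\pi/4)/N} \approx 0.004$, so only the first couple of decimal places can be trusted.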

Importance Sampling.

If we want to calculate

![\begin{aligned}

\mathbb E_{\mu} \left[ Z \right] \qquad\text{where}\qquad Z=f(\theta)\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/f451624a1188c8a9ae50ad9d7c1c4f51.png)

we don’t need to sample from $\mu$; we can sample from another distribution $\nu$ instead (and this can help improve convergence). We can use $\nu$ instead of $\mu$ because

![\begin{aligned}

\mathbb E_{\theta \sim \mu} \left[ Z\right] & = \int f(\theta) d \mu (\theta)

\notag

\\

& = \int f(\theta) \frac{d \mu}{d \nu} (\theta) d\nu (\theta)

\notag

\\

&= \mathbb E_{\theta\sim \nu} [\tilde Z]

\label{MC:EZ}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/e1a195e316f75864d5b21401154ff9f9.png)

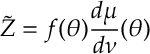

where $\tilde Z = f(\theta) \frac{d\mu}{d\nu}(\theta)$. (Above, $\frac{d\mu}{d\nu}$ is the probability density function (pdf) of $\mu$ over the pdf of $\nu$ for continuous random variables, or the probability mass function of $\mu$ over that of $\nu$ for discrete random variables, and in general is the Radon-Nikodym derivative.)

Thus when applying importance sampling, we sample $\theta_1, \dots, \theta_N \sim \nu$ and we perform the estimate

![\begin{aligned}

\hat{\mathbb E}_N[\tilde Z] =\frac{1}{N}\sum_{i=1}^N f(\theta_i) \frac{d \mu}{d \nu}(\theta_i)\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/c569b92ae00864fa5488a866f5ffd5fa.png)
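To make the estimator concrete, here is a sketch on an assumed example that is not from the post: $\mu = N(0,1)$, $f(\theta) = \mathbb I[\theta > 4]$, and proposal $\nu = N(4,1)$. For this pair the likelihood ratio is $\frac{d\mu}{d\nu}(\theta) = \exp(-4\theta + 8)$. The function names are hypothetical.

```python
import math
import random


def importance_sampling_tail(n, shift=4.0, seed=0):
    """Estimate P(theta > shift) for theta ~ N(0, 1), sampling from N(shift, 1)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n):
        theta = rng.gauss(shift, 1.0)            # theta_i ~ nu = N(shift, 1)
        f = 1.0 if theta > shift else 0.0        # f(theta) = I[theta > shift]
        # d mu / d nu = phi(theta) / phi(theta - shift)
        #             = exp(-shift * theta + shift^2 / 2)
        weight = math.exp(-shift * theta + 0.5 * shift * shift)
        total += f * weight
    return total / n


def naive_tail(n, shift=4.0, seed=0):
    """Vanilla Monte-Carlo estimate of the same probability, sampling from mu."""
    rng = random.Random(seed)
    return sum(1.0 for _ in range(n) if rng.gauss(0.0, 1.0) > shift) / n


exact = 0.5 * math.erfc(4.0 / math.sqrt(2.0))    # 1 - Phi(4), about 3.17e-5
is_hat = importance_sampling_tail(100_000)
naive_hat = naive_tail(100_000)
print(exact, is_hat, naive_hat)
```

Sampling from $\mu$ directly, only a handful of the $100{,}000$ draws (if any) land above $4$, so the vanilla estimate is very noisy, whereas about half of the draws from $\nu$ contribute to the importance sampling estimate.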

The following lemma, although not entirely practical, gives good insight into why importance sampling can help.

Lemma [the Perfect Importance Sampler] If $Z > 0$ and we sample from $\nu$ with

![\begin{aligned}

\frac{d\mu}{d \nu} = \frac{\mathbb E_\mu [ Z ]}{Z}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/47ce12e7da0e13bf08fa794e2b610c0b.png)

then the estimator $\tilde Z$ is such that $\mathbb V_\nu(\tilde Z) = 0$.

Proof.

![\begin{aligned}

\mathbb E_{\nu} [ \tilde Z^2 ]

=

\mathbb E_\mu \left[

Z^2

\frac{\mathbb E_\mu [Z]}{Z}

\right]

=

\mathbb E_\mu [ Z]^2

=

\mathbb E_\nu [ \tilde Z]^2 \, ,\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/4660d97e187783dc462032ab1efc1d61.png)

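where the last equality follows by the identity $\mathbb E_\mu [Z] = \mathbb E_\nu [\tilde Z]$ derived above. Therefore $\mathbb V_\nu(\tilde Z) = \mathbb E_\nu [\tilde Z^2] - \mathbb E_\nu [\tilde Z]^2 = 0$.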

The above suggests that we should choose $\theta$ with probability proportional to $Z = f(\theta)$ relative to $\mu$ (that is, $\frac{d\nu}{d\mu} \propto Z$) to get low variance. Of course, we don’t know $\mathbb E_\mu [Z]$ in advance, so we cannot sample in this way. However, in practice, any sampling mechanism that concentrates selection around the area of interest would likely have a good impact on performance. Indeed, importance sampling can substantially improve on sampling directly from the underlying distribution $\mu$.
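As a toy illustration of the lemma, take an assumed example where the perfect sampler can be written down: $\mu = U[0,1]$ and $f(\theta) = \theta$, so $\mathbb E_\mu[Z] = 1/2$. The perfect proposal $\nu$ then has density $2\theta$ on $[0,1]$, which can be sampled by inverse transform as $\sqrt{U}$, and $\frac{d\mu}{d\nu}(\theta) = \frac{1}{2\theta}$. The sketch below (with hypothetical function name) shows every weighted sample equals $1/2$ up to floating-point rounding.

```python
import math
import random


def perfect_sampler_draws(n, seed=0):
    """Weighted samples Z~ = f(theta) * dmu/dnu(theta) under the perfect proposal."""
    rng = random.Random(seed)
    draws = []
    for _ in range(n):
        u = 1.0 - rng.random()          # uniform on (0, 1], avoids theta = 0
        theta = math.sqrt(u)            # theta ~ nu, density 2 * theta on [0, 1]
        f = theta                       # Z = f(theta) = theta
        weight = 1.0 / (2.0 * theta)    # dmu/dnu = E_mu[Z] / Z = (1/2) / theta
        draws.append(f * weight)
    return draws


draws = perfect_sampler_draws(1_000)
print(min(draws), max(draws))
```

Note that writing down the weight required knowing $\mathbb E_\mu[Z] = 1/2$, which is exactly the quantity being estimated; this is why the perfect sampler is a guide rather than a method.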

Self-Normalized Importance Sampling.

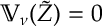

In importance sampling, we apply a weight $w(\theta_i)$ to each sample; here we know that $w(\theta) = \frac{d\mu}{d\nu}(\theta)$. However, sometimes we only know these weights up to some constant (i.e. we don’t know the correct normalizing constant, which happens a lot in Bayesian statistics). In that case, we can renormalize with the following self-normalized importance sampling estimator:

![\begin{aligned}

\mathbb E_{\mu} [f(\theta) ]

\approx \hat{\mathbb E}[f(\theta) ] = \frac{\sum_{i=1}^N f(\theta_i ) w(\theta_i) }{\sum_{i=1}^N w(\theta_i) } \, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/5eda3b9626ffb2e2157457c25fce80ee.png)

So long as the weights remain bounded, the rate of convergence is comparable to that of vanilla Monte-Carlo.
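A sketch of the self-normalized estimator follows, under assumptions chosen only for illustration: the target $\mu$ is a standard normal known only through the unnormalized density $\exp(-\theta^2/2)$, the proposal $\nu$ is $N(0, 2^2)$ (wider than the target, so the weights are bounded), and $f(\theta) = \mathbb I[|\theta| \leq 1]$ is a bounded test function. All function and variable names are hypothetical.

```python
import math
import random


def self_normalized_is(f, unnorm_target, proposal_sample, proposal_pdf, n, rng):
    """Return sum_i f(theta_i) w(theta_i) / sum_i w(theta_i) with theta_i ~ nu."""
    numerator = 0.0
    denominator = 0.0
    for _ in range(n):
        theta = proposal_sample(rng)
        # Weight known only up to a constant: unnormalized target over proposal pdf.
        w = unnorm_target(theta) / proposal_pdf(theta)
        numerator += f(theta) * w
        denominator += w
    return numerator / denominator


sigma = 2.0  # proposal standard deviation (assumed)
unnorm_target = lambda t: math.exp(-0.5 * t * t)   # N(0, 1) without 1/sqrt(2 pi)
proposal_sample = lambda r: r.gauss(0.0, sigma)
proposal_pdf = lambda t: (
    math.exp(-0.5 * (t / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))
)
f = lambda t: 1.0 if abs(t) <= 1.0 else 0.0        # bounded test function

rng = random.Random(0)
snis_hat = self_normalized_is(
    f, unnorm_target, proposal_sample, proposal_pdf, 100_000, rng
)
exact = math.erf(1.0 / math.sqrt(2.0))             # P(|theta| <= 1) under N(0, 1)
print(snis_hat, exact)
```

The missing constant $1/\sqrt{2\pi}$ appears in both the numerator and the denominator, so it cancels in the ratio; this is why the weights only need to be known up to a constant.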

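Proposition. If the weights are bounded, $\bar w(\theta) \leq w_{\max}$ where $\bar w = \frac{d\mu}{d\nu}$ is the correctly normalized weight, then for all bounded functions $f$ with $|f(\theta)| \leq f_{\max}$ it holds that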

![\begin{aligned}

||\hat{\mathbb E}[f(\theta_i) ] - \mathbb E_\mu [ f(\theta)]||_{L_2}

\leq

2 \frac{f_{\max} w_{\max}}{\sqrt{N}}\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/fdbba877867eb7f22c08cbfa98cdf3ed.png)

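Proof. Note that for $\bar w(\theta) := \frac{d\mu}{d\nu}(\theta)$, the correctly normalized weights (a constant multiple of $w(\theta)$), we have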

![\begin{aligned}

\frac{\sum_{i=1}^N f(\theta_i ) w(\theta_i) }{\sum_{i=1}^N w(\theta_i) } - \frac{\sum_{i=1}^N f(\theta_i ) \bar w(\theta_i) }{ N }

=

\left[ \frac{\sum_{i=1}^N f(\theta_i ) \bar w(\theta_i) }{\sum_{i=1}^N \bar w(\theta_i) } \right]

\left\{

1 - \frac{\sum_i \bar w(\theta_i)}{N}

\right\}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/51bbdc85e413d979bd5e7e85feead90b.png)

Note that the term in square brackets is bounded by $f_{\max}$. Thus applying this equality we have that

![\begin{aligned}

& ||\hat{\mathbb E}[f(\theta_i) ] - \mathbb E_\mu [ f(\theta)]||_{L_2}

\notag

\\

\leq &

f_{\max}

\left\|

1 - \frac{\sum_i \bar w(\theta_i)}{N}

\right\|_{L_2}

+

\left\|

\frac{\sum_i \bar w(\theta_i) f(\theta_i)}{N}

-

\mathbb E [f(\theta) ]

\right\|_{L_2}

\label{MCMC:Ieq}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/da83320eb2d15f8bcc73c0a30c04dbda.png)

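Now notice that, since $\mathbb E_\nu [\bar w(\theta) f(\theta)] = \mathbb E_\mu [f(\theta)]$ and the samples are independent, we have that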

![\begin{aligned}

\left\|

\frac{\sum_i \bar w(\theta_i) f(\theta_i)}{N}

-

\mathbb E [f(\theta) ]

\right\|_{L_2}

= \sqrt{ \frac{\mathbb V_{\nu}(\bar w(\theta)f(\theta))}{N} } \leq \frac{w_{\max} f_{\max} }{\sqrt{N}}\,.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/94a5ac6649a6ebe5a9ed80be0a328030.png)

The above inequality applies to both terms in the preceding bound (by taking $f \equiv 1$ for the first term). Thus we have, as required, that

![\begin{aligned}

||\hat{\mathbb E}[f(\theta_i) ] - \mathbb E_\mu [ f(\theta)]||_{L_2}

\leq

2 \frac{f_{\max} w_{\max}}{\sqrt{N}}\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/05/fdbba877867eb7f22c08cbfa98cdf3ed.png)

References

Monte-Carlo is by now a very standard method. Buffon’s Needle and the calculation of $\pi$ by Ulam, Von Neumann and coauthors are classical early examples; see Metropolis. The texts of Kroese et al. and Asmussen and Glynn provide good textbook accounts.

Metropolis, N. “The Beginning of the Monte Carlo Method.” Los Alamos Science 15 (1987): 125-130.

Asmussen, Søren, and Peter W. Glynn. Stochastic Simulation: Algorithms and Analysis. Vol. 57. Springer Science & Business Media, 2007.

Kroese, Dirk P., Thomas Taimre, and Zdravko I. Botev. Handbook of Monte Carlo Methods. Vol. 706. John Wiley & Sons, 2013.