Lyapunov functions are an extremely convenient device for proving that a dynamical system converges. We cover:

- The Lyapunov argument

- La Salle’s Invariance Principle

- An Alternative argument for Convex Functions

- Exponential Convergence Rates

- For some continuous function

, we suppose

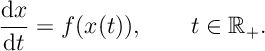

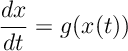

obeys the differential equation

- A Lyapunov function is a continuously differentiable function

with unique minimum at

such that

- We add the additional assumption that

is a compact set for every

.

Theorem 1. If a Lyapunov exists for differential equation then as

and

![x(t)\xrightarrow[t\rightarrow\infty]{}x^*.](https://appliedprobability.blog/wp-content/uploads/2020/02/162b01039865e9b44bfa2cf712203479.png?w=840)

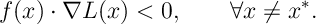

Proof. Firstly,

So is decreasing. Suppose it decreases to

. By the Fundamental Theorem of Calculus

![\tilde{L}-L(x(t))=\int_t^\infty \frac{d L(x(s))}{ds} ds \xrightarrow[t\rightarrow\infty]{} 0](https://appliedprobability.blog/wp-content/uploads/2020/02/12e0fac383daa829a92b7cecbfb8dd13.png?w=840)

Thus we can take a sequence of times such that

as

. As

is compact, we can take a subsequence of times

,

such that

converges. Suppose it converges to

. By continuity,

Thus by definition . Thus

and thus by continuity of

at

we must have

QED.

- One can check this proof follows more-or-less unchanged if

, the minimum of

, is not unique.

La Salle’s Invariance Principle

We generalize and strengthen the Lyapunov convergence result in Theorem 1. Here we no longer consider convergence to a unique global minimum of . Instead, we find convergence to points

The set

The set is called the set of invariant points. Notice, under the conditions of Theorem 1,

. In general,

contains all local [and global] minima of

. If the dynamics exclude invariant points which are non-local minima [for instance by taking

] then

is exactly the set of local minima. The result which proves convergence to invariant points is called Salle’s Invariance Prinicple.

We assume the following

- The process

,

obeys the o.d.e.

for a continuous function.

where

is a compact set such that if

then

for all

.

- The Lyapunov function

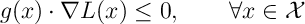

is a continuously differentiable function such that

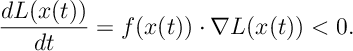

- and we let

La Salle’s Invariance Prinicple is as follows1

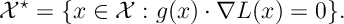

Theorem 2. [La Salle’s Invariance Prinicple] As ,

convergences to

uniformly over initial conditions

. That is:

such that

Proof. If belongs to a compact set then

for some

. For

, we let

. Since

and

, for time

is must be that

for all

. [This is the main part of the argument completed.]

It is reasonable to assume that for

suitably small then

must be close to

. This is true and to show this we prove the following claim: for

such that if

then

for some

. The proof is a fairly standard analysis argument: suppose the claim is not true, then there exists a sequence

such that

and

for all

. Since

is compact

over some subsequence

. By continuity

and

for all

, which is a contradiction since

means

. This contradiction thus such thata there there exists a value

such that for all

such that

implies

. QED.

Remark. We assume for some compact set

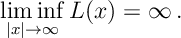

. Notice a natural condition that implies compactness is that

Specifically this implies that is compact and by assumption that

is decreasing, if

then

for all

.

Convex Lyapunov Functions

Next if we assume a bit more about we can ask more about the rate of convergence. We assume that

is convex,

for some

Note, before we proceed recall that is a convex function iff

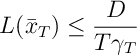

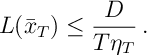

Theorem 3. Given the assumptions itemized above,

where .

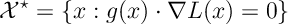

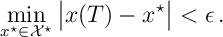

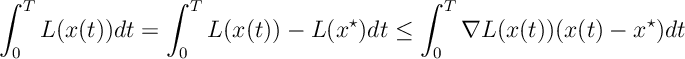

Proof. By Jensen’s inequality,

So we analyse the integral of .

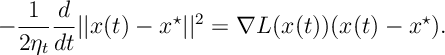

Notice that since we have

Substituting this into the integral above gives

![\begin{aligned} \int_0^T L(x(t))dt &\leq - \int_0^T \frac{1}{2\eta_t}\frac{d}{dt} ||x(t) - x^\star||^2 dt \\ &= -\left[\frac{1}{2\eta_t}||x(t) - x^\star||^2 \right]_0^T + \int_0^T \frac{1}{2}||x(t) - x^\star||^2\frac{d \eta^{-1}}{dt} dt \\ & \leq \frac{D}{2\eta_T} + \frac{D}{2\eta_T} = \frac{D}{\eta_T}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2020/02/c152d498cc9fcb21ced92d78be3da5fb.png?w=840) Finally, applying this to our Jensen bound on

Finally, applying this to our Jensen bound on gives

QED.

Exponential Convergence

We now place some assumptions where we can make further comments about rates of convergence.

If we further assume that and

satisfy the conditions

for some

.

such that

.

.

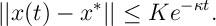

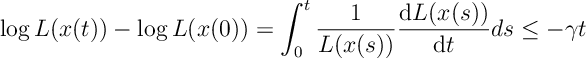

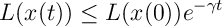

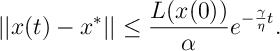

Theorem 5. There exists a constants such that for all

Proof.

So long as ,

, thus dividing by

and integrating gives

Rearrganging gives

This gives exponential convergence in and quick application of the bound in the 2nd assumption gives

QED.

- We can assume the 2nd assumption only holds on a ball arround

. We have convergence from Theorem [Lya:ConvThrm], so when

is such that assumption 2 is satisfied we can then apply the same analysis for an exponential convergence rate. Ensuring the 2nd assumption locally is more easy to check, eg. check

is positive definite at

.

References

The Lyapunov method goes back to Lyapunov in (1892). Extensions were considered by La Salle. A widely used textbook treatment is Khalil. Applications to internet congestion control are given by Srikant. Theorem 3 is a differential equation version of the proof of Zinkevich (2003).

H. Khalil. Nonlinear Systems. Pearson Education. Prentice Hall, 2002. ISBN 9780130673893.

J. LaSalle. Some extensions of liapunov’s second method. IRE Transactions on circuit theory, 7(4):520–527, 1960.

A. M. Lyapunov. The general problem of the stability of motion. International journal of control, 55(3):531–534, 1992.

R. Srikant. The mathematics of Internet congestion control. Springer Science & Business Media, 2012.

Zinkevich, M. (2003). Online Convex Programming and Generalized Infinitesimal Gradient Ascent. ICML, 928–936.

- The original result of La Salle proves point-wise convergence rather than uniform convergence, other than this the proof closely follows La Salle’s argument.↩

One thought on “Lyapunov Functions”