We review a method for finding fixed points then extend it to slightly more general, modern proofs. This is a much more developed version of an earlier post. We now cover the basic Robbin-Monro proof, Robbins-Siegmund Theorem, Stochastic Gradient Descent and Asynchronous update (as is required for Q-learning).

Often it is important to find a solution to the equation

by evaluating at a sequence of points. For instance Newton’s method would perform the updates

. However, Robbins and Monro consider the setting where we cannot directly observe

but we might observe some random variable whose mean is

. Thus we observe

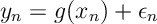

and hope solve for . Notice in this setting, even if we can find

, Newton’s method may not converge. The key idea of Robbins and Monro is to use a schema where

where we chose the sequence so that

Before proceeding here are a few different use cases:

- Quartiles. We want to find

such that

for some fixed

. But we can only sample the random variable

.

- Regression. We preform regression

, but rather than estimate

we want to know where

.

- Optimization. We want to optimize a convex function

whose gradient is

. Assume that

for some large

. To find the optimum at

we randomly sample (uniformly)

whose gradient,

, is an bias estimate of

.

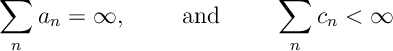

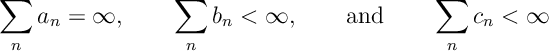

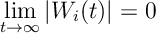

The following result contains the key elements of the Robbins-Monro proof

Prop 1. Suppose that is a positive sequence such that

where and

are positive sequences such that

then .

Proof. We can assume that equality holds, i.e., . We can achieve this by increasing

or decreasing

in the inequality ; neither of which effect the conditions on

and

, .

Now for all we have the following lower-bound

Since it must be that

. Thus since both sums converge it must be that

converges. Finally since

and

it must be that

.

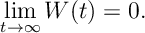

The following proposition is a Martingale version of the above result.

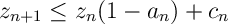

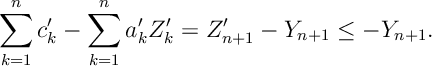

Thrm 1 [Robbins-Siegmund Theorem] If

![\label{RM:RS} \mathbb E [Z_{n+1} | \mathcal F_n ] \leq (1-a_n+b_n) Z_n +c_n](https://appliedprobability.blog/wp-content/uploads/2019/01/c97d02865b042c6d3f7050b087912ed5.png?w=840)

for positive adaptive RVs such that with probability 1,

then .

Proof. The results is some manipulations analogous to the Robbins-Monro proof and a bunch of nice reductions to Doob’s Martingale Convergence Theorem.

First note the result is equivalent to proving the result with for all

. If we divide both sides of by

we get

![\mathbb E [Z'_{n+1} | \mathcal F_n ] \leq (1-a'_n) Z'_n +c'_n\,,](https://appliedprobability.blog/wp-content/uploads/2019/01/930a5703bbcddac7fdc5d7381666fa7a.png?w=840)

where ,

and

. Notice since

converges then so does

. Thus

,

and

have the same convergence properties as those required for

,

and

. Thus, we now assume

for all

.

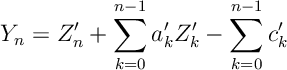

Now notice

is a super-martingale. We want to use Doob’s Martingale convergence theorem; however, we need to apply a localization argument to apply this. Specifically, let . This is a stopping time. Notice

So is a super-martingale and below by

. Thus by Doob’s Martingale Convergnce Theorem,

exists for each

, and

for some

, since

. Thus

exists.

Now notice

So like in the last proposition, since and

exists, we see that

converges. And thus

converges.

Finally since we assume and we know that

it must be that

converges to zero.

Stochastic Gradient Decent

Suppose that we have some function

![F(\theta) = \mathbb E_X [ f(X ; \theta) ]](https://appliedprobability.blog/wp-content/uploads/2019/01/7cf42f924dc51a486e9fe7aa505e3dee.png?w=840)

that we wish to minimize. We suppose that the function is known and so is its gradient

, where

is the gradient of

. The difficulty is that we do not have direct access to the distribution of

, but we can draw random samples

. We can use the Robbins-Monro Schema to optimize

. Specifically we take

where

where and

. The above sequence is often referred to as Stochastic Gradient Descent. We chose the sequence

so that

(Note here we may assume that is a function of previous parameters and observations

and

.) We let

be the Euclidean norm. We can prove that convergence

to the minimizer of

.

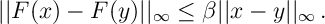

Thrm 2 [Stochastic Gradient Descent] Suppose that ,

, and

in Stochastic Gradient Descent satisfy the following conditions

such that

,

Then where

and assuming

are deterministic then

Let’s quickly review the conditions above. First consider Condition 1. Note condition implies moving in the direction of

always decreases the

, so

minimizes

. The statement

is equivalent to

being strongly convex. So this is enough to give Condition 1. Condition 2 can be interpreted as a gradient condition, or that the steps

do not grow unboundedly. Condition 3 is natural given our analysis so far.

Proof.

Taking expecations with respect to we get

![\begin{aligned} & \mathbb E [ || \theta_{n+1} - \theta^* ||_2^2 - || \theta_{n} - \theta^* ||_2^2 | \mathcal F_n] \\ = & - \alpha_n G(\theta_n) \cdot ( \theta_n -\theta^* ) + \alpha^2_n \mathbb E [ || \epsilon_n||_2^2 | \mathcal F_n ] + \alpha^2_n ||G(\theta_n) ||_2^2 \\ \leq & - \alpha_n \mu || \theta_n -\theta^* ||_2^2 + \alpha^2_n K + \alpha^2_n (A+ B ||\theta_n -\theta^* ||_2^2)\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/8c475df74d13cc3632eb43a64816cc2b.png?w=840)

Thus, rearranging

![\mathbb E [ || \theta_{n+1} - \theta^* ||_2^2 | \mathcal F_n] \leq (1- \alpha_n \mu + \alpha_n^2 B ) || \theta_{n} - \theta^* ||_2^2 + \alpha_n^2 (K+A)](https://appliedprobability.blog/wp-content/uploads/2019/01/2742fd33d853ec8abf220ab495c31b46.png?w=840)

Thus by Thrm 1, . Further taking expectations on both sides above we have

![\mathbb E [ || \theta_{n+1} - \theta^* ||_2^2 ] \leq (1- \alpha_n \mu + \alpha_n^2 B ) \mathbb E [ || \theta_{n} - \theta^* ||_2^2 ] + \alpha_n^2 (K+A)](https://appliedprobability.blog/wp-content/uploads/2019/01/00f1c485ea70c19926e4b47feab23abb.png?w=840)

We can apply Prop 1 (note that will be positive for suitably large

), to give that

as

as required.

Finally we remark that in the proof we analyzed but equally we could have analyzed

instead.

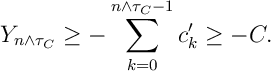

Fixed Points and Asynchronous Update

We now consider Robbins-Monro from a slightly different perspective. Suppose we have a continuous function and we wish to find a fixed point

such that

. We assume that

is a contraction namely that, for some

,

Here . (Note this contraction condition implies the existence of a fixed point). (Note the previous analysis was somewhat restricted to euclidean space.) If we suppose that we do not observe

but instead some perturbed version whose mean is

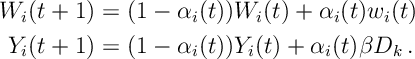

, then we can perform the Robbins-Monro update for each component

:

where is a sequence such that for all

for some constant . Further we suppose that

is measurable with respect to

, the filtration generated by

measurable and

![\mathbb E [ \epsilon_i(t) | \mathcal F_t ] = 0\, . \qquad \text{and} \qquad \mathbb E [ \epsilon^2_i(t) | \mathcal F_t ] \leq A + B \max_j | x_j(t) |^2\, . \label{RM:noise}](https://appliedprobability.blog/wp-content/uploads/2019/01/98dbce2288c0029a07d0813c3feba8cb.png?w=840)

Note that in the above we let the step rule depend on . For instance at each time

we could chose to update one component only at each step, e.g., to update component

only, we would set

for all

. Thus we can consider this step rule to be asynchronous.

We can analyze the convergence of this similarly

Thrm 3. Suppose that is a contraction with respect to

, suppose the vector

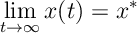

obeys the step rule with step sizes satisfying and further suppose the noise terms satisfy , then

where is the fixed point

.

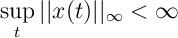

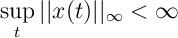

We will prove the result under the assumption that is bounded in

, this is the proposition, Prop 3, below. We then prove that

is bounded in

to complete the proof.

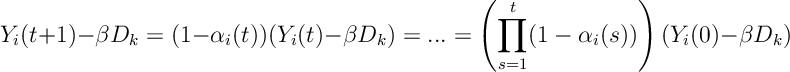

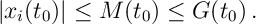

Prop 2. If we further assume that

with probability 1 then Thrm 3 holds.

We may assume with out loss of generality that , since the recursion above is equivalent to

Given the assumption above that for all

, further define

Here we choose so that

so that

. By induction, we will show that, given

for all

for some

, then there exists a

such that for all

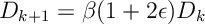

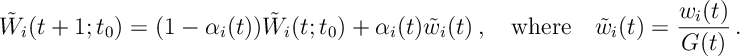

We use two recursions to bound the behavior of :

for , where

and

. We use

to summarize the effect of noise on the recursion for

and we use

to bound the error arising from the function

in the recursion for

. Specifically we show that

![]()

in Lemma 1 below. Further we notice that is a Robbin-Monro recursion for to go to zero and

to go to

.

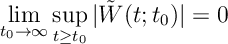

Lemma 1.

![]()

Proof. We prove the result by induction. The result is clearly true for .

In the inequality above with apply the induction hypothesis on

In the inequality above with apply the induction hypothesis on and bounds of

. The second equality just applies the definitions of

and

. Similar inequalities hold in the other direction and give the result.

Lemma 2.

Proof. Letting , we know

![\mathbb E [ W(t+1)^2 | \mathcal F_t ] \leq (1- 2\alpha(t) + \alpha^2) W(t)^2 + \alpha(t)^2 \mathbb E [ \epsilon (t)^2 | \mathcal F_t ].](https://appliedprobability.blog/wp-content/uploads/2019/01/3301c739af3c2e8549c3459b697f1aa4.png?w=840)

From the Robbins-Siegmund Theorem (Prop 1), we know that

Lemma 3. ![]()

Proof. Notice  The result holds since

The result holds since .

We can now prove Prop [Tsit:Prop].

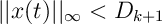

Proof of Prop 2. We know that for all

and we assume

for all

. By Prop 3 and then by Lemmas 2 and 3

![|x_i(t) | \leq Y_i(t) + | W_i(t ) | \xrightarrow[t\rightarrow\infty]{} \beta D_k](https://appliedprobability.blog/wp-content/uploads/2019/01/83f77a6de0777c0eaa5efce1b2b3b3f8.png?w=840)

as required. Thus these exists such that

. Thus by induction we see that

decreases through sequence of levels

as

, thus

goes to zero as required.

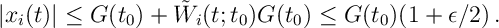

Proving Boundedness of

We now prove that remains bounded

Prop 3.

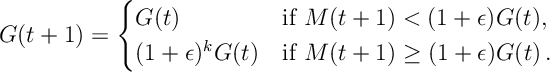

To prove this proposition, we define a processes that bounds the max of from above in increments of size

. Specfically we let

and we define and let

where in the above is the smallest integer such that

. Note that

is adapted. Further note

for some

and

, since

for suitable choice of

and

. We use

in the definition of

above and we choose

so that

.

Also we define and

Notice, like before, is a Robbin-Monro recursion that goes to zero and

is bounded by a recursion of this type.

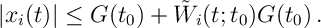

Lemma 4. If for

then

Proof. The result is somewhat similar to Lemma [Tsit:bdd]. At , we have that

Now assuming that the bound is true at time

Now assuming that the bound is true at time .

![\begin{aligned} x_i(t+1) & = (1-\alpha_i(t)) x_i(t) + \alpha_i(t) F_i(\bm x^i(t)) + \alpha_i(t) w_i(t) \\ \leq & (1-\alpha_i(t)) \{ G(t_0) + \tilde W_i(t;t_0) G(t_0) \} + \alpha_i(t) \gamma G(t_0) (1+\epsilon) + \alpha_i(t) \tilde w_i(t) G(t_0) \\ \leq & G(t_0) + [ (1-\alpha_i) \tilde W_i(t;t_0) + \alpha_i \tilde w_i(t) ] G(t_0)\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/c85f7ae4d48d27611aa157bc8ffe5386.png?w=840)

Above we bound knowing the bound holds at time

and we bound

using the fact that we know

has not yet increased. We then use the fact we chose

so that

. A similar bound holds on the other side.

Lemma 5.

Proof. Since is adapted and from our assumptions on

, we have that

![\mathbb E [ \tilde{w}_i(t) | \mathcal F_t ] = 0 \quad \text{and} \quad \mathbb E [ \tilde w_i(t)^2 | \mathcal F_t ] \leq K\, .](https://appliedprobability.blog/wp-content/uploads/2019/01/5383aa6b8c543c39b82be19dee0ae43d.png?w=840)

We know that – the argument for this is identical to Lemma 2. Further notice for all

we have

![\begin{aligned} W_i(t; 0 ) - W_i(t; t_0 ) & = (1 - \alpha_i(t) ) \Big[ W_i(t-1; 0 ) - W_i(t-1; t_0 ) \Big] \\ & =\dots = \prod_{s=t_0}^t (1-\alpha(s) ) \cdot W(t_0; 0)\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2019/01/52dc32b1c14e2d34985796116b8daf2c.png?w=840) Thus,

Thus,

As required both terms on the righthand-side go to zero.

Proof of Prop 3. To prove the proposition we will show that at some point must remain bounded. Suppose

is a time just after

increased. Note if

grew unboundedly then we could chose

as large as we like.

So we’d know by Lemma 5 that there exists a such that for all

,

. Thus applying this to Lemma 4 we see that

Thus since for all

. So

can not longer increase and thus we arrive at a contradiction.

must be bounded in

.

Literature

The Robbin-Munro proceedure is due to Robbins and Munro and the Robbin Sigmund Theorem is due to Robbins and Sigmund. Good notes are given by Francis Bach. The Asynchronous implementation is due to Tsitsiklis, though we replace the a couple of results their with the Robbins-Sigmund theorem.

Robbins, Herbert; Monro, Sutton. A Stochastic Approximation Method. Ann. Math. Statist. 22 (1951), no. 3, 400–407. doi:10.1214/aoms/1177729586.

Robbins, Herbert, and David Siegmund. “A convergence theorem for non negative almost supermartingales and some applications.” Optimizing methods in statistics. Academic Press, 1971. 233-257.

Francis Bach. Optimisation et Apprentissage Statistique https://www.di.ens.fr/~fbach/orsay2019.html

Tsitsiklis, John N. “Asynchronous stochastic approximation and Q-learning.” Machine learning 16.3 (1994): 185-202.

One thought on “Robbins-Monro”