- Weighted majority algorithm and its variant for Bandit Problems.

We consider the problem of learning the ‘best’ action amongst a fixed reference set. Although our modelling assumptions will be quite different, the notation we follow is somewhat similar to that of Section [DP]. We consider the following setting:

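Consider actions $a\in\mathcal A$ and outcomes $\omega\in\Omega$. After choosing an action $a$, an outcome $\omega$ occurs and you receive a reward $r(a,\omega)\in[0,1]$. We assume that the set of actions $\mathcal A$ is finite, of size $N = |\mathcal A|$.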

Def [Policy] Over time, outcomes $\omega_1,\omega_2,\ldots$ occur. We do not (currently) assume that there is an underlying stochastic process determining the values of $\omega_t$; they may be chosen arbitrarily.

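At each time $t$, a policy $\Pi$ chooses a (possibly random) action $\pi_t\in\mathcal A$ as a function of the past outcomes $\omega_1,\ldots,\omega_{t-1}$ and their rewards $r(a,\omega_s)$, $a\in\mathcal A$, $s<t$. In other words, at each time the past outcomes and rewards are known to the policy. The policy must then do the best it can to accumulate rewards.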

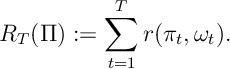

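Def [Rewards/Regret] The total reward by time $T$ of policy $\Pi$ is

$$R_T(\Pi) := \sum_{t=1}^T r(\pi_t,\omega_t).$$

For a fixed action $a\in\mathcal A$ we write $R_T(a) := \sum_{t=1}^T r(a,\omega_t)$ for the total reward of the policy that always chooses $a$.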

The regret of policy $\Pi$ is

![\mathcal{R}\! g_T(\Pi) := \max_{a\in {\mathcal A} } R_T(a) - \bE [R_T(\Pi)].](https://appliedprobability.blog/wp-content/uploads/2018/04/0b06fde2f7c718895ff3063ccda5a578.png?w=840)

- It is good to think of the outcome $\omega_t$ as an outcome in a probability space. (Indeed, sometimes we will assume this.)

- It is good to think of rewards $r(a,\omega)$ in the same way as we consider rewards for an MDP (though, since there is no state, we do not require notation for one).

- The regret compares how well our policy does against the best fixed action. I.e. in retrospect, we “regret” not making the best fixed choice.

- A regret that grows as $o(T)$, i.e. $\mathcal{R}\! g_T(\Pi)/T \to 0$ as $T\to\infty$, means that on average we do as well as the best fixed choice. This is sometimes called Hannan consistency.

We are interested in how a policy performs in comparison to each fixed policy, that is, a policy that chooses the same action $a\in\mathcal A$ at each time. Notice this is a weaker requirement than finding the best of all policies. This weaker notion of optimality is required because we are making much weaker assumptions about the evolution of the process $(\omega_t)_{t\geq 1}$.
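As a small illustration of these definitions, the sketch below computes the realised regret along one sample path. It assumes rewards are stored as rows `rewards[t][a]` $= r(a,\omega_t)$ and actions as a list of indices; this layout and the function name `realised_regret` are hypothetical. For a randomised policy, averaging this quantity over the policy's randomisation gives $\mathcal{R}\! g_T(\Pi)$.

```python
from typing import Sequence

def realised_regret(rewards: Sequence[Sequence[float]], actions: Sequence[int]) -> float:
    """max_a R_T(a) minus the reward actually collected by the chosen actions.

    rewards[t][a] holds r(a, omega_t); actions[t] is the action pi_t taken at time t.
    """
    n_actions = len(rewards[0])
    # R_T(a): total reward of the fixed policy that always plays a
    fixed_totals = [sum(row[a] for row in rewards) for a in range(n_actions)]
    # Reward collected along this sample path of the policy
    policy_total = sum(row[a_t] for row, a_t in zip(rewards, actions))
    return max(fixed_totals) - policy_total

rewards = [[1, 0], [0, 1], [1, 0]]
print(realised_regret(rewards, [1, 0, 1]))  # 2: always playing action 0 would have earned 2
```

Regret can be negative on a single path: the sequence `[0, 1, 0]` collects 3, beating every fixed action.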

Ex 1. [A Regret Lower-Bound] Consider two outcomes and two actions, $\mathcal A = \Omega = \{0,1\}$, where $r(a,\omega) = 1$ if $a = \omega$ and $r(a,\omega) = 0$ otherwise. I.e. there is an action to go to $0$ or $1$, and if you match the outcome you get a reward of $1$; otherwise the reward is $0$.

Suppose that each $\omega_t$ is chosen uniformly at random from $\{0,1\}$, independently over $t$. Show that, regardless of what policy is used,

![\mathbb E\left[ {\mathcal R}\! g_T(\Pi) \right]\geq C \sqrt{T}](https://appliedprobability.blog/wp-content/uploads/2018/04/5bfdf5b705982d6712e322fc1ac2b668.png?w=840)

for some constant $C>0$. [Hint: Use the central limit theorem.]

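Ans 1. Note that, for any policy $\Pi$, the rewards $r(\pi_t,\omega_t)$ are IID Bernoulli with success probability $\tfrac12$, since $\omega_t$ is independent of the past on which $\pi_t$ depends. Hence we have that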

![\mathbb E [ R_T(\Pi) ] = \frac{1}{2} T](https://appliedprobability.blog/wp-content/uploads/2018/04/7340e6c76e021f302d4e53583f2d82a4.png?w=840)

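Further note that $R_T(1) = \frac{T}{2} + \epsilon_T$ and $R_T(0) = T - R_T(1) = \frac{T}{2} - \epsilon_T$, where $\epsilon_T := \sum_{t=1}^T \big(\mathbb I[\omega_t = 1] - \tfrac12\big)$; thus $\max_{a\in\mathcal A} R_T(a) = \frac{T}{2} + |\epsilon_T|$.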

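We know that $\epsilon_T$ is the error of an IID sequence about its mean (each summand has mean $0$ and variance $\tfrac14$), so the central limit theorem gives the following convergence in distribution, where the second parameter of $\mathcal N$ is the standard deviation $\sqrt{1/4} = \tfrac12$: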

![\frac{\epsilon_T}{\sqrt{T}} \xrightarrow[T\rightarrow\infty ]{\mathcal D} \mathcal N \big(0,\frac{1}{2}\big)](https://appliedprobability.blog/wp-content/uploads/2018/04/9e286d223f883c7bb2546e7594294bee.png?w=840)

and

![\mathcal{R}\! g_T(\Pi) := \max_{a\in {\mathcal A} } R_T(a) - \bE [R_T(\Pi)] = | \epsilon_T | .](https://appliedprobability.blog/wp-content/uploads/2018/04/ef4f8e1309a70af3a8d9ed9379bf5af4.png?w=840)

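These last two observations give the result. Since $\mathbb E[\epsilon_T^2]/T = \tfrac14$ is bounded, the variables $|\epsilon_T|/\sqrt{T}$ are uniformly integrable, so $\mathbb E|\epsilon_T|/\sqrt{T} \to \mathbb E|Z| = 1/\sqrt{2\pi}$, where $Z$ is normal with mean $0$ and standard deviation $\tfrac12$. As $\mathbb E|\epsilon_T| > 0$ for every $T\geq 1$, this gives $\mathbb E[\mathcal{R}\! g_T(\Pi)] \geq C\sqrt{T}$ for some $C>0$.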
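The limit in Ans 1 can be checked by simulation. The sketch below is a Monte Carlo estimate under the assumptions of Ex 1; the function name and the choice of $T$ and run count are illustrative. Because $\mathbb E[R_T(\Pi)] = T/2$ for every policy, no policy needs to be simulated: the regret is just $|\epsilon_T|$.

```python
import math
import random

def mean_regret_over_sqrt_t(T: int, n_runs: int, seed: int = 0) -> float:
    """Monte Carlo estimate of E[Rg_T(Pi)] / sqrt(T) for the matching game of Ex 1.

    Any policy has E[R_T(Pi)] = T/2, so Rg_T(Pi) = max_a R_T(a) - T/2 = |eps_T|.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_runs):
        ones = sum(rng.random() < 0.5 for _ in range(T))  # R_T(1) = #{t : omega_t = 1}
        eps = ones - T / 2                                 # eps_T = R_T(1) - T/2
        total += abs(eps)                                  # max(R_T(1), R_T(0)) - T/2
    return total / n_runs / math.sqrt(T)

print(mean_regret_over_sqrt_t(T=400, n_runs=2000))  # close to the limit below
print(1 / math.sqrt(2 * math.pi))                    # E|Z| for Z normal with sd 1/2, about 0.3989
```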

The Weighted Majority Algorithm

We now consider a policy that achieves, up to constants, the $\sqrt{T}$ lower bound suggested by [[OL:Ran]].

We prove the following result for the Weighted Majority Algorithm.

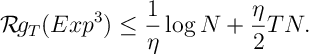

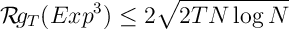

Thrm 1

![\label{WMA:reg} \mathbb E \left[ \mathcal R\! g_T (\mathcal W_{Maj}) \right] \leq \eta^{-1} \log(N) + \frac{\eta T}{2},](https://appliedprobability.blog/wp-content/uploads/2018/04/3ed67bb9c4c19f50f4976fd8725868f0.png?w=840)

and, for the choice $\eta = \sqrt{2\log(N)/T}$,

![\label{WMA:reg2} \mathbb E \left[ \mathcal R\! g_T (\mathcal W_{Maj}) \right] \leq 2\sqrt{2T \log(N)}.](https://appliedprobability.blog/wp-content/uploads/2018/04/2afd10f7986fa1b7f9f4f1686cf3c010.png?w=840)
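The algorithm itself is given in the figures above. As a hedged sketch, the code below assumes the form used in the proof of Ex 2, $P_t(a) \propto e^{\eta R_{t-1}(a)}$, and computes the expected regret exactly by averaging over $\pi_t \sim P_t$ instead of sampling. The function names are hypothetical, and random rewards are used purely for illustration.

```python
import math
import random
from typing import List, Sequence

def weighted_majority_probs(cum_rewards: Sequence[float], eta: float) -> List[float]:
    """P_t(a) proportional to exp(eta * R_{t-1}(a))."""
    m = max(cum_rewards)
    w = [math.exp(eta * (r - m)) for r in cum_rewards]  # subtracting the max avoids overflow
    total = sum(w)
    return [x / total for x in w]

def wma_expected_regret(rewards: Sequence[Sequence[float]], eta: float) -> float:
    """max_a R_T(a) - E[R_T(W_Maj)] for a fixed sequence rewards[t][a] = r(a, omega_t).

    The expectation over pi_t ~ P_t is computed exactly, so nothing is sampled.
    """
    n = len(rewards[0])
    cum = [0.0] * n       # R_{t-1}(a), starting from R_0(a) = 0
    expected = 0.0        # running sum of E[r(pi_t, omega_t)] = sum_a P_t(a) r(a, omega_t)
    for row in rewards:
        p = weighted_majority_probs(cum, eta)
        expected += sum(pa * ra for pa, ra in zip(p, row))
        cum = [c + r for c, r in zip(cum, row)]
    return max(cum) - expected

rng = random.Random(1)
T, N = 500, 4
rewards = [[rng.random() for _ in range(N)] for _ in range(T)]
eta = math.sqrt(2 * math.log(N) / T)
print(wma_expected_regret(rewards, eta) <= math.log(N) / eta + eta * T / 2)  # True
```

Computing the expectation exactly keeps the check deterministic; an actual run of the policy would sample $\pi_t$ from `p` in each round.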

Other related results are also possible. We also require the following probability bound:

Lemma [Hoeffding’s Inequality] For a random variable $X$ bounded on $[0,1]$ and any $\eta\in\mathbb R$,

![\mathbb E [e^{\eta X}] \leq e^{\eta \mathbb E X + \frac{\eta^2}{2}}](https://appliedprobability.blog/wp-content/uploads/2018/04/c8a42be47135c075d22ea87f779528cd.png?w=840)
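A quick numerical sanity check of the lemma, restricted to Bernoulli variables. This is a reasonable worst case: by convexity of $x\mapsto e^{\eta x}$, $e^{\eta x} \leq 1 - x + x e^{\eta}$ on $[0,1]$, so a Bernoulli variable with the same mean has at least as large an exponential moment. The grid of $p$ and $\eta$ values is an arbitrary choice, and this is a check, not a proof.

```python
import math

def hoeffding_gap(p: float, eta: float) -> float:
    """exp(eta*E[X] + eta^2/2) - E[exp(eta*X)] for X ~ Bernoulli(p), a [0, 1]-valued variable."""
    mgf = (1 - p) + p * math.exp(eta)
    return math.exp(eta * p + eta ** 2 / 2) - mgf

# The lemma says the gap is non-negative; check on a grid (allowing rounding error).
worst = min(hoeffding_gap(i / 20, j / 4) for i in range(21) for j in range(-20, 21))
print(worst >= -1e-12)  # True
```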

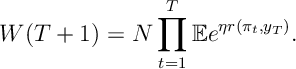

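Ex 2 Prove that

$$W_{T+1} = N\prod_{t=1}^T \mathbb E\left[e^{\eta r(\pi_t,\omega_t)}\right],$$

where $W_t := \sum_{i\in\mathcal A} e^{\eta R_{t-1}(i)}$ (so $W_1 = N$) and the expectation is over $\pi_t\sim P_t$.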

Ans 2 ![\begin{aligned} W_{T+1} = W_0 \prod_{t=0}^{T-1} \frac{W_{t+1}}{W_t} = N \prod_{t=0}^{T-1} \sum_i \frac{e^{\eta R_t(i) } }{W_t} & = N \prod_{t=1}^T \sum_i e^{\eta r_t(i)} \underbrace{ \frac{e^{\eta R_{t-1}(i)}}{W_t} }_{ = P_t(i) }\\ & = N \prod_{t=1}^T \mathbb E\left[ e^{\eta r(\pi_t,\omega_t)} \right] \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/04/a26a828e6ec9bac8985038d6073d9c2f.png?w=840)
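The telescoping identity in Ex 2 can be checked numerically. The sketch below assumes $W_t = \sum_i e^{\eta R_{t-1}(i)}$, the convention under which the underbraced factor in Ans 2 equals $P_t(i)$; the random rewards and the function name are illustrative only.

```python
import math
import random
from typing import Tuple

def check_ex2_identity(T: int, N: int, eta: float, seed: int = 0) -> Tuple[float, float]:
    """Return (W_{T+1}, N * prod_t E[exp(eta * r(pi_t, omega_t))]) for random rewards."""
    rng = random.Random(seed)
    cum = [0.0] * N        # R_{t-1}(i); W_t = sum_i exp(eta * R_{t-1}(i)), so W_1 = N
    product = float(N)
    for _ in range(T):
        r = [rng.random() for _ in range(N)]           # r_t(i) = r(i, omega_t) in [0, 1]
        W_t = sum(math.exp(eta * c) for c in cum)
        P_t = [math.exp(eta * c) / W_t for c in cum]   # the underbraced P_t(i) in Ans 2
        product *= sum(p * math.exp(eta * ri) for p, ri in zip(P_t, r))
        cum = [c + ri for c, ri in zip(cum, r)]
    W_T_plus_1 = sum(math.exp(eta * c) for c in cum)   # sum_i exp(eta * R_T(i))
    return W_T_plus_1, product

lhs, rhs = check_ex2_identity(T=50, N=3, eta=0.1)
print(math.isclose(lhs, rhs, rel_tol=1e-9))  # True
```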

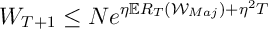

Ex 3 [Continued] Show that

$$W_{T+1} \leq N \exp\Big\{\eta\, \mathbb E[R_T(\mathcal W_{Maj})] + \frac{\eta^2 T}{2}\Big\}.$$

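Ans 3 Apply Hoeffding’s Inequality to each factor in [2] (each $r_t(\pi_t) = r(\pi_t,\omega_t)$ lies in $[0,1]$), then multiply over $t=1,\ldots,T$, using $\sum_{t=1}^T \mathbb E\, r_t(\pi_t) = \mathbb E[R_T(\mathcal W_{Maj})]$: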

![{\mathbb E} [ e^{\eta r_t(\pi_t)}] \leq \exp\Big\{ \eta {\mathbb E} r_t(\pi_t) + \frac{\eta^2}{2}\Big\}.](https://appliedprobability.blog/wp-content/uploads/2018/04/e8f1d6c5aebe4e1969c43d7db7f4143a.png?w=840)

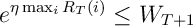

Ex 4 [Continued] Show that, for all $a\in\mathcal A$,

$$W_{T+1} \geq e^{\eta R_T(a)}.$$

Ans 4 Trivial from definitions.

Ex 5 [Continued] Show that

![{\mathbb E} [ {\mathcal R}\! g_T({\mathcal W}\! _{Maj})] \leq \eta^{-1} \log(N) + \frac{\eta T}{2}.](https://appliedprobability.blog/wp-content/uploads/2018/04/f1ac6f6028c9284b8d0e169d4595573e.png?w=840)

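Ans 5 Combine [3] and [4], take logs, divide by $\eta$ and rearrange; since the resulting inequality holds for every $a\in\mathcal A$, it holds for the maximum over $a$.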

Ex 6 [Continued] Finally show that

![{\mathbb E}[ {\mathcal R}\! g_T({\mathcal W}\! _{Maj}) ] \leq 2\sqrt{2T \log(N)}.](https://appliedprobability.blog/wp-content/uploads/2018/04/e7faa695b088664506127438d93fac04.png?w=840)

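Ans 6 Minimize [5] over $\eta>0$: the minimizer $\eta = \sqrt{2\log(N)/T}$ gives the bound $\sqrt{2T\log N}$, which is within the stated $2\sqrt{2T\log N}$.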
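The minimization in Ans 6 can be confirmed numerically; $T$ and $N$ below are arbitrary illustrative values and the function name is hypothetical.

```python
import math

def wma_bound(eta: float, T: int, N: int) -> float:
    """Right-hand side of [5]: log(N)/eta + eta*T/2."""
    return math.log(N) / eta + eta * T / 2

T, N = 10_000, 10
eta_star = math.sqrt(2 * math.log(N) / T)   # where the derivative -log(N)/eta^2 + T/2 vanishes
best = wma_bound(eta_star, T, N)
print(math.isclose(best, math.sqrt(2 * T * math.log(N))))   # True
print(best <= 2 * math.sqrt(2 * T * math.log(N)))           # True, inside Theorem 1's bound
```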

Multi-armed Bandits: Non-Stochastic

We consider a multi-armed bandit setting for online learning.

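Def [Bandit Policy] We now work with costs $c(a;\omega)\in[0,1]$ rather than rewards. A bandit policy is a policy, cf. [[OL:Policy]], where $\pi_t$ is a function only of the previously chosen actions $\pi_1,\ldots,\pi_{t-1}$ and their costs $c(\pi_1;\omega_1),\ldots,c(\pi_{t-1};\omega_{t-1})$. I.e. you cannot observe what cost would have occurred had you chosen a different action.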

We consider a version of the weighted majority algorithm for this multi-armed bandit problem.
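The bandit algorithm is specified in the figures of this section. As a hedged sketch, the code below assumes the Exp3-style update used in the exercises that follow: sample $\pi_t\sim P_t$ with $P_t(a) = w_t(a)/W_t$, observe only $c(\pi_t;\omega_t)$, form $\hat c_t(a;\omega_t) = c(a;\omega_t)\,\mathbb I[\pi_t = a]/P_t(a)$, and update $w_{t+1}(a) = w_t(a)e^{-\eta \hat c_t(a;\omega_t)}$ with $w_1(a) = 1$. The `cost(t, a)` interface and the function name are hypothetical.

```python
import math
import random
from typing import Callable

def exp3_total_cost(cost: Callable[[int, int], float], T: int, N: int,
                    eta: float, seed: int = 0) -> float:
    """One run of the bandit weighted-majority policy; returns C_T = sum_t c(pi_t; omega_t).

    cost(t, a) stands for c(a; omega_t) in [0, 1]; it is only queried at the played action.
    """
    rng = random.Random(seed)
    log_w = [0.0] * N                             # log w_t(a); w_1(a) = 1
    total = 0.0
    for t in range(T):
        m = max(log_w)
        w = [math.exp(lw - m) for lw in log_w]     # rescaled weights, same P_t
        W = sum(w)
        P = [x / W for x in w]                     # P_t(a) = w_t(a) / W_t
        a_t = rng.choices(range(N), weights=P)[0]  # pi_t ~ P_t
        c = cost(t, a_t)                           # bandit feedback: only this cost is observed
        total += c
        c_hat = c / P[a_t]                         # hat c_t(pi_t); hat c_t(a) = 0 for a != pi_t
        log_w[a_t] -= eta * c_hat                  # w_{t+1}(a) = w_t(a) exp(-eta hat c_t(a))
    return total

T, N = 2000, 3
eta = math.sqrt(math.log(N) / (N * T))
total = exp3_total_cost(lambda t, a: 0.2 if a == 0 else 0.8, T, N, eta)
print(total - 0.2 * T)   # realised regret against the best arm (arm 0)
```

Keeping log-weights avoids overflow when $\hat c_t$ is large because $P_t(\pi_t)$ is small.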

Note here that, since the policy choice is random,

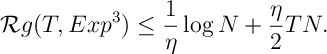

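is a random variable. We show the following: for all $a\in\mathcal A$,

$$\mathbb E\left[C_T(Exp^3)\right] - C_T(a) \leq \frac{1}{\eta}\log N + \eta N T,$$

where $C_T(a) := \sum_{t=1}^T c(a;\omega_t)$, and, for appropriate $\eta$,

$$\mathbb E\left[C_T(Exp^3)\right] - \min_{a\in\mathcal A} C_T(a) \leq 2\sqrt{TN\log N}.$$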

First we collect together a few facts; then the proof proceeds very similarly to the proof for the weighted majority algorithm.

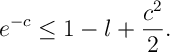

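Ex 7 Show that for $x\geq 0$,

$$e^{-x} \leq 1 - x + \frac{x^2}{2}.$$

(We state the result for losses rather than rewards because of this bound.¹)

Ans 7 By Taylor's theorem, $e^{-x} = 1 - x + \frac{x^2}{2} - \frac{x^3}{6}e^{-\xi}$ for some $\xi\in[0,x]$, and this remainder term is non-positive for $x\geq 0$.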
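A grid check of Ex 7, together with the reason it forces us to work with losses: the cost estimates $\hat c_t$ are non-negative but can be arbitrarily large, and the quadratic upper bound holds for all $x\geq 0$, whereas the mirror-image bound for rewards fails for every $x>0$. The grid is an arbitrary choice.

```python
import math

def ex7_gap(x: float) -> float:
    """(1 - x + x^2/2) - exp(-x); Ex 7 claims this is >= 0 for x >= 0."""
    return 1 - x + x * x / 2 - math.exp(-x)

print(min(ex7_gap(k / 100) for k in range(2001)) >= -1e-12)  # True on the grid [0, 20]
# The analogous bound for rewards fails: exp(x) exceeds 1 + x + x^2/2 for every x > 0.
print(math.exp(1.0) > 1 + 1.0 + 0.5)  # True
```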

Ex 8 Show that

![{\mathbb E}[\hat{c}_t(a; \omega_t)] = c(a;\omega_t)](https://appliedprobability.blog/wp-content/uploads/2018/04/077f605887067d0efd738874beb785e3.png?w=840)

Ans 8

![{\mathbb E}[\hat{c}_t(a ; \omega_t)] = P_t({a}) \frac{c(a;\omega)}{P_t(a)} = c(a;\omega)](https://appliedprobability.blog/wp-content/uploads/2018/04/18a2fdbd5407f2d35fb00beda8364d80.png?w=840)

Ex 9 Show that

![P_t(a){\mathbb E}[\hat{c}_t(a,\omega_t)^2] \leq 1](https://appliedprobability.blog/wp-content/uploads/2018/04/0c85f17d1c95a36f89059fe951526563.png?w=840)

Ans 9 Similar to [8],

![P_t(a){\mathbb E}[\hat{c}_t(a,\omega_t)^2] = P_t(a)\cdot P_t({a}) \frac{c(a;\omega)^2}{P_t(a)^2} = c(a;\omega)^2 \leq 1 \, .](https://appliedprobability.blog/wp-content/uploads/2018/04/eac4c61d6bf4503fc02fd0a6aeb8a5c4.png?w=840)
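Ex 8 and Ex 9 can be checked by enumerating the distribution of $\pi_t$ exactly. The cost and probability vectors below are illustrative values, and the function names are hypothetical.

```python
from typing import Sequence, Tuple

def c_hat(costs: Sequence[float], P: Sequence[float], played: int, a: int) -> float:
    """hat c_t(a; omega_t) = c(a; omega_t) I[pi_t = a] / P_t(a)."""
    return costs[a] / P[a] if played == a else 0.0

def estimator_moments(costs: Sequence[float], P: Sequence[float], a: int) -> Tuple[float, float]:
    """Exact (E[hat c_t(a)], P_t(a) E[hat c_t(a)^2]), averaging over pi_t ~ P_t."""
    first = sum(P[b] * c_hat(costs, P, b, a) for b in range(len(P)))
    second = sum(P[b] * c_hat(costs, P, b, a) ** 2 for b in range(len(P)))
    return first, P[a] * second

costs = [0.3, 0.9, 0.5]   # c(a; omega_t), illustrative values
P = [0.2, 0.5, 0.3]       # P_t(a), illustrative values
for a in range(3):
    print(a, estimator_moments(costs, P, a))  # approximately (c(a), c(a)^2)
```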

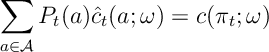

Ex 10 Show that

$$\sum_{a\in\mathcal A} P_t(a)\,\hat c_t(a;\omega_t) = c(\pi_t;\omega_t).$$

(Note: here we are not taking an expectation with respect to $\pi_t$; we are just taking a sum weighted by $P_t(a)$.)

Ans 10

![\sum_a P_t(a) \hat{c}_t(a;\omega_{t}) = \sum_a P_t(a) \frac{c(a;\omega_{t})}{P_t(a)} {\mathbb I}[\pi_{t}=a] = c(\pi_t;\omega_{t})](https://appliedprobability.blog/wp-content/uploads/2018/04/182682067b2563c8a7839d9fcce25016.png?w=840)
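Ex 10 is a pathwise identity, which the sketch below checks for each possible realisation of $\pi_t$; the vectors are illustrative and the function name is hypothetical.

```python
from typing import Sequence

def weighted_estimate_sum(costs: Sequence[float], P: Sequence[float], played: int) -> float:
    """sum_a P_t(a) hat c_t(a; omega_t) for one realised pi_t = played (no expectation)."""
    return sum(P[a] * (costs[a] / P[a] if a == played else 0.0) for a in range(len(P)))

costs = [0.3, 0.9, 0.5]
P = [0.2, 0.5, 0.3]
print([weighted_estimate_sum(costs, P, b) for b in range(3)])  # approximately costs
```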

Ex 11 Show that

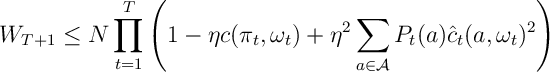

Ans 11 This is the same as [2-3] but use [7] at the last step instead of Hoeffding’s Inequality. Specifically,

![\begin{aligned} \frac{W_{t+1}}{W_t} = \sum_{a\in\mathcal A} \frac{w_{t+1}(a)}{W_t} = \sum_{a\in\mathcal A} \frac{w_{t}(a)}{W_t} e^{-\eta \hat{c}_t(a;\omega_t)} & \leq \sum_{a\in\mathcal A} P_{t}(a) \left( 1- \eta \hat{c}_t(a ; \omega_t) + \frac{\eta^2}{2} \hat{c}_t(a ; \omega_t )^2 \right) \\ & \overset{[\ref{OL:EEE3.5}]}{=} 1 - \eta c(\pi_t,\omega_t) + \frac{\eta^2}{2} \sum_{a\in\mathcal A} P_{t}(a) \hat{c}_t(a;\omega_t )^2.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/04/aa92684cf0ff1be798eb051c1a238b6b.png?w=840)

(Here the “[??]” step is Ex 10.) Now multiply over $t = 1,\ldots,T$.
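The one-step inequality in Ans 11 can be spot-checked on random instances. The sketch below draws random $P_t$, costs, $\pi_t$ and $\eta\in[0,1)$ (all illustrative), and uses $W_{t+1}/W_t = \sum_a P_t(a)e^{-\eta\hat c_t(a;\omega_t)}$ from the first line of the derivation.

```python
import math
import random
from typing import Sequence, Tuple

def ex11_step(costs: Sequence[float], P: Sequence[float], played: int,
              eta: float) -> Tuple[float, float]:
    """Return (W_{t+1}/W_t, the upper bound of Ans 11) for a single round."""
    c_hat = [costs[a] / P[a] if a == played else 0.0 for a in range(len(P))]
    # W_{t+1}/W_t = sum_a (w_t(a)/W_t) exp(-eta hat c_t(a)) = sum_a P_t(a) exp(-eta hat c_t(a))
    ratio = sum(p * math.exp(-eta * ch) for p, ch in zip(P, c_hat))
    bound = 1 - eta * costs[played] + eta ** 2 / 2 * sum(p * ch ** 2 for p, ch in zip(P, c_hat))
    return ratio, bound

rng = random.Random(0)
ok = True
for _ in range(1000):
    N = rng.randint(2, 6)
    raw = [rng.random() + 1e-3 for _ in range(N)]
    P = [x / sum(raw) for x in raw]
    costs = [rng.random() for _ in range(N)]
    played = rng.choices(range(N), weights=P)[0]
    ratio, bound = ex11_step(costs, P, played, eta=rng.random())
    ok = ok and ratio <= bound + 1e-12
print(ok)  # True
```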

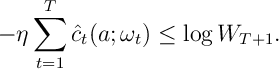

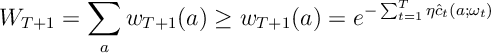

Ex 12 Show that for all $a\in\mathcal A$,

$$\log W_{T+1} \geq -\eta \sum_{t=1}^T \hat c_t(a;\omega_t).$$

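Ans 12 Similar to [4],

$$W_{T+1} = \sum_{a'\in\mathcal A} \exp\Big\{-\eta\sum_{t=1}^T \hat c_t(a';\omega_t)\Big\} \geq \exp\Big\{-\eta\sum_{t=1}^T \hat c_t(a;\omega_t)\Big\}.$$

Now take logs.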

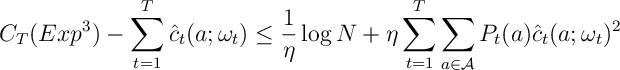

Ex 13 Show that

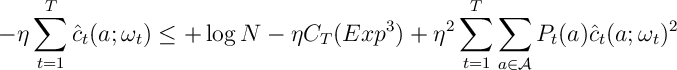

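Ans 13 Combining inequalities [11] and [12] and taking logs gives

$$-\eta\sum_{t=1}^T \hat c_t(a;\omega_t) \leq \log N + \sum_{t=1}^T \log\Big(1 - \eta\, c(\pi_t;\omega_t) + \frac{\eta^2}{2}\sum_{a'\in\mathcal A} P_t(a')\,\hat c_t(a';\omega_t)^2\Big),$$

then rearrange. (Note: you need to use that $\log(1+x)\leq x$ for all $x>-1$.)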

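Ex 14 Show that, for all $a\in\mathcal A$,

$$\mathbb E\left[C_T(Exp^3)\right] - C_T(a) \leq \frac{1}{\eta}\log N + \eta N T.$$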

Ans 14 Take expectations on both sides of [13]

![\mathbb E \left[ C_T(Exp^3) \right] - \underbrace{ \mathbb E \left[ \sum_{t=1}^T \hat{c}_t(a;\omega_t) \right] }_{ \overset{[\ref{OL:EEE2}]}{=} C_T(a) } \leq \frac{1}{\eta}\log N + \eta \sum_{t=1}^T \sum_{a\in{\mathcal A}} \underbrace{ \mathbb E \left[ P_{t}(a)\hat{c}_t(a; \omega_t)^2 \right] }_{ \overset{[\ref{OL:EEE3}]}{\leq} 1 }](https://appliedprobability.blog/wp-content/uploads/2018/04/97b9796ada95ba2d4f611ae9b63c4e17.png?w=840)

(Here the first “[??]” is Ex 8 and the second is Ex 9.) Since this holds for every $a\in\mathcal A$, taking the maximum of the left-hand side over $a$ (i.e. choosing $a$ to minimize $C_T(a)$) bounds the regret.


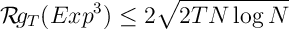

Ex 15. Show that, for an appropriate choice of $\eta$,

$$\mathbb E\left[C_T(Exp^3)\right] - \min_{a\in\mathcal A} C_T(a) \leq 2\sqrt{TN\log N}.$$

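Ans 15. Minimize the right-hand bound in [14] over $\eta>0$: taking $\eta = \sqrt{\log(N)/(NT)}$ gives $2\sqrt{TN\log N}$, and since [14] holds for every $a\in\mathcal A$, it holds for the action minimizing $C_T(a)$.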
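As with Ans 6, the choice of $\eta$ can be confirmed numerically; $T$, $N$ and the function name are illustrative.

```python
import math

def exp3_bound(eta: float, T: int, N: int) -> float:
    """Right-hand side of [14]: log(N)/eta + eta*N*T."""
    return math.log(N) / eta + eta * N * T

T, N = 10_000, 10
eta_star = math.sqrt(math.log(N) / (N * T))   # where -log(N)/eta^2 + N*T vanishes
print(math.isclose(exp3_bound(eta_star, T, N), 2 * math.sqrt(T * N * math.log(N))))  # True
```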

- ¹ We could adapt the policy to rewards by, somewhat inelegantly, subtracting each reward from the maximum reward to obtain a cost.