- Positive Programming, Negative Programming & Discounted Programming.

- Optimality Conditions.

Thus far we have considered finite time Markov decision processes. We now want to solve MDPs of the form

![V(x) = \maxi_{\Pi \in {\mathcal P} } \quad R(x,\Pi) := \mathbb{E}_{x_0} \left[ \sum_{t=0}^{\infty} \beta^{t} r(X_t,\pi_t) \right]](https://appliedprobability.blog/wp-content/uploads/2018/02/3594a6e0fbb20e7a32ced216da73392e.png?w=840)

(Notice rewards no longer depend on time.)

Def 1. [Positive, Negative, and Discounted programming]

Maximizing positive rewards, $r(x,a) \geq 0$, is called positive programming.

Minimizing positive losses, $l(x,a) \geq 0$, is called negative programming.

Maximizing bounded discounted rewards, $|r(x,a)| \leq B$ for some constant $B$ and $\beta \in (0,1)$, is called discounted programming.

Def 2. [Minimizing Losses] So far we have considered the maximization of rewards; however, often we want to minimize losses or costs. When we do so we will use the following notation:

![L(x) = \mini_{\Pi \in {\mathcal P} } \quad C(x,\Pi) := \mathbb{E} \left[ \sum_{t=0}^{\infty} \beta^t l(X_t, \pi_t) \right]](https://appliedprobability.blog/wp-content/uploads/2018/02/2cdcf473821714bd9d391530f0afaffb.png?w=840)

Ex 1. Show that, for positive programming,

![R_T(x,\Pi) := \mathbb{E} \Big[ \sum_{t=0}^{T -1} \beta^{t} r(X_t,\pi_t) \Big] \nearrow R(x,\Pi) \leq V(x).](https://appliedprobability.blog/wp-content/uploads/2018/02/a838a673c3f3412a2223fbe55066ff88.png?w=840)

Ex 2. [Continued] Show that, for discounted programming,

![R_T(x,\Pi) := \mathbb{E} \Big[ \sum_{t=0}^{T -1} \beta^{t} r(X_t,\pi_t) \Big] \rightarrow R(x,\Pi) \leq V(x).](https://appliedprobability.blog/wp-content/uploads/2018/02/c0e91b45cfac243692a915a7fc247b2e.png?w=840)

Ex 3. [Continued] Show that

![C_T(x,\Pi) := \mathbb{E} \Big[ \sum_{t=0}^{T -1} \beta^{t} l(X_t,\pi_t) \Big] \nearrow C(x,\Pi) \geq L(x).](https://appliedprobability.blog/wp-content/uploads/2018/02/e6234a759ff2bf3e4c91852bd92c61c6.png?w=840)

for a negative program.

Note that negative programming is not simply positive programming multiplied by minus one. From Ex 3 we see that the finite-horizon losses $C_T(x,\Pi)$ increase to a limit that lies above the optimal loss $L(x)$, while for positive programming the finite-horizon rewards $R_T(x,\Pi)$ increase towards the optimal objective $V(x)$ from below (Ex 1).
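As a numerical illustration of Ex 1 and Ex 3, the following sketch fixes a policy, so that the state follows a Markov chain, and computes the finite-horizon totals through the recursion $R_T(x) = r(x) + \beta \mathbb E_x [R_{T-1}(\hat{X})]$. The two-state chain, the per-step values and $\beta = 0.9$ are made-up assumptions, not taken from the text. The same numbers can be read as rewards (positive programming) or as losses (negative programming): in both cases the totals increase in $T$, so the limit approaches $V(x)$ from below in the first reading but sits above $L(x)$ in the second.

```python
def finite_horizon_totals(step_value, P, beta, T):
    """Return [R_0, R_1, ..., R_T]; R_t[x] is the expected discounted total
    over the first t steps when starting in state x under a fixed policy."""
    n = len(step_value)
    totals = [[0.0] * n]  # R_0 = 0: nothing is collected in zero steps
    for _ in range(T):
        prev = totals[-1]
        totals.append([
            step_value[x] + beta * sum(P[x][y] * prev[y] for y in range(n))
            for x in range(n)
        ])
    return totals


# Hypothetical two-state chain induced by some fixed policy (assumption).
step_value = [1.0, 0.0]        # non-negative reward (or loss) per step
P = [[0.5, 0.5], [0.2, 0.8]]   # transition probabilities
beta = 0.9

totals = finite_horizon_totals(step_value, P, beta, T=50)
for t in (1, 5, 20, 50):
    print(t, [round(v, 4) for v in totals[t]])
```

Each printed row should dominate the previous one componentwise, and every entry stays below the crude bound $\max_x r(x)/(1-\beta) = 10$.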

Bellman’s Equation for positive programming

We now discuss Bellman’s equation in the infinite time horizon setting. Previously we solved Markov decision processes inductively with Bellman’s equation. In infinite time, we cannot directly apply induction; however, we will see that Bellman’s equation still holds and we can use this to solve our MDP.

Thrm 1. Consider a positive program or a discounted program. Given the limit $\lim_{T\to\infty} R_T(x,\Pi)$ is well defined for each policy $\Pi$, the optimal value function $V(x)$ satisfies

![V(x) = \max_{a\in {\mathcal A}} \Big\{ r(x,a) + \beta {\mathbb E}_{x,a} \left[ V(\hat{X}) \right] \Big\}.](https://appliedprobability.blog/wp-content/uploads/2018/02/59eb3d8eddf1452495e360435d2c24fc.png?w=840)

Moreover, if we find a function $R(x)$ such that

![R(x) = \max_{a\in {\mathcal A} } \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X}) \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2018/02/5aa0bdd68ed86cd780c71db98f7fe1c7.png?w=840)

and we find a function $\pi(x)$ such that

![\pi(x) \in \argmax_{a\in \mathcal A} \left\{ r(x,a) + \beta \mathbb E_{x,a} \left[ R(\hat{X} \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2018/02/7b86e424f2ac6990d8fa45ac839e254e.png?w=840)

then $\pi$ is optimal and $R(x) = V(x)$ is the optimal value function.

The above theorem covers the cases of positive and discounted programming. The case of negative programming is a little more subtle. We now prove Thrm 1. On first reading, you may wish to take this theorem as given and skip to Ex 15.
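To make the two parts of Thrm 1 concrete, here is a minimal sketch for a discounted program. It uses value iteration (repeatedly applying the right-hand side of Bellman's equation), a standard technique that this section does not itself discuss, to find a function solving the equation together with a maximizing action in each state. The two-state, two-action MDP, the names `bellman_backup`, `value_iteration` and `stationary_policy_reward`, and $\beta = 0.9$ are all illustrative assumptions.

```python
def bellman_backup(V, r, P, beta):
    """Apply the right-hand side of Bellman's equation once.
    Returns the updated values and a maximizing action for each state."""
    n = len(V)
    new_V, greedy = [], []
    for x in range(n):
        q = [r[x][a] + beta * sum(P[x][a][y] * V[y] for y in range(n))
             for a in range(len(r[x]))]
        best = max(range(len(q)), key=q.__getitem__)
        new_V.append(q[best])
        greedy.append(best)
    return new_V, greedy


def value_iteration(r, P, beta, tol=1e-12, max_iter=100_000):
    """Iterate the Bellman backup from V = 0 until successive values agree."""
    V = [0.0] * len(r)
    for _ in range(max_iter):
        new_V, greedy = bellman_backup(V, r, P, beta)
        if max(abs(u - v) for u, v in zip(new_V, V)) < tol:
            return new_V, greedy
        V = new_V
    raise RuntimeError("value iteration did not converge")


def stationary_policy_reward(policy, r, P, beta, horizon=2000):
    """Approximate R(x, pi) by the finite-horizon reward R_T(x, pi)."""
    n = len(r)
    R = [0.0] * n
    for _ in range(horizon):
        R = [r[x][policy[x]]
             + beta * sum(P[x][policy[x]][y] * R[y] for y in range(n))
             for x in range(n)]
    return R


# Hypothetical MDP: action 0 moves to state 0, action 1 moves to state 1.
r = [[1.0, 0.0],   # rewards r(x, a) in state 0
     [0.0, 2.0]]   # rewards r(x, a) in state 1
P = [[[1.0, 0.0], [0.0, 1.0]],
     [[1.0, 0.0], [0.0, 1.0]]]
beta = 0.9

V, pi = value_iteration(r, P, beta)
R_pi = stationary_policy_reward(pi, r, P, beta)
print(V, pi, R_pi)
```

In this example the computed function is approximately $(18, 20)$, the maximizing policy always moves to state 1, and the reward of that policy matches the computed function, as the second part of the theorem predicts.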

Ex 4. [Proof of Thrm 1] Show that

![R(x,\Pi) = r(x,\pi_0) + \beta \mathbb E_{x,\pi_0} \left[ R(\hat{X},\hat{\Pi}) \right]](https://appliedprobability.blog/wp-content/uploads/2018/02/b4ec64a39423044e3ceef6ae3782c203.png?w=840)

where $\hat{\Pi}$ is the policy followed by $\Pi$ after taking action $\pi_0$ from state $x$.

Ex 5. [Continued] Show that

![V(x) \leq \sup_{\pi_0 \in {\mathcal A}} \left\{ r(x,\pi_0) + \beta \mathbb{E}_{x,\pi_0} \left[ V(\hat{X}) \right] \right\}.](https://appliedprobability.blog/wp-content/uploads/2018/02/9613eff6520747960e79455a7537d4da.png?w=840)

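In the following exercise, we let $\pi_\epsilon$ be the policy that chooses action $a$ and then, from the next state $\hat{X}$, follows a policy $\hat{\Pi}_\epsilon$ which satisfies

$$R(\hat{X},\hat{\Pi}_\epsilon) \geq V(\hat{X}) - \epsilon .$$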

Ex 6. [Continued] Show that

![V(x) \geq r(x,a) + \beta \mathbb E_{x,a} [V(\hat{X})] - \epsilon \beta .](https://appliedprobability.blog/wp-content/uploads/2018/02/ed450b8f3ed7651c37d8facb30291f42.png?w=840)

Ex 7. [Continued] Show that

![V(x) = \max_{a\in {\mathcal A}} \Big\{ r(x,a) + \beta {\mathbb E}_{x,a} \left[ V(\hat{X}) \right] \Big\}.](https://appliedprobability.blog/wp-content/uploads/2018/02/59eb3d8eddf1452495e360435d2c24fc.png?w=840)

At this point we have shown the first part of Thrm 1. Now we need to show that a policy that satisfies the Bellman Equation is also optimal.

Ex 8. [Thrm 1, 2nd part] Show that if we find a function $R(x)$ and a function $\pi(x)$ such that

![R(x) = \max_{a\in {\mathcal A} } \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X}) \right] \right\}, \quad \pi(x) \in \argmax_{a\in \mathcal A} \left\{ r(x,a) + \beta \mathbb E_{x,a} \left[ R(\hat{X} \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2018/02/74c26912393065a7d954166c0480270b.png?w=840)

then $R(x) = R(x,\pi)$.

Ex 9. For positive and discounted programming, suppose that $\Pi$ is a policy satisfying

![R(x,\Pi) \geq \max_{a\in {\mathcal A} } \left\{ r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X},\pi) \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2018/02/b7af13c9c35c64de3cd91b4796ac3eae.png?w=840)

show that

![R(x,\Pi) \geq R_t(x,\tilde \Pi ) + \beta^t \mathbb E [ R(\tilde{X}_t,\Pi ) ],](https://appliedprobability.blog/wp-content/uploads/2018/02/d2c62b8deb53377736ad8012ffc59e30.png?w=840)

where $\tilde{X}_t$ is the random variable representing the state reached by an arbitrary policy $\tilde{\Pi}$ after $t$ steps.

Ex 10. [Continued] Now show that

$$R(x,\Pi) \geq R(x,\tilde{\Pi})$$

and thus show that the policy $\Pi$ is optimal.

Negative Programming

The analogous result to Thrm 1 for negative programming is much weaker. This can be seen by comparing Ex 3 with Ex 1. Essentially, iterations of negative programming overshoot the optimal value function.

Def 3. A policy is called a stationary policy if its action only depends on the current state (and is non-random and does not depend on time).

Ex 11. Consider an MDP with a finite number of actions and assume the Bellman equation has a solution. Show that there is a stationary policy solving the Bellman equation.

Thrm 2. Consider a negative program. Given the limit $\lim_{T\to\infty} C_T(x,\Pi)$ is well defined for each policy $\Pi$, the optimal value function $L(x)$ satisfies

![L(x) = \min_{a\in {\mathcal A}} \Big\{ l(x,a) + \beta {\mathbb E}_{x,a} \left[ L(\hat{X}) \right] \Big\}.](https://appliedprobability.blog/wp-content/uploads/2018/02/c8c75c9027e6ba9490cc167c6780dcb5.png?w=840)

Moreover, any stationary policy $\pi$ that solves the Bellman equation:

![\pi(x) \in \argmin_{a\in {\mathcal A} } \left\{ c(x,a) + \beta \mathbb{E}_{x,a} \left[ L(\hat{X}) \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2018/02/2bd4c3a0af89c3599be0aa675dd80de6.png?w=840)

is optimal.

So the Bellman equation is still correct but, as the above result suggests, simply finding a solution to the Bellman equation is not sufficient. We first need to find the optimal value function $L$, and then we need a stationary policy that attains the minimum in the Bellman equation for $L$.
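A small example may help show why a solution of the Bellman equation alone is not enough. The sketch below is a hypothetical negative program, not one from the text, and it assumes $\beta = 1$ is allowed. In state 0 the controller either stops (loss 1, then moves to a free absorbing exit state) or continues (loss 0, stays in state 0). The optimal loss is $L(0) = 0$, yet every value in $[0,1]$ solves $L(0) = \min\{1 + L(\text{exit}),\, L(0)\}$ with $L(\text{exit}) = 0$, and for the spurious solution $L(0) = 1$ the action "stop" attains the minimum while costing 1.

```python
STOP_COST = 1.0      # loss for stopping; the chain then moves to the exit state
CONTINUE_COST = 0.0  # loss for continuing; the chain stays in state 0


def solves_bellman(L0, L_exit=0.0):
    """Check L(0) = min{ stop: 1 + L(exit), continue: 0 + L(0) } with beta = 1."""
    return L0 == min(STOP_COST + L_exit, CONTINUE_COST + L0)


def total_loss(action, horizon=1000):
    """Loss over `horizon` steps from state 0 when always playing `action`."""
    total, in_exit = 0.0, False
    for _ in range(horizon):
        if in_exit:
            break  # the exit state is absorbing and incurs no further loss
        if action == "stop":
            total += STOP_COST
            in_exit = True
        else:
            total += CONTINUE_COST
    return total


candidates = [0.0, 0.5, 1.0, 2.0]
print([solves_bellman(c) for c in candidates])  # [True, True, True, False]
print(total_loss("stop"), total_loss("continue"))  # 1.0 0.0
```

Only the smallest solution, $L(0) = 0$, is the optimal loss, and the stationary policy attaining the minimum for that solution ("continue") is the optimal one, in line with Thrm 2.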

Ex 12. Show that the optimal value function satisfies

![L(x) = \min_{a\in {\mathcal A}} \Big\{ l(x,a) + \beta {\mathbb E}_{x,a} \left[ L(\hat{X}) \right] \Big\}.](https://appliedprobability.blog/wp-content/uploads/2018/02/c8c75c9027e6ba9490cc167c6780dcb5.png?w=840)

Ex 13. Argue that the stationary policy $\pi$ described in Thrm 2 satisfies

![L(x)= C_T(x,\pi) +\beta^T \mathbb E_{x,\pi} [L(X_{T+1})]](https://appliedprobability.blog/wp-content/uploads/2018/02/705fc4fa4458aabfede8113a937e59f0.png?w=840)

Ex 14. [Continued] Show that $\pi$ is optimal, i.e. $C(x,\pi) \leq L(x)$.

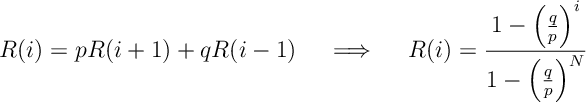

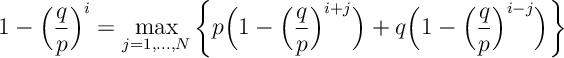

Ex 15. A gambler has $i$ pounds and wants to win $N$ pounds. The gambler can bet any amount $j$ less than or equal to $i$. The gambler wins with probability $p$ and loses with probability $q = 1 - p$, and wins $j$ or loses $j$. The game ends when either $0$ or $N$ is reached. Assuming that $p > 1/2$, argue that it is always optimal for the gambler to gamble 1 pound.

Ex 16. [Geometric stopping or discount factor] Consider a positive program and suppose that after an independent, geometrically distributed (with parameter $\beta$) number of steps $\tau_\beta$ the MDP enters an exit state with zero reward. Here a policy $\Pi$ has reward function

![R(x,\Pi) = \mathbb E_{x} \bigg[ \sum_{t=0}^{\tau_\beta } r(X_t,\pi_t)\bigg]\, .](https://appliedprobability.blog/wp-content/uploads/2018/02/60dff76680fc2bc80e73e25de01e40ad.png?w=840)

Argue that this has the same rewards as a discounted program with discount factor $\beta$.

Answers

Ans 1. Apply the Monotone Convergence Theorem.

Ans 2. Apply the Bounded Convergence Theorem.

Ans 3. Again apply the Monotone Convergence Theorem.

Ans 4. We know that

![R_t(x,\Pi) = r(x,\pi_0) + \beta \mathbb E [ R_{t-1}(\hat{X},\hat{ \Pi} ) ]](https://appliedprobability.blog/wp-content/uploads/2018/02/c70dcd06f2d37a59d953b15c63e9eaf3.png?w=840)

Taking limits as $t \rightarrow \infty$ on both sides and applying the monotone convergence theorem gives the result.

Ans 5. By Ex 4 and the optimality of $V$,

![\begin{aligned} R(x,\Pi) & = r(x,\pi_0) + \beta \mathbb E_{x,\pi_0} \left[ R(\hat{X},\hat{\Pi}) \right] \\ & \leq r(x,\pi_0) + \beta \mathbb E_{x,\pi_0} \left[ V(\hat{X}) \right]\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/02/0253788e04edc3b10b53c3fc830bebdd.png?w=840)

Now bound the right-hand side by its supremum over $\pi_0$ and then maximize the left-hand side over $\Pi$.

Ans 6. We have that

![\begin{aligned} V(x) & \geq R(x,\pi_\epsilon) = r(x,a) + \beta \mathbb{E}_{x,a} \left[ R(\hat{X},\hat{\Pi}_\epsilon) \right] \\ & \geq r(x,a) + \beta \mathbb{E}_{x,a} \left[ V(\hat{X}) \right] - \epsilon \beta\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/02/408cbd8586c6d8a219023f1370b25c9f.png?w=840)

The first inequality holds by the sub-optimality of $\pi_\epsilon$ and the second holds by the assumption on $\hat{\Pi}_\epsilon$.

Ans 7. Taking the inequality in Ex 6, maximizing over $a \in \mathcal A$, and letting $\epsilon \rightarrow 0$ gives

![V(x) \geq \max_{a\in {\mathcal A}} \Big\{ r(x,a) + \beta {\mathbb E}_{x,a} \left[ V(\hat{X}) \right] \Big\}.](https://appliedprobability.blog/wp-content/uploads/2018/02/763b8252481ac8c7185b9b4f4a235d41.png?w=840)

The above inequality and Ex 5 give the result.

Ans 8. It is clear that

![R(x) = r(x,\pi(x)) + \beta \mathbb E_{x,\pi(x)} [R(\hat{X})].](https://appliedprobability.blog/wp-content/uploads/2018/02/dee079d732d6184aabdf04657553997d.png?w=840)

Thus by Ex 8 in Markov chains, we have that $R(x) = R(x,\pi)$.

Ans 9. Suppose that policy $\tilde{\Pi}$ follows actions $\tilde{A}_0, \tilde{A}_1, \dots$ over states $\tilde{X}_0, \tilde{X}_1, \dots$. Then applying our initial inequality in Ex 9, we have that

![\begin{aligned} R(x,\Pi) & \geq r(\tilde{X}_0,\tilde A_0) + \beta \mathbb E_{\tilde{X}_0,\tilde A_0} \left[ R(\tilde X_1 , \Pi ) \right] \\ & \geq r(\tilde X_0,\tilde A_0) + \beta \mathbb E_{\tilde X_0,\tilde A_0} \left[ r(\tilde{X}_1,\tilde{A}_1) + \mathbb E_{\tilde X_1,\tilde A_1} [ R(\tilde{X}_2,,\Pi) ] \right] \\ &\; \vdots \\ &\geq \mathbb E \underbrace { \left[ \sum_{\tau=0}^{t-1} \beta^{\tau} r(\tilde{X}_{\tau},\tilde{A}_{\tau}) \right] }_{ = R_t(x,\tilde \Pi ) } + \beta^t \mathbb E \left[ R(\tilde{X}_t,\Pi) \right]\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/02/d2f66dce80b9e017fabcd5c20e8001f7.png?w=840)

In the second inequality we again apply our initial inequality (this time to the term $R(\tilde{X}_1,\Pi)$). We repeat this $t$ times. We then note that the terms involving $r$ are the total reward of policy $\tilde{\Pi}$ up until time $t$.

Ans 10. By Ex 9 we have that

![R(x,\Pi) \geq R_t(x,\tilde \Pi ) + \beta^t \mathbb E [ R(\tilde{X}_t,\Pi ) ] \geq R_t(x,\tilde{\Pi}) \xrightarrow[t\rightarrow \infty]{} R(x,\tilde \Pi ).](https://appliedprobability.blog/wp-content/uploads/2018/02/5de81f06d97d64402c295b0b55e82170.png?w=840)

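Since $\tilde{\Pi}$ is an arbitrary policy and $\Pi$ has higher reward, $\Pi$ must be optimal. (The second inequality above uses $R \geq 0$, as in positive programming; for discounted programming $R$ is bounded, so $\beta^t \mathbb E [ R(\tilde{X}_t,\Pi) ] \rightarrow 0$ and the same limit holds.)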

Ans 11. As actions are finite, for each $x$ there exists a $\pi(x)$ solving

![\pi(x) \in \argmin_{a\in {\mathcal A} } \left\{ c(x,a) + \beta \mathbb{E}_{x,a} \left[ L(\hat{X}) \right] \right\}](https://appliedprobability.blog/wp-content/uploads/2018/02/2bd4c3a0af89c3599be0aa675dd80de6.png?w=840)

Ans 12. Convince yourself that Ex 4-7 apply in this case.

Ans 13.

![\begin{aligned} L(x) & = \min_{a\in \mathcal A} \left\{ c(x,a) + \beta \mathbb E_{x,a} [ L(X_1) ] \right\} \\ & = c(x,\pi(x)) + \beta \mathbb E_{x,\pi(x)} \left[ L(X_1) \right]\\ & = c(X_0,\pi(X_0)) + \beta \mathbb E_{X_0,\pi(X_0)} \left[ c(X_1,\pi(X_1)) + \beta \mathbb E_{X_1,\pi(X_1)} \left[ L(X_2) \right] \right]\\ & =C_1(x,\pi) +\beta^2 \mathbb E_{x,\pi} [L(X_2)] \\ & \;\;\vdots \\ & = C_T(x,\pi) +\beta^T \mathbb E_{x,\pi} [L(X_{T+1})] \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2018/02/f875963e8f42a454615987f783b3d0a3.png?w=840)

Ans 14.

![L(x) = C_T(x,\pi) + \beta^{T} \mathbb E_{x,\pi} [L(x_{T+1})] \\ \geq C_T(x,\pi) \xrightarrow[T\rightarrow \infty]{} C(x,\pi)](https://appliedprobability.blog/wp-content/uploads/2018/02/73510619012b05445cf317653c075b4a.png?w=840)

So the policy $\pi$ has cost no greater than the optimal loss $L(x)$, and thus is optimal.

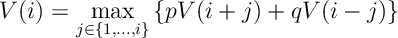

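Ans 15. Write $i$ for the gambler's current wealth, $N$ for the target, $j$ for the stake, $p$ for the probability of winning a bet and $q = 1 - p$. Taking the reward to be $1$ on reaching $N$ and $0$ otherwise, so that $V(0) = 0$ and $V(N) = 1$, the Bellman equation for this problem is

$$V(i) = \max_{1 \leq j \leq i} \left\{ p V(i+j) + q V(i-j) \right\}.$$

Let $R(i)$ be the reward for the policy that always bets $1$ pound. You can see that $R(i)$ solves the recursion

$$R(i) = p R(i+1) + q R(i-1), \qquad R(0) = 0, \quad R(N) = 1,$$

whose solution is

$$R(i) = \frac{1 - (q/p)^i}{1 - (q/p)^N}.$$

You can check that $R(i)$ solves the Bellman equation above, i.e. check

$$R(i) = \max_{1 \leq j \leq i} \left\{ p R(i+j) + q R(i-j) \right\},$$

where the maximum above is attained at $j = 1$. (Hint: verify by differentiation that the function $j \mapsto p R(i+j) + q R(i-j)$ is decreasing for $j \geq 1$.)

Thus $R(i)$ solves the Bellman equation and so by Thrm 1 it is optimal to always bet $1$ pound.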
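The check described in Ans 15 can also be done numerically. The sketch below assumes the standard gambler's ruin formula $R(i) = (1 - (q/p)^i)/(1 - (q/p)^N)$ for the probability of reaching $N$ from $i$ when always betting 1 pound, with $q = 1 - p$ and $p > 1/2$. The values $N = 10$ and $p = 0.6$, the restriction of stakes to $j \leq \min(i, N - i)$ so that the gambler never overshoots $N$, and the function names are illustrative assumptions.

```python
def timid_value(i, N, p):
    """Probability of reaching N from i when always betting 1 (needs p != 1/2)."""
    rho = (1 - p) / p
    return (1 - rho ** i) / (1 - rho ** N)


def best_stakes(N, p):
    """For each wealth 0 < i < N, return (best stake, value of that stake)
    when the continuation value is the timid value."""
    q = 1 - p
    result = {}
    for i in range(1, N):
        stakes = range(1, min(i, N - i) + 1)  # assumption: never overshoot N
        values = {j: p * timid_value(i + j, N, p) + q * timid_value(i - j, N, p)
                  for j in stakes}
        j_star = max(values, key=values.get)
        result[i] = (j_star, values[j_star])
    return result


N, p = 10, 0.6
check = best_stakes(N, p)
for i, (j_star, value) in check.items():
    print(i, j_star, round(value, 6), round(timid_value(i, N, p), 6))
```

For every wealth $i$ the maximizing stake should come out as 1, and the maximized value should agree with `timid_value(i, N, p)`, which is the statement that $R$ solves the Bellman equation.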

Ans 16.

![\mathbb E_{x} \bigg[ \sum_{t=0}^{\tau_\beta } r(X_t,\pi_t)\bigg] = \mathbb E_{x} \bigg[ \sum_{t=0}^{\infty } \mathbb I [\tau_\beta \geq t] r(X_t,\pi_t)\bigg] = \mathbb E_{x} \bigg[ \sum_{t=0}^\infty \beta^t r(X_t,\pi_t) \bigg].](https://appliedprobability.blog/wp-content/uploads/2018/02/e185e4a9ab59ea4d23fb246eb29c1c73.png?w=840)
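The identity in Ans 16 can be checked exactly on a small example. The sketch below assumes $\mathbb P(\tau_\beta \geq t) = \beta^t$, equivalently $\mathbb P(\tau_\beta = k) = \beta^k (1 - \beta)$, which is the property the last equality relies on. For simplicity it uses a fixed, finite reward sequence (zero afterwards) in place of the random rewards $r(X_t, \pi_t)$; the sequence and $\beta = 0.9$ are made up.

```python
def stopped_reward(rewards, beta):
    """E[sum_{t=0}^{tau} r_t] with P(tau = k) = beta^k (1 - beta),
    for a reward sequence that is zero after the last listed entry."""
    n = len(rewards)
    total, partial = 0.0, 0.0
    for k, r in enumerate(rewards):
        partial += r  # rewards collected if the process survives through step k
        # P(tau = k), except the last term, which lumps together P(tau >= n - 1)
        prob = beta ** k * (1 - beta) if k < n - 1 else beta ** k
        total += prob * partial
    return total


def discounted_reward(rewards, beta):
    """sum_t beta^t r_t for the same reward sequence."""
    return sum(beta ** t * r for t, r in enumerate(rewards))


rewards = [1.0, 2.0, 0.0, 3.0, 5.0]  # hypothetical per-step rewards
beta = 0.9
print(stopped_reward(rewards, beta), discounted_reward(rewards, beta))
```

Both numbers should agree up to floating-point error (about 8.2675 here), reflecting that surviving to time $t$ with probability $\beta^t$ weights the reward at time $t$ exactly as the discount factor does.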