We show that relative entropy decreases for continuous time Markov chains.

Consider aan irreducible continuous time Markov chain with state space ,

-matrix

and stationary distribution

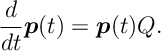

. The forward equation for this Markov chain is given by

Throughout this section we assume that and

are two solutions to the above system of differential equations.

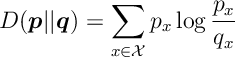
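To make the forward equation concrete, here is a minimal sketch that integrates it with explicit Euler steps. It assumes the forward equation has the standard form $dp_x/dt = \sum_y p_y Q_{yx}$; the 3-state rate matrix `Q`, the helper names and the step count are all hypothetical choices made for illustration.

```python
# Hypothetical irreducible 3-state chain; the rates are illustrative only.
# Off-diagonal entries are non-negative and every row sums to zero.
Q = [
    [-3.0, 2.0, 1.0],
    [1.0, -1.0, 0.0],
    [0.5, 0.5, -1.0],
]


def forward_step(p, Q, h):
    """One explicit Euler step of dp_x/dt = sum_y p_y * Q[y][x]."""
    n = len(p)
    return [p[x] + h * sum(p[y] * Q[y][x] for y in range(n)) for x in range(n)]


def solve_forward(p0, Q, t, steps=10_000):
    """Approximate p(t), started from p(0) = p0, using `steps` Euler steps."""
    h = t / steps
    p = list(p0)
    for _ in range(steps):
        p = forward_step(p, Q, h)
    return p


p_t = solve_forward([1.0, 0.0, 0.0], Q, t=2.0)
print(p_t, sum(p_t))
```

Because the rows of `Q` sum to zero, each Euler step preserves total mass, so the printed sum should stay at one up to rounding.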

Recall that the relative entropy between distributions $p$ and $q$ is

$$D(p\|q) = \sum_{x} p_x \log\frac{p_x}{q_x}.$$

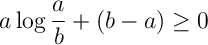
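As a small illustration, relative entropy can be computed directly from its definition. The sketch below assumes the usual formula $D(p\|q) = \sum_x p_x \log(p_x/q_x)$ together with the common conventions $0 \log 0 = 0$ and $D = \infty$ when $p$ puts mass where $q$ has none; the function name is hypothetical.

```python
import math


def relative_entropy(p, q):
    """D(p || q) = sum_x p_x * log(p_x / q_x) for two finite distributions."""
    total = 0.0
    for px, qx in zip(p, q):
        if px == 0.0:
            continue  # assumed convention: 0 * log(0 / q_x) = 0
        if qx == 0.0:
            return math.inf  # assumed convention: p has mass where q has none
        total += px * math.log(px / qx)
    return total


print(relative_entropy([0.5, 0.5], [0.5, 0.5]))  # 0.0
print(relative_entropy([0.5, 0.5], [0.9, 0.1]))  # strictly positive
```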

Ex 1. Show that, for positive numbers $a$ and $b$,

$$a \log a \geq a \log b + a - b,$$

with equality iff $a = b$.

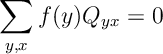

Ex 2. Show that for any function $f$ on the state space and every state $y$,

$$\sum_{x} Q_{yx}\, f(y) = 0.$$

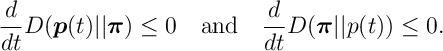

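Ex 3. Show that

$$\frac{d}{dt} D(p(t)\|q(t)) = -\sum_{x,y \,:\, x \neq y} q_y Q_{yx} \left[ r_y \log\frac{r_y}{r_x} - r_y + r_x \right],$$

where we define $r_x = p_x(t)/q_x(t)$.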

Ex 4. Show that

$$\frac{d}{dt} D(p(t)\|q(t)) \leq 0,$$

with equality iff $p(t) = q(t)$.

Ex 5. If $\pi$ is the stationary distribution, then

$$\frac{d}{dt} D(p(t)\|\pi) \leq 0.$$

(From here a standard Lyapunov argument can be used to prove ergodicity.)
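The monotonicity claimed in these exercises can be checked numerically. The sketch below is only an illustration under stated assumptions: it uses a hypothetical 3-state rate matrix, two arbitrary strictly positive starting distributions, and explicit Euler steps in place of the exact solution of the forward equation. For a small enough step size each Euler step is itself a stochastic matrix, so the relative entropy should not increase from one step to the next.

```python
import math

# Hypothetical irreducible 3-state chain; the rates are illustrative only.
Q = [
    [-3.0, 2.0, 1.0],
    [1.0, -1.0, 0.0],
    [0.5, 0.5, -1.0],
]


def euler_step(p, h):
    """One explicit Euler step of dp_x/dt = sum_y p_y * Q[y][x]."""
    n = len(p)
    return [p[x] + h * sum(p[y] * Q[y][x] for y in range(n)) for x in range(n)]


def relative_entropy(p, q):
    """D(p || q) for strictly positive q, with 0 * log 0 taken as 0."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0.0)


h = 1e-3  # small enough that I + h*Q has non-negative entries
p = [0.98, 0.01, 0.01]  # arbitrary starting distributions
q = [0.2, 0.3, 0.5]

values = [relative_entropy(p, q)]
for _ in range(5000):
    p, q = euler_step(p, h), euler_step(q, h)
    values.append(relative_entropy(p, q))

# Largest one-step increase; expected to be non-positive up to rounding.
print(values[0], values[-1])
print(max(b - a for a, b in zip(values, values[1:])))
```

Replacing `q` by the stationary distribution of `Q` gives the setting of Ex 5, where the relative entropy acts as a Lyapunov function.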

Answers

Ans 1. The function $a \mapsto a \log a$ is strictly convex. The remaining terms, $a \log b + a - b$, are the tangent to this function at $a = b$.

Ans 2. Trivial since every row of the $Q$-matrix sums to zero: $\sum_{x} Q_{yx} = 0$ for each state $y$.

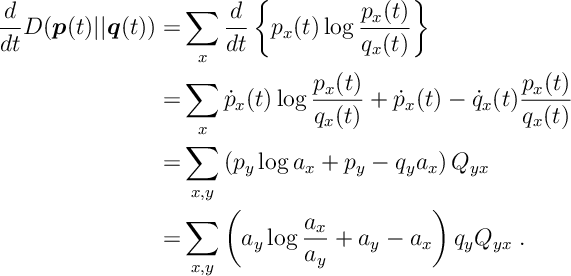

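Ans 3. Define $r_x = p_x/q_x$. Then

$$\begin{aligned}
\frac{d}{dt} D(p\|q) &= \sum_x \frac{dp_x}{dt} \log r_x + \sum_x \left( \frac{dp_x}{dt} - r_x \frac{dq_x}{dt} \right) \\
&= \sum_x \frac{dp_x}{dt} \log r_x - \sum_x r_x \frac{dq_x}{dt} \\
&= \sum_{x,y} q_y Q_{yx} \left[ r_y \log r_x - r_x \right] \\
&= -\sum_{x,y \,:\, x \neq y} q_y Q_{yx} \left[ r_y \log\frac{r_y}{r_x} - r_y + r_x \right].
\end{aligned}$$

In the 2nd equality we use that $\sum_x dp_x/dt = 0$, since $p$ sums to one. In the 3rd equality we apply the forward equation and write $p_y = q_y r_y$. In the 4th equality we apply [2] with $f(y) = r_y \log r_y - r_y$, which gives $\sum_{x,y} q_y Q_{yx} f(y) = 0$, and note that the terms with $x = y$ vanish.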
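The identity for the time derivative can be sanity-checked numerically. The sketch below assumes the identity takes the form $\frac{d}{dt} D(p\|q) = -\sum_{x \neq y} q_y Q_{yx} [ r_y \log(r_y/r_x) - r_y + r_x ]$ with $r_x = p_x/q_x$, and compares it with a central finite difference of $D$ along the forward-equation drift. The rate matrix, the two distributions and all function names are hypothetical.

```python
import math

# Hypothetical irreducible 3-state chain; the rates are illustrative only.
Q = [
    [-3.0, 2.0, 1.0],
    [1.0, -1.0, 0.0],
    [0.5, 0.5, -1.0],
]
N = len(Q)


def drift(p):
    """Right-hand side of the forward equation: (pQ)_x = sum_y p_y * Q[y][x]."""
    return [sum(p[y] * Q[y][x] for y in range(N)) for x in range(N)]


def relative_entropy(p, q):
    """D(p || q) for strictly positive p and q."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q))


def derivative_formula(p, q):
    """Assumed closed form of dD/dt, summed over ordered pairs x != y."""
    r = [px / qx for px, qx in zip(p, q)]
    total = 0.0
    for y in range(N):
        for x in range(N):
            if x != y:
                total += q[y] * Q[y][x] * (r[y] * math.log(r[y] / r[x]) - r[y] + r[x])
    return -total


def derivative_numeric(p, q, h=1e-6):
    """Central difference of D along the drift directions of p and q."""
    dp, dq = drift(p), drift(q)
    plus = relative_entropy(
        [a + h * b for a, b in zip(p, dp)], [a + h * b for a, b in zip(q, dq)]
    )
    minus = relative_entropy(
        [a - h * b for a, b in zip(p, dp)], [a - h * b for a, b in zip(q, dq)]
    )
    return (plus - minus) / (2.0 * h)


p = [0.7, 0.2, 0.1]  # arbitrary strictly positive distributions
q = [0.2, 0.3, 0.5]
print(derivative_formula(p, q), derivative_numeric(p, q))
```

The two printed numbers should agree to several decimal places, and both should be negative since $p \neq q$.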

Ans 4. The inequality holds by applying [1] to [3].

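For the equality condition suppose that $\frac{d}{dt} D(p\|q) = 0$, and write $r_x = p_x/q_x$ as in [3]. By irreducibility $q_y(t) > 0$ for every state $y$ and every $t > 0$. Take two states $x \neq y$ that communicate directly, i.e. $Q_{yx} > 0$. For this $x$ and $y$ the term in the sum given in [3] can only be zero if $r_x = r_y$. By irreducibility, this holds for all pairs of states, thus $r$ is constant. Since $p$ and $q$ both sum to one, $r \equiv 1$ and so $p = q$.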

Ans 5. Obvious: $q(t) = \pi$ is a solution of the forward equation, so this is [4] with $q = \pi$.