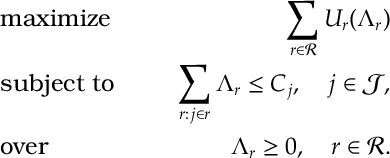

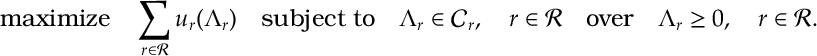

We consider a decomposition of the following network utility optimization problem

SYS:

Here each route had a utility

for rate

. We then maximize the aggregate of these utilities subject to the condition that the rates using a resource

do not exceed the capacity of that resource

.

Here, we saw two examples of queueing systems which implicitly optimized a network utility optimization: a closed queueing network, optimizing a function; and TCP Reno, optimizing an inverse square function. The closed queueing network just naively stored-and-forwarded packets on each route. TCP Reno had an internal network which, just like the closed network, stored-and-forwarded packets but also included a congestion controllers on the each route which controlled the number of packets on each route. In other words, adding the congestion controllers changed the optimization from a log utility function to an inverse square function. We now wish to mathematically describe that how that change in the optimization occurred.

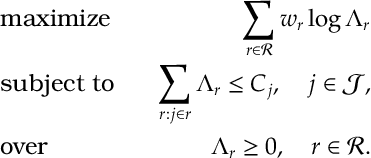

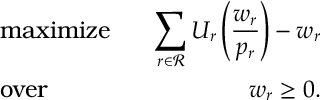

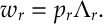

In addition to the system optimization , we consider the following two further optimization problems:

NET():

USR:

LIN:

- The network optimization represents the rate allocated by a queueing network.

- The user optimization represents the optimization conducted by a congestion controller.

We show that the system optimization can be decomposed into the network and the users sub-problems.

Decomposition: a quick discussion

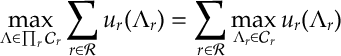

Consider the following optimization

- Here for each route

,

is a concave function and each

is a convex set.

It is fairly, immediate that this is equivalent to each route, , solving the optimization, i.e.

In this way the optimization is decomposed in to smaller problems that can be solved for each route in .

The Network Decomposition: further discussion

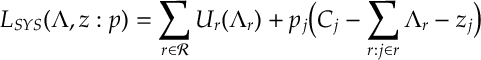

Unfortunately, the optimization does not in general have the nice product structure discussed above. So, instead, let’s look at it’s Lagrangian function:

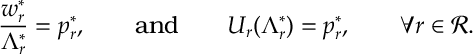

[Network Decomposition] Given , there exists variables

,

,

where

optimizes

,

optimizes USR

(

) for

, and LIN

holds iff

optimizes SYS.

Let’s see what conditions must satisfy in order for NET(

) to have a solution that is also optimal for SYS.

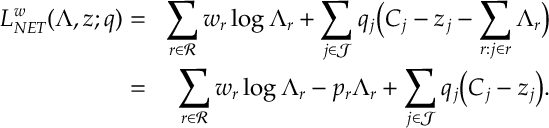

After adding slack variables, , and Lagrange multipliers,

, the Lagrangian of NET(

) is

Here, we use the shorthand . The Lagrangian for SYS differs having

in place of

. The (KKT) conditions for optimality are complementary slackenss, dual/primal feasiblity, and stationarity. The complementary slackness and dual/primal feasibility conditions:

and,

,

, are the same for NET(

) and SYS. Thus, the optimality condition that differs is stationarity. For the NET(

) and SYS problem, this is

Due to the first equality, we can equivalently express in terms of

in the second equality

Notice the first term is LIN, and the second term is precisely the optimality condition for USR

. In otherwords, the conditions which

must satisfy for the optimal solution to NET(

) to be optimal for SYS are precisely to satisfy LIN

and optimality of USR

. So, we can use NET(

), USR

and LIN

to construct a solution to SYS.

Conversely, we know an optimal solution to SYS exists. As discussed, this satisfies the same complementary slackness and feasibility conditions as NET() [for any

]. The SYS problem must also satisfy the stationarity condition

. So we can just define a variable

. Thus we have LIN

and the stationarity condition for NET(

) and, as argued between and ,

is also an optimal solution to USR

. Thus the optimality of SYS implies the existence of solutions simultaneously solving

, USR

(

) and LIN

.

- Decomposition results often rely on some form of separability. Eg.

where

is an objective function or a Lagrangian. This decomposition is somewhat different: it considers what conditions the parameters of certain sub-optimizations must satisfy to solve a global optimization.

In the context of a queueing network, we interpret this result as follows. A congestion controller on each route , chooses a number of packets to send across the network

. As we saw for a closed queueing network, the network solves the optimization

the packets receive rate

and delay/loss

satisfying

. On receiving

, the controller updates

to optimize USR

. This feedback loop optimizes

by respectively solving

,

and

. In the context of allocating resources, we interpret

as wealth decided to be spent by route

and

the price per unit of resource

.