The Neural Tangent Kernel is a way of understanding the training performance of Neural Networks by relating them to Kernel methods. Here we overview the results of the paper [Jacot et al. here]. The paper considers a deep neural network with a fixed amount of data and a fixed depth. The weights applied to neurons are initially independent and normally distributed. We take a limit where the width of each layer tends to infinity.

The main observations of the paper are the following:

- The function represented by a neural network changes according to a kernel when undergoing gradient descent training. This is called the Neural Tangent Kernel (NTK).

- Under a random normally distributed initialization, the NTK is random. However, under an infinite width limit, this kernel is non-random and the output of each neuron an independent gaussian distribution with zero mean and a fixed covariance.

- In the limit, these weights and thus this kernel will not change during training. This is called “Lazy training”.

- In this limiting regime, the neural network parameter converge quickly to a global minimum with weight parameters that are arbitrarily close to the initialized neural network.

These results are significant as they give a way of understanding why Neural networks converge to a optimal solution. (Neural networks are know to be highly non-convex objects and so understanding their convergence under training is highly non-trivial.) What the following argument does not (fully) explain is why neural networks are so expressive, why they generalize well to unseen, or why in practice neural networks outperform kernel methods.

Why Lazy Training Helps Convergence.

We give a short heuristic explanation as to why Lazy training helps us understand the convergence of neural networks. Suppose that the weights of a neural network remain close to the values of their initial weights. That is

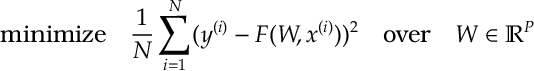

Now suppose we wish to solve

where here ,

is our data.

Notice under stochastic gradient decent evolves (approximately) according to the o.d.e.

![\begin{aligned}

\label{NTK:WTrain}

\frac{dW}{dt} = \hat{\mathbb E} [ \nabla_W F({W(t)},\hat x)(\hat y - F({W(t)},\hat x) ) ] \, ,\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/ccd2ae005eb44d6c329c1e188face681.png?w=840)

where here is the empirical distribution of our data. (I.e.

is selected uniformly at random from

,

.) Therefore, by the chain rule, we expect

to evolve as

![\begin{aligned}

\frac{d F({W(t)},x)}{dt}

& =\nabla_W F({W(t)},x)^\top \frac{dW}{dt}\notag \\

& = - \hat{\mathbb E} \left[ \Big\{\nabla_W F({W(t)},x)^\top \nabla_W F({W(t)},\hat x) \Big\}( F({W(t)},\hat x) - \hat y) \right]\, . \label{NTK:diff0}\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/87349193e7736cfe88e8447dd94f0bea.png?w=840)

The term in curly brackets defines a kernel:

This kernel is the Neural Tangent Kernel; it’s the kernel that you get from the tangent of a neural network, . Under the assumption that training is lazy this kernel should be constant i.e.

Now if the Kernel defines a positive definate matrix on the data (I.e. if is a positive definite matrix), then for

becomes

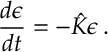

(*)

We can analyze the error between the Neural network’s estimate and the data for which becomes

Since is positive definite

![\begin{aligned}

\epsilon (t) = \epsilon (0) e^{-\hat K t} \xrightarrow[t\rightarrow\infty]{} 0 \, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/173f286a876590564d555ce2f6bebca1.png?w=840)

Now we see that if weights and the Neural Tangent Kernel defined by is (approximately) constant then the neural network trained under converges fast to a state with zero loss.

Next we need to argue that weights and the neural tangent kernel remain approximately constant during training. But first we define a bit more notation.

Neural Network Model.

We consider a dense -layer neural network. Here

gives the number of activations on each layer. The activation functions on each layer are given by the same Lipschitz continuous function

.

We let weights for the

-th layer, and we let

be the weights applied at each layer. We let

.

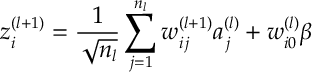

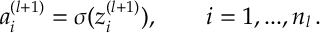

Given the activations for layer

, we define the pre-activations

and then we define

(Notice that above we rescale the weights by a factor of or

.)

Starting with inputs and defining

, we can recursively apply the above equations to get output

. We define

to be the function that maps inputs to outputs, i.e. for input

, we recursively apply the above equations and to output

. 1

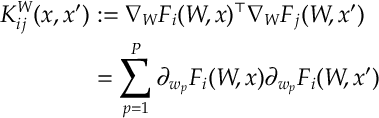

We recall the definition of the Neural Tangent Kernel as given above

Definition [Neural Tangent Kernel] For a neural network with weights , the neural tangent kernel

is defined by

where here indexes all the weights of our neural network.

Neural Networks with Gaussian Weights.

The following shows how initializing the Neural Network effects the distribution of its output. We show that in a multi-layer neural network initialized with Gaussian weights at each neuron as an output that is independent and Gaussian distributed when the width of each layer tends to infinity.

In this limit, each layer affects the covariance of the next layer in a constant way. We can use this to show that the initial Neural Tangent Kernel (which is random in the prelimit) tends to a non-random Kernel.

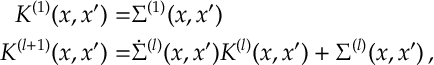

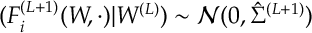

Proposition 1. In the limit as , the output of

converges to a normal distribution where in the limit each component

is independent over

, has mean zero and covariance

which satisfies the recursion

![\begin{aligned}

\Sigma^{(1)}(x,x') &= \frac{1}{n_0} x^\top x' + \beta^2

\\

\Sigma^{(l+1)}(x,x') & =

\mathbb E_{(z,z') \sim \mathcal N(0, \Sigma^{(l)}(x,x'))}

\left[

\sigma(z) \sigma(z')

\right]

+ \beta^2.\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/4b5517d5f89572024fcc0f65d98fdb9f.png?w=840)

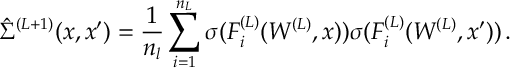

The Neural Tangent Kernel is such that converges to zero for

, whereas for

the NTK converges to a non-random real valued kernel satisfying the recursion

where

![\begin{aligned}

\dot \Sigma^{(l+1)}(x,x') & =

\mathbb E_{(z,z') \sim \mathcal N(0, \Sigma^{(l)}(x,x'))}

\left[

\dot \sigma(z)\dot \sigma(z')

\right] \, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/2cfb14bf488fd7c0480a6b524e752cfe.png?w=840)

Proof. The result follows by induction on the number of layers . For

it is not too hard to check the above conditions since

is Gaussian and there is no limit to be taken.

Lets assume the induction hypothesis that, in the limit where , the output from level

,

is independent mean zero Gaussian with the covariance between inputs

and

given by

. Also assume that, with probability

, the NTK satisfies

![\begin{aligned}

\label{NYK:Hyp}

K^W(x,x') \xrightarrow[n_1,...,n_{L-1} \rightarrow \infty ]{} K^{(L)}(x,x')\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/dc61efc461ba765df4b7d2a05399f772.png?w=840)

where is some deterministic kernel.

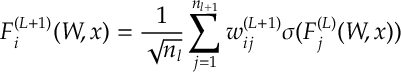

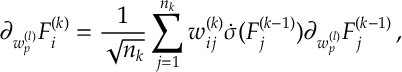

Knowing what happens layer , let’s consider what happens at layer

when

. Recall that the output at level

is

and, if we differentiate a parameter indexed by from layer

then

(Note that this is just BackPropogation)

(Note that this is just BackPropogation)

Let’s analyse . Notice that if we condition of the weights upto layer then the activations

are fixed. So then

in is Normally distributed

where

and the outputs are (conditionally) independent over . By the induction hypothesis, the terms in the sum above are i.i.d. as

. So the strong law of large numbers applies to the sum as we let

![\begin{aligned}

\label{NTK:Cov}

\hat \Sigma^{(L+1)}(x,x') \xrightarrow[n_L \rightarrow \infty ]{w.p. 1} \Sigma^{(L+1)}(x,x')\, ,\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/363a537756527abfeda8a7366d0ef566.png?w=840)

where is as stated above. Thus given the deterministic limit for the covariance , the distribution of

in has the same limit: it is normally distributed limit with mean zero and covariance

and is independent over

, as required.

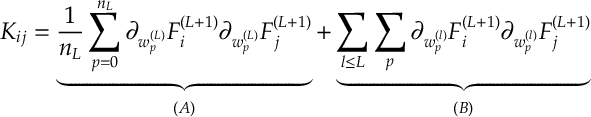

Next, let’s analyse the NTK. Given , we can see that there are two cases depending on whether the weights belong to the final layer or not: I.e. the NTK is

Given , we deal with the two terms and

separately.

Notice from , the terms in are all zero unless

. If

then

is exactly

, above, and so limits to

as given in .

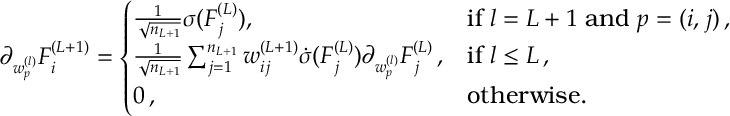

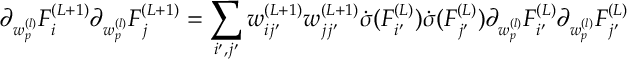

For term , by

So

So

![\begin{aligned}

&\sum_{l \leq L} \sum_p

\partial_{w^{(l)}_p} F_i^{(L+1)}(x)

\partial_{w^{(l)}_p} F_j^{(L+1)}(x')

\\=&

\sum_{i', j'}

w_{ii'}^{(L+1)} w_{jj'}^{(L+1)} \dot{\sigma} ( F_{i'}^{(L)}(x))\dot{\sigma} ( F_{j'}^{(L)}(x'))

\left[

\sum_{l \leq L} \sum_p

\partial_{w^{(l)}_p} F_{i'}^{(L)}(x)

\partial_{w^{(l)}_p} F_{j'}^{(L)}(x')

\right]

\\

\xrightarrow[n_1,..,n_{L-1} \rightarrow \infty ]{}

&

\sum_{i', j'}

w_{ii'}^{(L+1)} w_{jj'}^{(L+1)} \dot{\sigma} ( F_{i'}^{(L)}(x))\dot{\sigma} ( F_{j'}^{(L)}(x'))

K^{(L)}(x,x') \delta_{i'j'}

\\

=&

\sum_{i'=1}^{n_l}

w_{ii'}^{(L+1)} w_{ji'}^{(L+1)} \dot{\sigma} ( F_{i'}^{(L)}(x))\dot{\sigma} ( F_{i'}^{(L)}(x'))

K^{(L)}(x,x')

\\

\xrightarrow[n_L \rightarrow \infty ]{}

&

\delta_{ij} \dot{\Sigma}(x,x') K^{(L)}(x,x') \, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/80784e265c0b21cb06fe4e8ca594e40f.png?w=840) In the above we note that term in square brackets is the NTK for the depth

In the above we note that term in square brackets is the NTK for the depth network. Thus we apply . The only terms that are non-zero after taking this limit are terms where

. We are then left with a sum over i.i.d random variables (indexed by

) thus the strong law of large numbers gives convergence to the limit NTK, as stated. This completes the proof. QED.

Lazy Weights.

We now sketch out why weights remain approximately constant during training. Recall that weights change according to ![\begin{aligned}

\label{NTK:DW}

\frac{dW}{dt} = \hat{\mathbb E} [ \nabla_W F^{(L+1)}({W(t)},\hat x)d^{(L+1)}(t) ) ] \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/24809c9d1f810e9b228989002ca0890f.png?w=840)

Previously we took for the data

. Here we leave this training direction somewhat general. (Though it is important it stays bounded.)

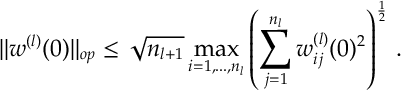

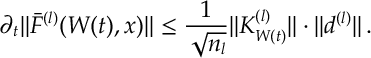

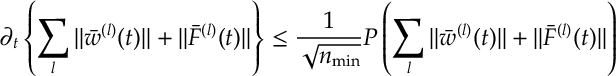

In the limit where , it holds that

and

are (stochastically) bounded and that

(Here is the operator norm and

is the

norm of the empirical distribution over the data.)

We give a sketch proof as otherwise we will spend too much time defining norms etc… To make notation a bit shorter we write and

for

and

.

Proof Sketch. (see original paper for full proof — https://arxiv.org/abs/1806.07572 ) First we show is bounded. It is not hard to show (using Cauchy-Schwartz) that any

matrix satisfies

If the components of are i.i.d. of finite variance, then dividing by

and applying the strong law of large numbers gives a finite upper bound as

. 2

Next we argue that the scaled activations remains bounded. Notice that from Proposition [init] for any input

the preactivations

are independent identically distributed Gaussian. So

is independent over

. If we consider an Euclidean norm for any input

then we get the strong law of large numbers giving a finite limit to

Now let’s analyze the change in . From above

![\begin{aligned}

\partial_t w_{ij}^{(l)}= \hat{\mathbb E} [ \partial_{w^{(l)}_{ij}}F^{(L+1)}({W(t)},\hat x) \cdot d^{(L+1)}(t, \hat x) ) ] \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/8fcf0e080fd260248eba07230abf628d.png?w=840)

We know for backpropogation holds i.e. from recall that

and . We can repeatedly apply this to the above expression so that

![\begin{aligned}

\label{NTK:wn}

\partial_t w_{ij}^{(l)}

=

\frac{1}{\sqrt{n_l}}

\hat{\mathbb E} [

a^{(l)} \cdot d^{(l)}(t,\hat x)

]\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/80544705ab858e24b4c4bdedfed0b79b.png?w=840)

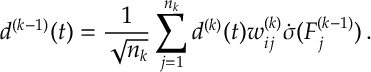

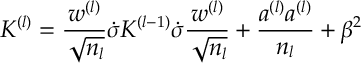

where we inductively define

(Already at this point the division by in should make us think that things are not going to grow when we let

)

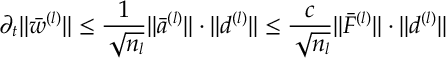

Now it is not hard to check that for any function

. Applying this to

above (dividing by

and applying Cauchy-Schwartz) we get that

where is the Lipschitz constant for the

in

.

We now have two terms to deal with and

. For

we know that this changes according to the rule (*) above. In our case this says that

![\begin{aligned}

\partial_t F^{(l)}({W(t)},x)

& = \hat{\mathbb E} \left[ K_{{W(t)}}^{(l)}(x,\hat x) d^{(l)}(t,\hat x)\right]\, .\end{aligned}](https://appliedprobability.blog/wp-content/uploads/2021/03/fb9b9697edac89bd32efcedbad162ff5.png?w=840)

where is the NTK (at time

)

So to understand we need to understand

and

. Splitting into layers lower than

and at

note that

has the form

So

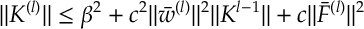

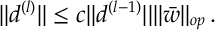

also analyzing , from , we see that

Thus both and

bounded above by increasing polynomials in

and

(but, importantly, not depending on

,

). Thus we can bound above the same polynomial in

. Applying this to and , we see that

for some polynomial . By (a polynomial version of) Grownall’s lemma it can be argued that the solution to this remains bounded for suitably large

. For this reason the norm of both

and

is not changing in time. QED