Sanov’s asks how likely is it that the empirical distribution some IIDRV’s is far from the distribution. And shows that the relative entropy determines the likelihood of being far.

Let be IIDRVs on some finite set

with a probability distribution

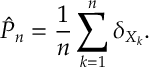

and let

be the empirical distribution of

, i.e.

Ex 1. Show that, almost surely,

Just like Cramer’s Theorem, we wish to get a large deviations view of this law of large numbers convergence. This is Sanov’s Theorem:

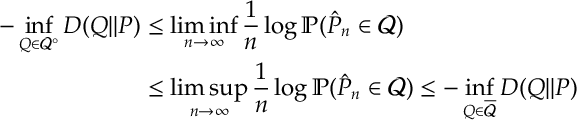

Thrm. [Sanov’s Theorem – finite alphabets]

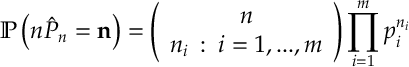

Ex 2. Convince yourself that is a multinomial distribution with

trails parameters

: i.e. for

with

,

Ex 3. The support of a multinomial distribution has size

Ex 4. If for each

then

[ is the entropy of the distribution

.]

Ex 5. If for each

then

The main ideas are now all in place. What follows now are just fiddly details.

We are now more or less done we just need to make the previous result into a LDP upper bound and a lower bound.

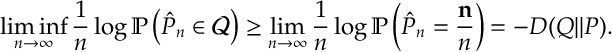

Ex 6. [Sanov lower bound] For each open set

Ex 7. [Sanov upper bound] For each closed set

The last two exercises combined prove Sanov’s Theorem. Sanov’s Theorem can be extended two more abstract probability spaces, however we do not pursue such results currently.

Answers

Ans 1. This is just a strong law of large numbers.

Ans 2. Obvious from the definition of a multinomial distribution.

Ans 3. There are points and

dividers. Thus the result holds.

Ans 4. Take logs and apply the Stirling approximation that . So,

Ans 5. Note that , so combining [2] and [4] gives

Ans 6. Take and take sequence

such that

for each

. Since

is open, eventually

. So

Maximising over gives the result.

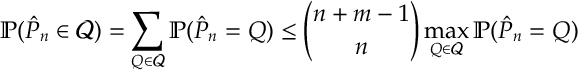

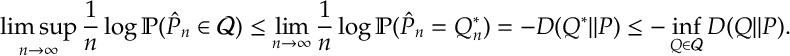

Ans 7. By [3]

Note that the combinatorial term is a polynomial in (so goes to 0 after taking logs and dividing by

). Let

be the distribution achieving the supreme on the right hand side above. The set

is compact. Taking an appropriate subsequence if required,

for some

. Taking logs limits

The equality above is [5].