The Weighted Majority Algorithm is a randomized rule used to learn the best action amongst a fixed reference set.

We consider the following setting

- There are a fixed set of actions

which one may choose. There are a set of outcomes

.

- After choosing an action

, an outcome

occurs and you receives a reward

. Over time a policy

chooses actions

and outcomes

,

occur.

- It is assumed that the function

is known by the policy.

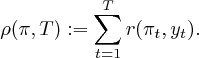

- The accumulated a net reward is

We are interested in how our dynamic policy performs in comparison to each fixed policy

, that is a policy that chooses the same action

at each time. In particular, we are interested in how this compares to the fixed policy which is retrospectively the best.

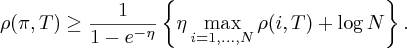

- For this reason, we consider the regret of policy

- We can only retrospectively find the best fixed policy, whilst our policy

must behave adaptively based on historical information. Thus

quantifies how we regret not having had the information to have chosen the best fixed policy.

- Note a ‘good’ policy would have low regret. For instance, we might hope our dynamic policy is as good as the best fixed policy, i.e.

.

Using Blackwell’s Approachability Theorem, we saw that low regret policies exist. See Section [Blackwell]Theorem [blackwell:regret]. One of the key advantages of such algorithms is that is does not place statistical assumptions on the outcome sequence . The Weighted Majority Algorithm is a further example of an algorithm that has asymptotically low regret and does not require statistical assumptions to be placed on the input sequence.

Weighted Majority Algorithm

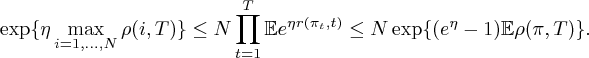

For parameter , the \emph{(exponentially) weighted majority algorithm} is a randomized policy which chooses a policy $\latex i=1,…,N$ at time

with probability

for

and for

We can derived the following regret bound

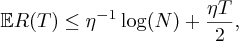

Theorem: If policy is the the weighted majority algorithm

(1) With fixed,

for choice , the above bound states

(2) For fixed the policy,

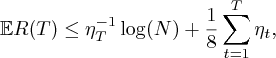

(3) For varrying over time and increasing, we have

for choice , the above bound states

•

•

Proof:

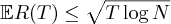

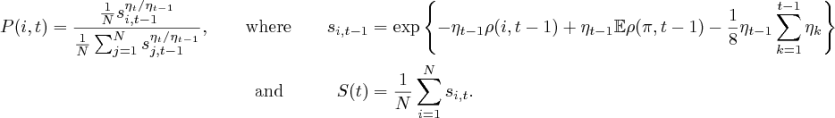

(1) Observe

In the second inequaltiy, we apply the Azuma-Hoeffding Inequality, see Section [Azuma]. Taking logs and rearranging, we gain the required expression . Substituting , we gain expression .

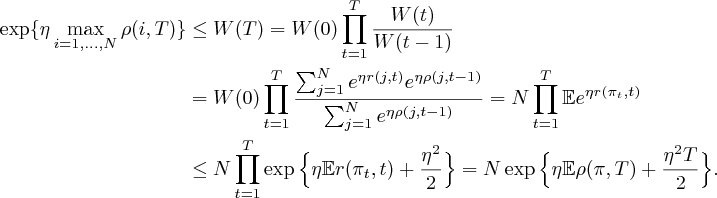

(2) We note the following inequality holds

![\label{MWA:crude bound} \bE e^{\eta r(\pi_t,t)} \leq \bE [ (e^\eta -1)r(\pi_t,t) +1 ] \leq \exp\{ (e^{\eta}-1) \bE r(\pi_t,t)\}.](https://appliedprobability.blog/wp-content/uploads/2017/05/b113f289f63da666f4a2cf569500a9af.png?w=840)

In the first inequality, we bound with the line segment above the exponential. In the second inequality, we apply the bound . Applying bound to inequality , we have

Taking logs and rearranging gives the required bound.

(3) The crux of the proof (1) was that the function is decreasing and thus bounded above by

. We apply the same prinicple, but we have to take account of the change in parameter

.

We can express our weights differently, notice,

will play a similar role to that of

in the proof of (1):

Since , the sequence is decreasing and bounded above by

. Thus, as

, we get the result.