- Dynamic Programs; Bellman’s Equation; An example.

For this section, consider the following dynamic programming formulation:

Time is discrete ;

is the state at time

;

is the action at time

;

Def 1 [Plant Equation][DP:Plant] The state evolves according to functions . Here

This is called the Plant Equation.

A policy choses an action

at each time

. The (instantaneous) reward for taking action

in state

at time

is

and

is the reward for terminating in state

at time

.

Def 2 [Dynamic Program] Given initial state , a dynamic program is the optimization

Further, let (Resp.

) be the objective (Resp. optimal objective) for when the summation is started from

, rather than

.

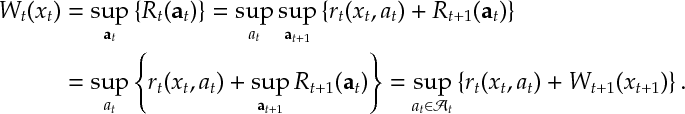

Ex 1 [Bellman’s Equation] and for

where and

.

Ex 2 An investor has a fund: it has pounds at time zero; money can’t be withdrawn; it pays

interest per-year for

years; the investor consumes proportion

of the interest and reinvests the rest.

What should the investor do to maximize consumption?

Answers

Ans 1 Let .

Ans 2 The yearly fund value satisfies . Backward induction, if reward from time

onward is

, then

where

Also, , i.e. last year consume everything. Therefore solution is consume everything last years while

otherwise consume nothing i.e. initially save and then consume in last years.