- The Hamilton-Jacobi-Bellman Equation.

- Heuristic derivation of the HJB equation.

We consider a continuous time analogue of Markov Decision Processes.

Definitions

Time is continuous, $t \in [0,T]$; $X_t$ is the state at time $t$; $\pi_t \in \mathcal{A}$ is the action at time $t$.

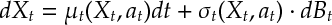

Def 1 [Plant Equation] Given functions $\mu_t(x,a)$ and $\sigma_t(x,a)$, the state evolves according to a stochastic differential equation

$$dX_t = \mu_t(X_t,\pi_t)\,dt + \sigma_t(X_t,\pi_t)\,dB_t,$$

where $B_t$ is an $m$-dimensional Brownian motion. This is called the Plant Equation. It determines how our diffusion process evolves as a function of the control actions.
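To make the plant equation concrete, here is a minimal simulation sketch using an Euler-Maruyama step for a scalar state. The function name `simulate_plant`, the feedback rule `policy(t, x)`, and the example drift and noise level are all illustrative assumptions, not part of the definitions above.

```python
import math
import random


def simulate_plant(mu, sigma, policy, x0, T, delta, rng):
    """Euler-Maruyama path of dX = mu dt + sigma dB on [0, T] (scalar state)."""
    n_steps = int(round(T / delta))
    x, path = x0, [x0]
    for k in range(n_steps):
        t = k * delta
        a = policy(t, x)                        # action chosen from current time and state
        dB = rng.gauss(0.0, math.sqrt(delta))   # Brownian increment, distributed N(0, delta)
        x = x + mu(t, x, a) * delta + sigma(t, x, a) * dB
        path.append(x)
    return path


# Hypothetical example: the action sets the drift and the noise level is constant.
rng = random.Random(0)
path = simulate_plant(
    mu=lambda t, x, a: a,
    sigma=lambda t, x, a: 0.5,
    policy=lambda t, x: -x,   # feedback rule pushing the state towards 0
    x0=1.0, T=1.0, delta=0.01, rng=rng,
)
```

Setting `sigma` to zero turns the recursion into the ordinary Euler scheme for the controlled differential equation, which is a convenient sanity check.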

Def 2 A policy $\Pi$ chooses an action $\pi_t$ at each time $t$. (We assume that $\pi_t$ is adapted and previsible.) Let $\mathcal{P}$ be the set of policies. The (instantaneous) cost for taking action $a$ in state $x$ at time $t$ is $c_t(x,a)$, and $c_T(x)$ is the cost for terminating in state $x$ at time $T$.

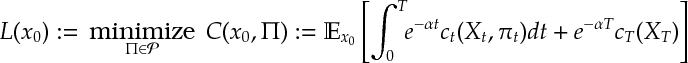

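Def 3 [Diffusion Control Problem] Given initial state $x_0$ and discount rate $\alpha$, a dynamic program is the optimization

$$L(x_0) := \min_{\Pi\in\mathcal{P}} C(x_0,\Pi), \qquad C(x_0,\Pi) := \mathbb{E}_{x_0}\left[ \int_0^T e^{-\alpha t} c_t(X_t,\pi_t)\,dt + e^{-\alpha T} c_T(X_T) \right].$$

Further, let $C_t(x,\Pi)$ (resp. $L_t(x)$) be the objective (resp. optimal objective) when the integral is started from time $t$ with $X_t = x$, rather than from time $0$ with $X_0 = x_0$.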
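Written out, the objective started from time $t$ might read as follows. This is a sketch under one assumption: discounting is measured from the start time $t$ rather than from time $0$, which is the convention that matches the $-\alpha L_t(x)$ term in the HJB equation. The integration variable $s$ is introduced here for illustration.

$$C_t(x,\Pi) = \mathbb{E}\left[ \int_t^T e^{-\alpha (s-t)} c_s(X_s,\pi_s)\,ds + e^{-\alpha (T-t)} c_T(X_T) \,\Big|\, X_t = x \right], \qquad L_t(x) = \min_{\Pi \in \mathcal{P}} C_t(x,\Pi).$$

In particular, at the terminal time the integral is empty, so $L_T(x) = c_T(x)$, which serves as the boundary condition.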

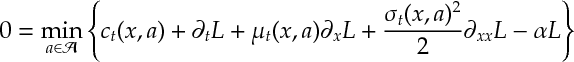

Def 4 [Hamilton-Jacobi-Bellman Equation] For a Diffusion Control Problem (Def 3), the equation

$$0 = \min_{a\in\mathcal{A}} \left\{ c_t(x,a) + \partial_t L_t(x) + \mu_t(x,a)\cdot \partial_x L_t(x) + \frac{1}{2}\left[\sigma_t(x,a)\sigma_t(x,a)^\top\right]\cdot \partial_{xx} L_t(x) - \alpha L_t(x) \right\} \tag{HJB}$$

is called the Hamilton-Jacobi-Bellman equation.¹ It is the continuous-time analogue of the Bellman equation for Markov decision processes.
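For a vector-valued state, the second-order term can be spelled out in components. Writing $\Sigma = \sigma_t(x,a)\sigma_t(x,a)^\top$ for the diffusion matrix and $n$ for the state dimension (both symbols are assumed here for illustration), the dot-product with the Hessian is

$$\Sigma \cdot \partial_{xx} L_t(x) = \sum_{i=1}^{n}\sum_{j=1}^{n} \Sigma_{ij}\, \frac{\partial^2 L_t(x)}{\partial x_i\, \partial x_j} = \operatorname{tr}\!\left( \Sigma\, \partial_{xx} L_t(x) \right).$$

For a scalar state driven by a one-dimensional Brownian motion this reduces to $\sigma_t(x,a)^2\, \partial_{xx} L_t(x)$.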

Heuristically deriving the HJB equation

We heuristically develop a Bellman equation for stochastic differential equations using our knowledge of the Bellman equation for Markov decision processes. The following exercises build on the heuristic derivation of Itô’s formula and lead to a heuristic derivation of the HJB equation.

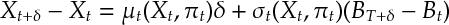

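Ex 1 [Heuristic Derivation of the HJB equation] We suppose (for simplicity) that $X_t$ belongs to $\mathbb{R}$ and is driven by a one-dimensional Brownian motion. Argue that, for small $\delta > 0$, the plant equation (Def 1) is approximated by

$$X_{t+\delta} - X_t \approx \mu_t(X_t,\pi_t)\,\delta + \sigma_t(X_t,\pi_t)\,(B_{t+\delta} - B_t).$$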

Ex 2 [Continued] Argue that, for $\delta$ small and positive, the cost function in Def 3 can be approximated by

$$C_0(x,\Pi) \approx \mathbb{E} \Bigg[ \sum_{t\in \{0,\delta,\ldots,T-\delta\}} \!\! (1-\alpha \delta)^{\frac{t}{\delta}} c_t(X_t,\pi_t)\, \delta + (1-\alpha \delta)^{\frac{T}{\delta}} c_T(X_T) \Bigg].$$
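The two ingredients of this approximation, a Riemann sum in place of the integral and $(1-\alpha\delta)^{t/\delta}$ in place of $e^{-\alpha t}$, can be checked numerically in a deterministic special case. The sketch below assumes a constant running cost $c_t = 1$ and no terminal cost; the parameter values are arbitrary illustrative choices.

```python
import math

alpha, T, delta = 0.5, 2.0, 1e-3
n_steps = int(round(T / delta))

# Discounted Riemann sum for the constant running cost c_t = 1 (an illustrative choice).
# At grid time t = k * delta, the exponent t / delta equals k.
riemann = sum((1 - alpha * delta) ** k * delta for k in range(n_steps))

# Exact value of the integral of exp(-alpha * t) over [0, T].
exact = (1 - math.exp(-alpha * T)) / alpha

print(riemann, exact)
```

Shrinking `delta` should bring the two numbers closer together, with a gap that scales roughly linearly in `delta`.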

Ex 3 [Continued] Argue that the optimal value function approximately satisfies

$$L_t(x) = \min_{a\in\mathcal{A}} \left\{ c_t(x,a)\, \delta + (1-\alpha \delta)\, \mathbb{E}_{x, a} \left[L_{t+\delta}(X_{t+\delta})\right] \right\}.$$

Ex 4 [Continued] Argue that the increment $L_{t+\delta}(X_{t+\delta}) - L_t(X_t)$ can be approximated as follows, with the partial derivatives of $L$ evaluated at $(t, X_t)$:

$$\begin{aligned} &L_{t+\delta}(X_{t+\delta}) - L_t(X_t)\\ \approx{} &\left[ \partial_t L + \mu_t(X_t,\pi_t) \cdot \partial_x L +\frac{\sigma_t(X_t,\pi_t)^2}{2}\, \partial_{xx} L \right] \delta + \partial_x L \cdot \sigma_t(X_t,\pi_t) \cdot (B_{t+\delta} - B_t). \end{aligned}$$

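Ex 5 [Continued] Argue that $L_t(x)$ satisfies the equation

$$0 = \min_{a\in\mathcal{A}} \left\{ c_t(x,a) + \partial_t L_t(x) + \mu_t(x,a)\, \partial_x L_t(x) + \frac{\sigma_t(x,a)^2}{2}\, \partial_{xx} L_t(x) - \alpha L_t(x) \right\},$$

i.e. the HJB equation as required.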
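As a sanity check on the chain of approximations, the discrete Bellman recursion can be iterated in closed form for a linear-quadratic example and compared with the solution of the corresponding HJB equation. Everything in this sketch is an assumption chosen for illustration: the drift $\mu = a$, constant $\sigma$, running cost $x^2 + a^2$, zero terminal cost, and a Brownian increment replaced by $\pm\sqrt{\delta}$ with equal probability. With the ansatz $L_t(x) = p_t x^2 + q_t$, the minimization over $a$ is explicit and gives a recursion for $p$ and $q$.

```python
import math


def lq_backward_recursion(T, delta, alpha, sigma):
    """Iterate the discrete Bellman equation for the ansatz L_t(x) = p_t * x**2 + q_t.

    Assumed model: X_{t+delta} = X_t + a*delta + sigma*sqrt(delta)*xi with xi = +/-1,
    running cost c(x, a) = x**2 + a**2, terminal cost 0.
    """
    n_steps = int(round(T / delta))
    beta = 1.0 - alpha * delta      # one-step discount factor
    p, q = 0.0, 0.0                 # terminal condition L_T(x) = 0
    for _ in range(n_steps):
        # Constant term: discounted noise contribution p*sigma^2*delta plus old constant.
        q = beta * (p * sigma ** 2 * delta + q)
        # Quadratic coefficient after minimizing over a (minimizer a* = -beta*p*x / (1 + beta*p*delta)).
        p = delta + beta * p / (1.0 + beta * p * delta)
    return p, q


p0, q0 = lq_backward_recursion(T=1.0, delta=1e-3, alpha=0.0, sigma=0.5)
print(p0, math.tanh(1.0))
print(q0, 0.25 * math.log(math.cosh(1.0)))
```

For $\alpha = 0$, substituting the same ansatz into the HJB equation gives $\partial_t p = p^2 - 1$ and $\partial_t q = -\sigma^2 p$ with $p_T = q_T = 0$, so $p_t = \tanh(T-t)$ and $q_t = \sigma^2 \log\cosh(T-t)$. The recursion should reproduce these values up to an error of order $\delta$.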

Answers

Ans 1. Apply the heuristic derivation of Itô’s formula [see Ex3].

Ans 2. Follows from the definition of a Riemann integral and since $(1-\alpha\delta)^{t/\delta} \rightarrow e^{-\alpha t}$ as $\delta \rightarrow 0$.

Ans 3. [1] and [2] define the plant equation and objective for a Markov decision process (Def 3). The required equation is the Bellman equation for that MDP.

Ans 4. This is Itô’s formula.

Ans 5. Take expectations in [4] (the Brownian term has expectation zero), substitute into [3], cancel $L_t(x)$ from both sides, divide by $\delta$ and let $\delta \rightarrow 0$.
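The substitution in Ans 5 can be sketched line by line for the scalar case. Taking expectations in the Itô approximation with $X_t = x$ and $\pi_t = a$ gives

$$\mathbb{E}_{x,a}\left[L_{t+\delta}(X_{t+\delta})\right] \approx L_t(x) + \left[ \partial_t L_t(x) + \mu_t(x,a)\, \partial_x L_t(x) + \frac{\sigma_t(x,a)^2}{2}\, \partial_{xx} L_t(x) \right]\delta .$$

Inserting this into the discrete Bellman equation and discarding terms of order $\delta^2$,

$$L_t(x) \approx \min_{a\in\mathcal{A}} \left\{ c_t(x,a)\,\delta + L_t(x) - \alpha L_t(x)\,\delta + \left[ \partial_t L_t(x) + \mu_t(x,a)\, \partial_x L_t(x) + \frac{\sigma_t(x,a)^2}{2}\, \partial_{xx} L_t(x) \right]\delta \right\}.$$

Since $L_t(x)$ does not depend on $a$, it can be subtracted from both sides; dividing the remainder by $\delta$ leaves the HJB equation.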

1. Here $[\sigma\sigma^\top]\cdot \partial_{xx} L_t(x)$ is the dot-product of the Hessian matrix $\partial_{xx} L_t(x)$ with $\sigma\sigma^\top$, i.e. we multiply component-wise and sum up terms.