- Markov’s Inequality; Chebychev’s Inequality; Chernoff’s Bound.

- Bounds for the Poisson Distribution.

Throughout this section we assume that $X$ is a real-valued random variable. The mean, variance and moment generating function of $X$ are, respectively, $\mathbb{E}[X]$, $\mathrm{Var}(X)$ and $\mathbb{E}[e^{\theta X}]$, when these exist.

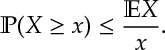

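Ex 1. [Markov’s Inequality] For a positive random variable $X$ and $x > 0$,

$$\mathbb{P}(X \geq x) \leq \frac{\mathbb{E}[X]}{x}.$$

Markov’s inequality can be thought of as an upper-bound on the probability or as a lower-bound on the expectation:

$$\mathbb{E}[X] \geq x\, \mathbb{P}(X \geq x).$$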

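Ex 2. Let $m$ be the median of a positive random variable $X$, i.e. $\mathbb{P}(X \leq m) = 1/2$; then

$$\mathbb{E}[X] \geq \frac{m}{2}.$$

(Similar logic applies to other quantiles.)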
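As a quick numerical illustration of Ex 1 and Ex 2, the sketch below compares Markov's bound with an exact tail. The choice of $X \sim \text{Exponential}(1)$ is an assumption made purely for illustration (it is not from the exercises); for it, $\mathbb{E}[X] = 1$, $\mathbb{P}(X \geq x) = e^{-x}$ and the median is $\log 2$.

```python
import math

# Assumption for illustration only: X ~ Exponential(1),
# so E[X] = 1 and P(X >= x) = exp(-x).
mean = 1.0

def exact_tail(x):
    """Exact P(X >= x) for X ~ Exponential(1)."""
    return math.exp(-x)

def markov_bound(x):
    """Markov's upper bound E[X] / x on P(X >= x), capped at 1."""
    return min(1.0, mean / x)

for x in [0.5, 1.0, 2.0, 5.0, 10.0]:
    print(f"x={x:5.1f}  exact={exact_tail(x):.5f}  markov={markov_bound(x):.5f}")

# The median m solves P(X <= m) = 1/2, i.e. m = log 2.
# Ex 2 then says E[X] >= m / 2.
median = math.log(2.0)
print(f"median/2 = {median / 2:.4f}, mean = {mean:.4f}")
```

The bound holds at every $x$ but is loose: it decays like $1/x$ while the true tail here decays exponentially, which is the motivation for the sharper bounds that follow.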

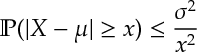

Ex 3. [Chebychev’s Inequality] For $X$ with mean $\mu$ and variance $\sigma^2$, and $x > 0$,

$$\mathbb{P}(|X - \mu| \geq x) \leq \frac{\sigma^2}{x^2}.$$

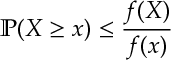

Ex 4. For a positive increasing function $\phi$,

$$\mathbb{P}(X \geq x) \leq \frac{\mathbb{E}[\phi(X)]}{\phi(x)}.$$

Ex 5. [Chernoff’s Bound]

$$\mathbb{P}(X \geq x) \leq \inf_{\theta \geq 0} e^{-\theta x}\, \mathbb{E}[e^{\theta X}].$$
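To see how the Chebychev and Chernoff bounds compare in practice, here is a minimal sketch. It again assumes $X \sim \text{Exponential}(1)$ (an illustrative choice, not part of the exercises), which has mean 1, variance 1 and moment generating function $1/(1-\theta)$ for $\theta < 1$. The Chernoff bound is approximated by minimizing over a grid of $\theta$ values; every grid point gives a valid upper bound, so the grid minimum is too.

```python
import math

# Assumption for illustration only: X ~ Exponential(1).
# Mean 1, variance 1, E[exp(theta X)] = 1 / (1 - theta) for theta < 1.

def exact_tail(x):
    """Exact P(X >= x)."""
    return math.exp(-x)

def chebyshev_bound(x):
    """P(X >= x) <= P(|X - 1| >= x - 1) <= 1 / (x - 1)^2, for x > 1."""
    return min(1.0, 1.0 / (x - 1.0) ** 2)

def chernoff_bound(x, grid=10_000):
    """Minimize exp(-theta x) / (1 - theta) over theta = 0, 1/grid, ..., (grid-1)/grid."""
    return min(math.exp(-(k / grid) * x) / (1.0 - k / grid) for k in range(grid))

for x in [2.0, 5.0, 10.0, 20.0]:
    print(
        f"x={x:5.1f}  exact={exact_tail(x):.3e}  "
        f"chebyshev={chebyshev_bound(x):.3e}  chernoff={chernoff_bound(x):.3e}"
    )
```

For moderate $x$ either bound can be the tighter one, but for large $x$ the Chernoff bound decays exponentially while the Chebychev bound only decays like $1/x^2$.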

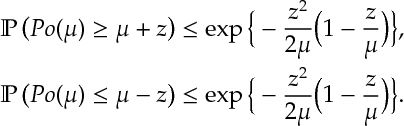

Let’s get some handy exponential and normal tail bounds for the Poisson distribution.

Ex 6. [Some Poisson Bounds] For $Po(\mu)$, a Poisson random variable with parameter $\mu$,

![\mathbb{E} [e^{\theta Po(\mu)}] = e^{\mu (e^{\theta} -1)}](https://appliedprobability.blog/wp-content/uploads/2017/04/10b0c3dec59376846affdc7ac4bd84d7.png?w=840)

and

![\min_{\theta} \left\{e^{-\theta x} \bE [e^{\theta Po(\mu)}]\right\} = \exp \left\{ - \mu \left[ \left( 1- \frac{x}{\mu}\right)+ \frac{x}{\mu}\log\frac{x}{\mu}\right] \right\}](https://appliedprobability.blog/wp-content/uploads/2017/04/798854e613e319f52a4719a58aa2035b.png?w=840)

Ex 7. [Continued]

![\begin{aligned} &{\mathbb P} \left( Po(\mu) \geq x \right) \leq e^{-\mu-x },\qquad \forall x\geq \mu e^2\\ &{\mathbb P} \left( Po(\mu) \leq x \right) \leq e^{-\mu+x },\qquad \forall x\in [0,\mu]. \end{aligned}](https://appliedprobability.blog/wp-content/uploads/2017/04/5667721867b87d747677ee3ab2e326db.png?w=840)
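The upper-tail statements in Ex 6 and Ex 7 can be checked against the exact Poisson tail. The sketch below is only illustrative: the value $\mu = 2$, the test points and the helper names are assumptions chosen for the example. It sums the Poisson probabilities directly (truncating the infinite sum after a fixed number of terms) and compares the result with the closed-form Chernoff bound and, where $x \geq \mu e^2$, with $e^{-\mu - x}$.

```python
import math

def poisson_pmf(mu, k):
    """P(Po(mu) = k), computed in log space to avoid overflow."""
    return math.exp(-mu + k * math.log(mu) - math.lgamma(k + 1))

def poisson_upper_tail(mu, x, terms=500):
    """P(Po(mu) >= x) for integer x, truncating the sum after `terms` terms."""
    return sum(poisson_pmf(mu, k) for k in range(x, x + terms))

def chernoff_poisson(mu, x):
    """Closed-form Chernoff bound from Ex 6, used here for x >= mu."""
    r = x / mu
    return math.exp(-mu * ((1.0 - r) + r * math.log(r)))

mu = 2.0  # hypothetical parameter; mu * e^2 is roughly 14.8
for x in [3, 5, 10, 15, 20]:
    tail = poisson_upper_tail(mu, x)
    chern = chernoff_poisson(mu, x)
    line = f"x={x:3d}  exact={tail:.3e}  chernoff={chern:.3e}"
    if x >= mu * math.e ** 2:
        line += f"  exp(-mu-x)={math.exp(-mu - x):.3e}"
    print(line)
```

Working with `math.lgamma` rather than factorials keeps the probabilities stable for large `k`, and summing the tail directly avoids the cancellation error of computing one minus the lower sum.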

Ex 8. [Continued] For ,

Answers.

Ans 1. $x\, \mathbb{I}[X \geq x] \leq X$; now take expectations.

Ans 2. Applying Markov’s inequality

![\bE [X] \geq m (1- \bP(X\leq m)) = \frac{m}{2}.](https://appliedprobability.blog/wp-content/uploads/2017/04/0a2daea74c6a4888cfed9552e6b3fc88.png?w=840)

Ans 3. Consider $|X - \mu| \geq x$, square both sides and apply Markov [1].

Ans 4. Consider $X \geq x$, apply $\phi$ to both sides and then [1].

Ans 5. Take $\phi(x) = e^{\theta x}$ with $\theta \geq 0$, apply [4], then minimize over $\theta$.

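Ans 6. First equality is straightforward. Second, setting the derivative in $\theta$ of the exponent $-\theta x + \mu (e^{\theta} - 1)$ to zero implies $\theta = \log (x/\mu)$, which after substitution gives the bound.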
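The minimization in Ans 6 can also be sanity-checked numerically. In the sketch below the values of $\mu$ and $x$ are hypothetical, chosen only for illustration; a simple grid search over $\theta$ should land next to $\theta = \log(x/\mu)$, and the minimum value should agree with the closed form in Ex 6.

```python
import math

mu, x = 2.0, 7.0  # hypothetical values with x > mu

def objective(theta):
    """exp(-theta x) * E[exp(theta Po(mu))], using the Poisson MGF from Ex 6."""
    return math.exp(-theta * x + mu * (math.exp(theta) - 1.0))

# Grid search over theta in [0, 5] with step 0.001.
grid = [k / 1000 for k in range(5001)]
theta_grid = min(grid, key=objective)

# Closed-form minimizer and minimum value.
theta_star = math.log(x / mu)
closed_form = math.exp(-mu * ((1.0 - x / mu) + (x / mu) * math.log(x / mu)))

print(f"grid minimizer   = {theta_grid:.3f}")
print(f"log(x/mu)        = {theta_star:.3f}")
print(f"grid minimum     = {objective(theta_grid):.6f}")
print(f"closed-form value = {closed_form:.6f}")
```

Because the exponent is convex in $\theta$, the grid minimizer is always one of the two grid points adjacent to the true minimizer.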

Ans 7. First bound: The Chernoff bound [5] gives

![\mathbb{P} \left(Po(\mu) \geq x \right) \leq \exp \left\{ - \mu \left[ \left( 1- \frac{x}{\mu}\right)+ \frac{x}{\mu}\log\frac{x}{\mu}\right] \right\}](https://appliedprobability.blog/wp-content/uploads/2017/04/8b2d10a1fcc89d1c46d91b2ee0b2a0ec.png?w=840)

By assumption $x \geq \mu e^2$, i.e. $\log (x/\mu) \geq 2$; substituting this into the logarithm above gives the result. Second, by the same Chernoff bound and use that

.

Ans 8. Similar to [7] with ; however now apply the bound

.

